- Updated: February 26, 2026


Why the 2× Swap‑Size Rule Still Matters: History, Myths & Modern Best Practices

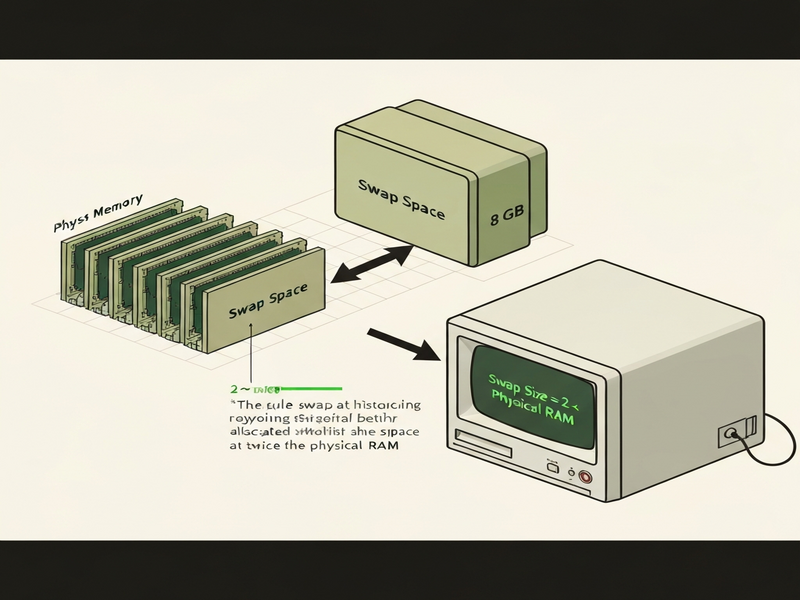

Answer: The “2 × RAM” swap‑size rule began as a pragmatic heuristic in early Unix and BSD systems, where limited disk space, simple paging algorithms, and the need to support process forking without over‑commit led administrators to allocate swap space roughly twice the amount of physical memory.

Why the 2× Swap Rule Still Pops Up in Modern Docs

System administrators, retro‑computing enthusiasts, and IT professionals often wonder why the age‑old recommendation “swap size should be twice the physical memory” still appears in installation guides and cloud‑provider FAQs. The rule isn’t a hard‑coded hardware limit; it’s a blend of historical constraints, empirical observations, and user‑experience psychology that survived the transition from spinning disks to SSDs and from megabytes of RAM to terabytes.

In this article we’ll trace the rule’s origin, unpack the technical rationales that once justified it, and give you up‑to‑date best‑practice guidance for today’s heterogeneous workloads. Along the way we’ll sprinkle useful UBOS platform overview insights, showcase relevant UBOS templates for quick start, and point you to the Enterprise AI platform by UBOS for advanced memory‑management automation.

Historical Background of the 2× Swap Rule

Early Unix and SunOS Recommendations (Late 1980s)

One of the earliest documented instances is the SunOS 4 installation manual (1989), which suggested a swap partition equal to twice the RAM for systems with less than 128 MiB of memory. The rationale was simple:

- Disk space was cheap relative to RAM, so over‑provisioning swap rarely hurt.

- Early paging algorithms assumed a generous swap pool to avoid “out‑of‑memory” panics during heavy multitasking.

BSD and FreeBSD Tuning (1990s‑2000s)

BSD variants applied the same heuristic. The FreeBSD 4.3 installer, for example, defaulted to a 2× swap size, and the project kept that recommendation in its documentation well into the 2000s. The tuning(7) man page explicitly noted that “the kernel’s VM paging algorithms are tuned to perform best when there is at least 2× swap versus main memory.”

Matt Dillon’s 2001 kernel notes added that insufficient swap could degrade the VM page‑scanning code and cause problems when adding more RAM later. The rule persisted not because of a formal proof, but because it “just worked” across a wide range of hardware.

The 4.4BSD Design Paper and McKusick’s Insight

In the seminal “Design and Implementation of the 4.4BSD Operating System,” McKusick cites his own 1986 paper, which described the expected active virtual memory as “one and a half to two times the physical memory.” This range gave system designers a comfortable safety margin while keeping swap partitions manageable on the modest disks of the era.

Technical and Empirical Explanations Behind the 2× Heuristic

Virtual Memory Architecture and Page Tables

Early Unix kernels used a single‑level page table that grew linearly with the amount of virtual address space. Each process required swap backing for every anonymous page that could be evicted. With limited RAM, the kernel needed enough swap to store the worst‑case set of pages that might be swapped out simultaneously. Sizing swap at twice the RAM was meant to ensure that even if every process allocated its maximum anonymous memory, there would still be a swap slot for each page.

Fork‑and‑Exec Semantics Before Over‑Commit

Before modern over‑commit mechanisms, a fork() duplicated the parent’s address space virtually. The child could immediately exec a large binary, temporarily requiring both the parent’s and child’s memory footprints. To avoid a sudden “out‑of‑memory” condition, the system needed swap space at least equal to the combined size of the two processes. Since the child could be as large as the parent, a 2× rule covered this worst‑case scenario.
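The arithmetic behind this argument can be sketched in a few lines. This is only an illustrative model, assuming a kernel that reserves one swap slot for every anonymous page up front, as described above; the function name `fork_fits` and the MiB figures are hypothetical.

```python
def fork_fits(swap_mib: int, process_mib: list[int], fork_index: int) -> tuple[bool, int]:
    """Check whether a fork() can be backed by swap under strict reservation.

    Assumption: every anonymous page of every process reserves a swap slot,
    and the child needs a full reservation equal to its parent's footprint.
    Returns (fits, total_reserved_mib).
    """
    reserved = sum(process_mib) + process_mib[fork_index]  # existing + child copy
    return reserved <= swap_mib, reserved


# One 32 MiB process on a 32 MiB machine: forking needs 64 MiB of swap (2x RAM).
print(fork_fits(swap_mib=64, process_mib=[32], fork_index=0))  # (True, 64)
print(fork_fits(swap_mib=32, process_mib=[32], fork_index=0))  # (False, 64)
```

In this model a process as large as RAM can only fork when swap is at least twice RAM, which is the worst case the heuristic was covering.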

Disk Fragmentation and Contiguous Allocation

On spinning hard drives, swap space was often allocated as a single contiguous partition. Fragmentation could dramatically increase seek times, especially when the kernel needed to write or read many 4 KiB pages in rapid succession. By allocating swap twice the size of RAM, administrators ensured that even after heavy swapping, a large contiguous free region remained, reducing fragmentation risk.

While modern SSDs eliminate seek latency, the historical “contiguous‑swap” mindset still influences default installer scripts, which is why you’ll still see the 2× recommendation in many Linux distributions.

Psychology of the “Rule‑of‑Thumb”

Beyond technical reasons, the rule is easy to remember and communicate. A value of “twice my RAM” is intuitive for non‑expert users, reducing support tickets caused by under‑provisioned swap. As UBOS often notes, clear guidelines improve adoption rates for new platform users.

Modern Relevance and Best‑Practice Recommendations

When Does 2× Still Make Sense?

For legacy servers, embedded devices, or virtual machines that still run on HDDs, the 2× rule remains a safe baseline. It guarantees that:

- Heavy batch jobs won’t exhaust swap during peak load.

- System crash dumps (e.g., kdump) have enough space for a full memory snapshot.

- Administrators can avoid “out‑of‑memory” surprises without constant monitoring.

UBOS’s Workflow automation studio can automatically provision swap based on detected RAM, making the rule a convenient default for automated deployments.

Why the Rule Is Overkill on Modern SSD‑Backed Systems

Today, servers often ship with 64 GiB or more of RAM and terabytes of SSD storage. Allocating 2× swap would waste precious SSD endurance and increase boot times. Modern kernels support:

- Swap files instead of fixed partitions – they can be created, resized, or removed without repartitioning, although Linux requires a swap file to be fully allocated (no holes).

- zswap / zram compression – compressed in‑memory swap reduces the need for large disk‑backed swap.

- Dynamic over‑commit control – sysctl knobs (vm.overcommit_memory) let you safely rely on virtual memory without massive swap pools.

For such environments, a common recommendation is “1× RAM or a minimum of 4 GiB, whichever is larger,” supplemented by ElevenLabs AI voice integration for monitoring alerts when swap usage exceeds 80%.
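To see how swap size interacts with the over‑commit knob mentioned above, here is a minimal sketch of the commit limit Linux applies when vm.overcommit_memory is set to 2 (strict accounting). The formula follows the kernel's documented CommitLimit calculation; the function name is hypothetical, and the sketch assumes vm.overcommit_kbytes is unset and uses the default vm.overcommit_ratio of 50.

```python
GIB_KIB = 1024 * 1024  # KiB per GiB


def commit_limit_kib(ram_kib: int, swap_kib: int,
                     overcommit_ratio: int = 50, hugetlb_kib: int = 0) -> int:
    """Approximate CommitLimit under vm.overcommit_memory=2.

    CommitLimit = (RAM - huge pages) * overcommit_ratio / 100 + swap
    """
    return (ram_kib - hugetlb_kib) * overcommit_ratio // 100 + swap_kib


ram = 64 * GIB_KIB
print(commit_limit_kib(ram, 4 * GIB_KIB) // GIB_KIB)    # 36  (4 GiB swap)
print(commit_limit_kib(ram, 128 * GIB_KIB) // GIB_KIB)  # 160 (2x RAM swap)
```

With strict accounting and the default ratio, a small swap area leaves the commit limit below physical RAM, so in that mode it is usually the ratio, not a huge swap pool, that gets tuned.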

Tools to Monitor and Adjust Swap Usage

Effective memory management now relies on continuous observation:

- UBOS AI marketing agents can ingest vmstat and sar metrics, then trigger scaling actions.

- Prometheus + Grafana dashboards visualize swap pressure over time, helping you decide when to shrink or expand swap.

- UBOS pricing plans include managed monitoring tiers that automatically adjust swap based on workload patterns.

Read more about UBOS pricing plans to see how managed services can offload this operational burden.
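If you want a quick check without any of these tools, a rough sketch like the following can compute swap usage from the text of /proc/meminfo on Linux. The function name, the sample data, and the 80% threshold (taken from the alerting suggestion earlier in this article) are illustrative assumptions, not part of any product's API.

```python
def swap_used_percent(meminfo_text: str) -> float:
    """Return swap usage as a percentage, given the contents of /proc/meminfo."""
    fields = {}
    for line in meminfo_text.splitlines():
        key, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts:
            fields[key.strip()] = int(parts[0])  # values are reported in kB
    total = fields.get("SwapTotal", 0)
    if total == 0:
        return 0.0  # no swap configured
    return 100.0 * (total - fields.get("SwapFree", 0)) / total


# Hypothetical sample; on a real Linux host use open("/proc/meminfo").read().
SAMPLE = """MemTotal:       16384000 kB
SwapTotal:       4194304 kB
SwapFree:         524288 kB
"""

usage = swap_used_percent(SAMPLE)
print(f"swap used: {usage:.1f}%")  # swap used: 87.5%
if usage > 80.0:
    print("ALERT: swap usage above 80%")
```

A one‑off reading like this says little on its own; sustained pressure over several minutes is the more meaningful signal.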

Practical Checklist for New Deployments

Use the following MECE‑structured checklist when provisioning swap on a fresh system:

- Identify total physical RAM.

- Determine storage type (HDD vs. SSD) and endurance budget.

- Choose swap strategy:

  - Static partition (2× RAM) for HDD‑based legacy boxes.

  - Swap file (size = max(4 GiB, 1× RAM)) for SSDs.

  - Compressed zram (e.g., 25% of RAM) for containerized workloads.

- Configure vm.swappiness (default 60) – lower for SSDs (10‑20) to favor RAM.

- Set up monitoring alerts (e.g., swap > 80% for > 5 min).

- Document the configuration in your UBOS portfolio examples for future reference.
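The strategy step of the checklist above could be encoded roughly as follows. Treat it as a sketch: the function name `plan_swap`, the dictionary keys, and the choice of 10 as the SSD swappiness value (the low end of the 10‑20 range) are assumptions made for illustration.

```python
GIB = 1024 ** 3


def plan_swap(ram_bytes: int, storage: str, containerized: bool = False) -> dict:
    """Pick a swap strategy and size (in bytes) following the checklist."""
    if containerized:
        # Compressed zram at 25% of RAM; swappiness left at the default of 60.
        return {"strategy": "zram", "size_bytes": ram_bytes // 4, "swappiness": 60}
    if storage == "hdd":
        # Static partition at 2x RAM for HDD-based legacy boxes.
        return {"strategy": "partition", "size_bytes": 2 * ram_bytes, "swappiness": 60}
    if storage == "ssd":
        # Swap file sized max(4 GiB, 1x RAM), with lowered swappiness.
        return {"strategy": "swapfile", "size_bytes": max(4 * GIB, ram_bytes), "swappiness": 10}
    raise ValueError(f"unknown storage type: {storage!r}")


for ram_gib, storage in [(8, "hdd"), (2, "ssd"), (64, "ssd")]:
    plan = plan_swap(ram_gib * GIB, storage)
    print(ram_gib, storage, plan["strategy"], plan["size_bytes"] // GIB, "GiB")
```

Keeping the decision in one small function makes it easy to record the chosen values alongside the rest of the deployment documentation.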

Conclusion – Apply the Right Swap Strategy for Your Workload

The 2× swap rule is a relic of an era when RAM was scarce, disks were plentiful, and paging algorithms were simple. Its persistence is a testament to its practicality, not to any immutable technical law. Modern systems can often get by with far less swap, especially when leveraging SSDs, compression, and sophisticated over‑commit controls.

Nevertheless, for mission‑critical servers, legacy hardware, or environments where you cannot guarantee continuous monitoring, the 2× heuristic remains a safe fallback. The key is to evaluate your hardware, workload, and operational constraints, then choose the most efficient swap configuration.

Ready to modernize your memory‑management workflow? Explore the Web app editor on UBOS to prototype a custom swap‑monitoring dashboard, or join the UBOS partner program for dedicated support.

For a deeper dive into AI‑driven memory optimization, check out the AI YouTube Comment Analysis tool or the AI SEO Analyzer – both showcase how UBOS leverages AI to turn raw data into actionable insights.

Finally, if you’d like to read the original community discussion that sparked this investigation, see the original Stack Exchange discussion.