- Updated: January 7, 2026


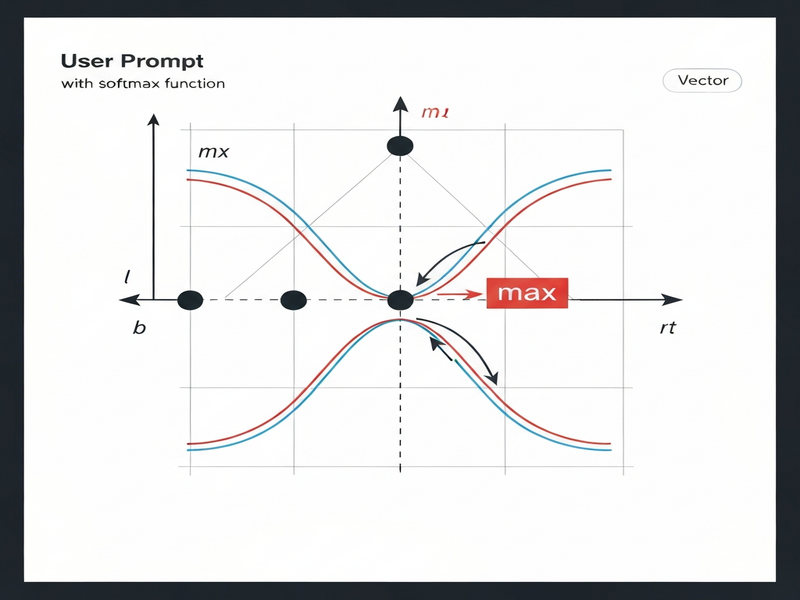

Implementing Softmax from Scratch: Avoiding Numerical Stability Pitfalls

Understanding the Softmax activation function is essential for anyone working with neural networks. In a recent MarkTechPost article, the author walks through a step‑by‑step implementation of Softmax from scratch, highlighting a common numerical stability issue and demonstrating a simple yet effective fix.

The naive implementation computes the exponentials of the raw logits directly and then normalises them. While conceptually straightforward, this approach overflows when a logit is large and positive, and underflows to zero when every logit is very negative, which leaves a zero denominator. The article shows how subtracting the maximum logit from each entry before applying the exponential, known as the max‑subtraction trick, prevents these problems. The shift cancels in the ratio, so the probabilities are mathematically unchanged, while the largest exponent becomes exactly zero and the denominator is always at least one.

Key take‑aways include:

- Why Softmax is used to convert raw scores into a probability distribution.

- The numerical instability that arises from very large positive or very large negative logits.

- A robust, vectorised PyTorch implementation that incorporates the max‑subtraction technique.

- How the stable Softmax improves loss calculation and gradient flow during training.
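The last two takeaways can be sketched in PyTorch roughly as follows. This is an illustration under stated assumptions rather than the article's implementation: the names `stable_softmax` and `stable_cross_entropy`, the example logits, and the targets are hypothetical, and the built-in `torch.softmax` and `F.cross_entropy` are used only as reference points.

```python
import torch
import torch.nn.functional as F

def stable_softmax(logits: torch.Tensor, dim: int = -1) -> torch.Tensor:
    # Subtract the per-row maximum so every exponent is <= 0.
    shifted = logits - logits.max(dim=dim, keepdim=True).values
    exps = torch.exp(shifted)
    return exps / exps.sum(dim=dim, keepdim=True)

def stable_cross_entropy(logits: torch.Tensor, targets: torch.Tensor) -> torch.Tensor:
    # Stay in log space: log_softmax = shifted - log(sum(exp(shifted))).
    shifted = logits - logits.max(dim=-1, keepdim=True).values
    log_probs = shifted - torch.log(torch.exp(shifted).sum(dim=-1, keepdim=True))
    # Select the log-probability of the correct class in each row.
    picked = log_probs.gather(-1, targets.unsqueeze(-1)).squeeze(-1)
    return -picked.mean()

# Assumed example batch: one moderate row and one row of extreme logits.
logits = torch.tensor([[1.0, 2.0, 3.0],
                       [1000.0, 1001.0, 1002.0]], requires_grad=True)
targets = torch.tensor([2, 0])

with torch.no_grad():
    # Naive version for contrast: the extreme row becomes inf / inf.
    naive = torch.exp(logits) / torch.exp(logits).sum(dim=-1, keepdim=True)

probs = stable_softmax(logits)
loss = stable_cross_entropy(logits, targets)
loss.backward()  # gradients flow through the stable computation

print("naive :", naive)
print("stable:", probs)
print("loss  :", loss.item(), "reference:", F.cross_entropy(logits, targets).item())
print("finite gradients:", torch.isfinite(logits.grad).all().item())
```

Computing the loss from log-probabilities, instead of taking `log` of the softmax output, avoids `log(0)` when a probability underflows. In practice the built-in functions already apply equivalent safeguards, so a hand-written version is mainly useful for understanding.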

Below is a custom illustration we generated to visualise the Softmax function together with the stability trick:

For a deeper dive, read the original article on MarkTechPost.

By applying the stability fix, developers can ensure their models train reliably, even with extreme input values, and avoid subtle bugs that stem from floating‑point overflow.