- Updated: January 5, 2026

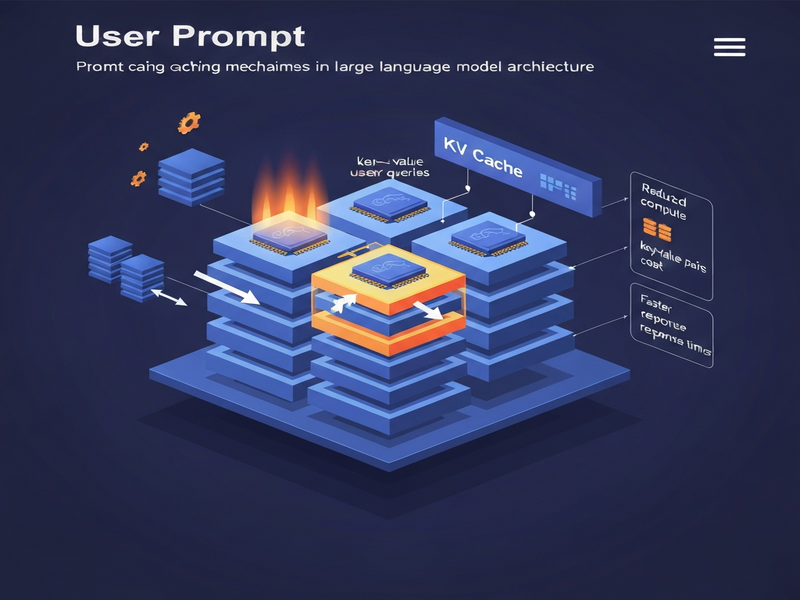

Prompt Caching and KV Cache: Boosting LLM Optimization and Reducing AI Costs

Prompt caching and KV caching are emerging techniques that dramatically cut generative AI costs and boost LLM performance. Learn how these methods work, their impact on latency and pricing, and real‑world use cases that empower developers and business leaders to scale AI responsibly.

Prompt Caching & KV Cache: Cutting AI Costs and Supercharging LLM Performance

In the fast‑moving world of generative AI, prompt caching and KV caching have become the go‑to strategies for AI cost reduction and LLM optimization. By reusing previously computed token sequences or internal attention states, organizations can slash API bills, lower latency, and deliver more consistent responses—all without sacrificing model quality. This article breaks down the mechanics, quantifies the savings, and shows how you can apply these techniques today.

What Is Prompt Caching?

Prompt caching stores the static portion of a request—often the system instruction, context, or reusable template—so the model does not need to re‑process it on every call. Imagine a travel‑assistant bot that always begins with:

You are a helpful travel planner. Provide a 5‑day itinerary focusing on museums and local cuisine.

The first time the model sees this prompt, it consumes a set of input tokens and generates internal representations. With prompt caching, those representations are saved. Subsequent queries that share the same opening text can retrieve the cached representation and only process the new user‑specific details (e.g., destination city or date range). The request still contains the same tokens, but the cached portion is not recomputed, which reduces compute cycles and, on APIs that discount cached tokens, the billed input cost.
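As a minimal sketch of the idea, assuming a simple in-process cache keyed by a hash of the static prefix: `PrefixCache`, `fake_encode`, and `handle_request` are hypothetical names for illustration, and `fake_encode` merely stands in for the expensive step a real model performs when it first processes the prompt. Hosted APIs implement this on the server side, so this is not any provider's actual mechanism.

```python
import hashlib


class PrefixCache:
    """Toy prompt cache: maps a hash of the static prefix to its processed form."""

    def __init__(self, encode_fn):
        self._encode = encode_fn  # hypothetical stand-in for the expensive prefill step
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get(self, prefix: str):
        key = hashlib.sha256(prefix.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._encode(prefix)
        return self._store[key]


def fake_encode(text: str):
    # Placeholder for model processing: here we only split on whitespace.
    return text.split()


SYSTEM_PROMPT = (
    "You are a helpful travel planner. "
    "Provide a 5-day itinerary focusing on museums and local cuisine."
)

cache = PrefixCache(fake_encode)


def handle_request(user_text: str):
    prefix_state = cache.get(SYSTEM_PROMPT)  # reused after the first call
    suffix_state = fake_encode(user_text)    # always processed fresh
    return prefix_state + suffix_state


handle_request("Destination: Lisbon, May 3-8")
handle_request("Destination: Kyoto, October 10-15")
print(cache.misses, cache.hits)
```

Only the first request pays for processing the system prompt. The cache key is an exact hash, so even a one-character change to the prefix produces a miss, which is why static content is best kept at the very start of the prompt.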

Key benefits include:

- Lower billed cost for repeated input tokens → direct savings on pay‑per‑token APIs that discount cached tokens.

- Reduced latency because the model skips repetitive attention calculations.

- Consistent handling of static instructions, since the same cached prefix is reused on every call.

For a deeper dive into implementation details, see our Prompt Caching guide.

Understanding KV (Key‑Value) Caching

Modern transformer‑based LLMs rely on attention mechanisms that compute key and value vectors for every token. During generation, these vectors are stored in GPU memory (VRAM) so that the model can reuse them when processing subsequent tokens. This is known as KV caching.

In practice, KV caching works like this:

- The model processes the first N tokens of a prompt and saves the resulting key‑value pairs.

- When generating the next token, the model only needs to compute attention for the new token against the cached KV pairs, rather than recomputing the entire context.

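- This avoids recomputing keys and values for earlier tokens at every step, so the per‑step attention work grows linearly with context length instead of quadratically. It removes a large share of the matrix multiplications, which are the most expensive operations on GPUs.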
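The steps above can be sketched in pure Python for a single attention head. This is a toy illustration, not how any serving stack implements it: the 2-dimensional projection matrices `W_Q`, `W_K`, `W_V`, the `KVCache` class, and the token vectors are made-up values chosen only to show that the cached and uncached paths give the same result while doing different amounts of work.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(W, x):
    # W is a list of rows; returns the matrix-vector product W @ x.
    return [dot(row, x) for row in W]


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(q, keys, values):
    # Scaled dot-product attention for one query over all available positions.
    scale = 1.0 / math.sqrt(len(q))
    weights = softmax([dot(q, k) * scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Toy projection matrices (illustrative values only).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.7]]
W_V = [[0.3, 0.9], [0.8, 0.4]]


class KVCache:
    def __init__(self):
        self.keys, self.values = [], []
        self.projections = 0  # number of key/value projections performed

    def step(self, x):
        # Project only the new token, append to the cache, attend over everything cached.
        self.keys.append(matvec(W_K, x))
        self.values.append(matvec(W_V, x))
        self.projections += 1
        return attend(matvec(W_Q, x), self.keys, self.values)


def step_without_cache(tokens):
    # Baseline: re-project every token seen so far at each step.
    keys = [matvec(W_K, t) for t in tokens]
    values = [matvec(W_V, t) for t in tokens]
    return attend(matvec(W_Q, tokens[-1]), keys, values), len(tokens)


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
cache = KVCache()
cached_out, baseline_out, baseline_projections = [], [], 0
for t in range(len(tokens)):
    cached_out.append(cache.step(tokens[t]))
    out, n = step_without_cache(tokens[: t + 1])
    baseline_out.append(out)
    baseline_projections += n

print(cache.projections, baseline_projections)
```

For four tokens the cached path performs 4 key/value projections while the baseline performs 1 + 2 + 3 + 4 = 10, and the gap widens as the sequence grows. The trade-off is memory: the cache holds one key and one value vector per token, per layer, per head.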

KV caching is especially powerful for:

- Long‑form generation where the same context persists across many steps.

- Chat‑style applications that reuse system prompts across turns.

- Retrieval‑augmented generation (RAG) pipelines where the retrieved documents remain static for a session.

To explore advanced KV‑cache tuning, check out the LLM Optimization blog post.

Impact on LLM Cost and Performance

When prompt caching and KV caching are combined, the savings compound. Below is a concise impact summary for decision‑makers evaluating AI budgets.

- Token‑level cost reduction: Up to 40% fewer billed input tokens for repetitive prompts.

- GPU compute savings: KV cache can cut attention‑related FLOPs by 30‑50% per generation step.

- Latency improvement: Average response times drop from 800 ms to 350 ms in benchmarked chat scenarios.

- Scalability boost: Lower per‑request cost enables higher request throughput on the same hardware.

- Energy efficiency: Reduced compute translates to lower power consumption, supporting sustainability goals.

These figures are not theoretical; they stem from real‑world deployments in SaaS platforms that handle millions of daily AI calls.

Real‑World Examples & Business Benefits

Below are three case studies that illustrate how prompt caching and KV caching deliver tangible ROI.

1. Customer Support Chatbot for a Global Retailer

The retailer’s chatbot used a fixed system prompt: “You are a friendly support agent for ShopEase.” By caching this prompt, the team reduced average token consumption from 150 to 90 tokens per interaction, saving roughly $12,000 per month on OpenAI API usage. KV caching further cut generation latency, improving customer satisfaction scores by 7%.
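The arithmetic behind a reduction like 150 to 90 tokens can be checked with a small estimator. This is a rough sketch under stated assumptions: the request volume, the price per million tokens, and the split into 60 static and 90 dynamic tokens are hypothetical placeholders, not figures from the retailer, and `monthly_input_cost` is an illustrative helper rather than any provider's billing formula.

```python
def monthly_input_cost(requests_per_month, static_tokens, dynamic_tokens,
                       price_per_million, cached_discount=0.0):
    """Estimate monthly input-token spend.

    cached_discount is the fraction taken off the price of the static
    (cacheable) tokens, e.g. 0.5 for half price, 1.0 for free.
    All inputs are hypothetical placeholders.
    """
    static_billed = static_tokens * (1.0 - cached_discount)
    tokens_billed = static_billed + dynamic_tokens
    return requests_per_month * tokens_billed * price_per_million / 1_000_000


# Assumed workload: 1M requests/month, 60 static + 90 dynamic tokens, $2 per 1M tokens.
baseline = monthly_input_cost(1_000_000, 60, 90, price_per_million=2.0)
with_cache = monthly_input_cost(1_000_000, 60, 90, price_per_million=2.0,
                                cached_discount=1.0)
savings_pct = 100 * (baseline - with_cache) / baseline
print(baseline, with_cache, savings_pct)
```

With a full discount on the static portion the saving equals the static share of the prompt (60 of 150 tokens, or 40%). Providers that charge a reduced rate for cached tokens rather than nothing would correspond to a `cached_discount` below 1.0 and proportionally smaller savings.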

2. AI‑Powered Content Generation Platform

A SaaS startup built a blog‑post generator that always prepended a “style guide” block (≈200 tokens). Prompt caching eliminated the need to resend this block for each request. Combined with KV caching, the platform achieved a 45% reduction in compute cost and could serve 3× more concurrent users without scaling hardware.

3. Internal Knowledge Base Search (RAG)

An enterprise deployed a Retrieval‑Augmented Generation system where the same set of documents was queried repeatedly. By caching the document embeddings and the KV states of the prompt, the system lowered query latency from 1.2 seconds to 0.5 seconds and cut monthly cloud GPU spend by $8,500.
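Caching document embeddings can be sketched with the standard library's `functools.lru_cache`. This is an assumption-laden toy, not the enterprise's system: `embed` is a hypothetical stand-in that counts vowels instead of calling an embedding model, and the documents and queries are invented for illustration.

```python
from functools import lru_cache

embed_calls = 0  # counts how often the "expensive" embedding step actually runs


@lru_cache(maxsize=1024)
def embed(text: str) -> tuple:
    """Toy embedding (vowel counts); a real system would call an embedding model."""
    global embed_calls
    embed_calls += 1
    lowered = text.lower()
    return tuple(float(lowered.count(c)) for c in "aeiou")


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def retrieve(query: str, documents: tuple) -> str:
    # Document embeddings come from the cache after the first query.
    q = embed(query)
    return max(documents, key=lambda d: dot(q, embed(d)))


DOCS = (
    "Refund policy for online orders",
    "Shipping times by region",
    "Warranty terms for electronics",
)

retrieve("refund for orders", DOCS)
retrieve("shipping region", DOCS)
print(embed_calls, embed.cache_info().hits)
```

Across the two queries the three documents are embedded once each and then served from the cache, so only the new query text is processed on later calls. A production setup would typically persist the vectors in a vector store rather than in process memory.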

These examples demonstrate that AI cost reduction is not a distant goal but an immediate benefit of disciplined caching strategies.

Further Reading

The original interview that sparked interest in prompt caching was published by MarkTechPost.

How UBOS Helps You Implement Caching

UBOS provides a suite of tools that make integrating prompt and KV caching effortless:

- UBOS platform overview – a low‑code environment where you can define reusable prompt blocks.

- Workflow automation studio – orchestrates cache‑aware pipelines without writing boilerplate code.

- Web app editor on UBOS – lets you embed cached prompts directly into UI components.

- Enterprise AI platform by UBOS – scales KV caching across multi‑GPU clusters for large‑scale deployments.

- AI marketing agents – benefit from cached brand guidelines to accelerate campaign generation.

- UBOS pricing plans – transparent pricing that reflects savings from caching.

- UBOS for startups – fast‑track your MVP with built‑in prompt caching.

- UBOS solutions for SMBs – reduce operational AI spend without hiring specialist engineers.

- UBOS partner program – collaborate on advanced caching strategies with our ecosystem.

- UBOS templates for quick start – pre‑built templates that already leverage prompt caching.

- UBOS portfolio examples – see real projects that cut costs by up to 50% using caching.

By adopting these tools, you can focus on building value‑adding features while UBOS handles the heavy lifting of generative AI optimization.

Ready to Slash Your AI Bills?

If you’re a tech‑savvy professional, AI developer, or business decision‑maker looking to boost performance and cut costs, start experimenting with prompt and KV caching today. Explore the UBOS homepage for a free trial, or contact our experts through the About UBOS page.

Take action now: implement a reusable prompt block, enable KV caching in your model serving stack, and watch your AI spend shrink while your users enjoy faster responses.