- Updated: February 27, 2026

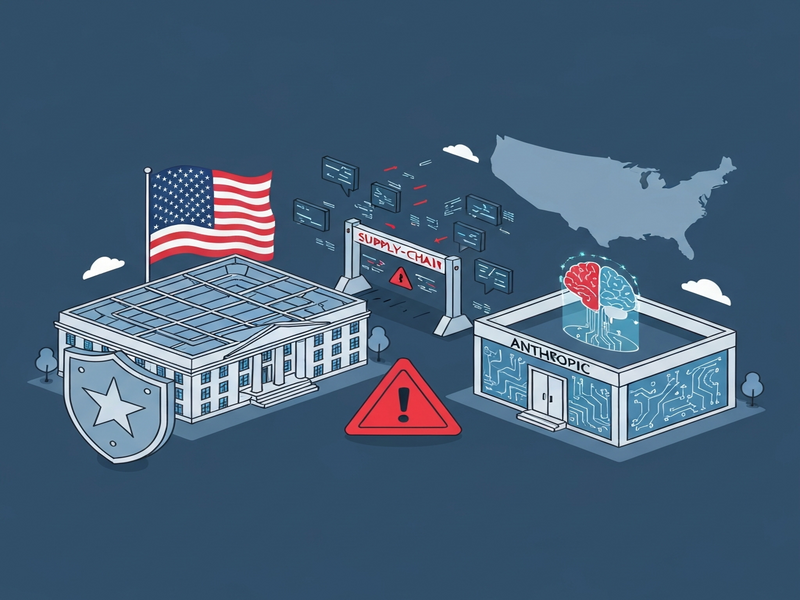

Pentagon Designates Anthropic as Supply‑Chain Risk, Restricts AI Use in Defense Contracts

The Pentagon has officially designated Anthropic as a supply‑chain risk, barring all defense contractors from using its AI models and forcing a rapid shift in the U.S. government’s AI procurement strategy.

Pentagon’s decisive move against Anthropic

On February 27, 2026, Defense Secretary Pete Hegseth announced that Anthropic, the creator of the Claude family of large language models, is now classified as a “supply‑chain risk” for the Department of Defense (DoD). The designation immediately prohibits any DoD contractor, supplier, or partner from engaging in commercial activity with Anthropic, effectively cutting off the company’s access to federal defense contracts.

Background: Anthropic’s rise and recent AI policy turbulence

Anthropic, founded in 2021 by former OpenAI executives, quickly became a leading AI contender with its Claude series, praised for “helpful” and “harmless” outputs. However, the company’s commitment to “effective altruism” and its refusal to grant unrestricted model access to the Pentagon sparked a policy clash.

In the past year, the U.S. government has tightened AI governance, issuing directives that demand full, unrestricted access to AI models for “all lawful purposes,” including autonomous weapon systems and mass‑surveillance capabilities. Anthropic’s stance—refusing to waive its safety guardrails—positioned it at odds with the administration’s aggressive AI‑for‑defense agenda.

The standoff: From negotiations to a supply‑chain blacklist

The Pentagon’s ultimatum arrived after a week of intense negotiations. The DoD demanded that Anthropic:

- Allow the Department of Defense to use Claude for any lawful purpose, without additional safety constraints.

- Provide source‑code access for integration with autonomous systems.

- Accept the possibility of the Defense Production Act being invoked.

Anthropic’s leadership responded with a public statement emphasizing the need for “ethical guardrails” and warned that unrestricted use could lead to “unintended harmful outcomes.” The deadline—Friday, 5:30 PM EST—passed without a compromise, prompting Secretary Hegseth to invoke the supply‑chain risk designation.

Implications for defense contracts and AI supply‑chain security

The designation carries immediate and far‑reaching consequences:

- Contract cancellations: Ongoing contracts that rely on Claude for data analysis, predictive maintenance, or decision‑support will be terminated within 30 days.

- Vendor realignment: Contractors such as Palantir and AWS that embed Claude into their platforms must replace the model with alternatives (e.g., OpenAI’s GPT‑4 or Google’s Gemini).

- Supply‑chain audit acceleration: The DoD will expand its AI supply‑chain risk assessment framework, scrutinizing all third‑party AI providers for “national‑security‑relevant dependencies.”

- Innovation slowdown: Start‑ups and research labs that previously partnered with Anthropic for defense‑related pilots may face funding gaps, potentially slowing the pace of AI innovation in the defense sector.

These moves echo the broader government push to “domesticate” AI capabilities, ensuring that critical models remain under U.S. control and are free from foreign influence or corporate veto power.

Secretary Hegseth’s statement

“Our position has never wavered: the Department of War must have full, unrestricted access to Anthropic’s models for every lawful purpose in defense of the Republic. Instead, Anthropic has chosen duplicity, cloaking corporate virtue‑signaling in the rhetoric of ‘effective altruism.’ This is unacceptable, and we will protect our warfighters from being held hostage by ideological whims.” – original news article

Broader impact: What this means for AI regulation in the United States

The Pentagon’s action is a watershed moment for AI governance, signaling that the U.S. government is prepared to use supply‑chain risk tools—traditionally reserved for foreign‑origin hardware—to police domestic AI firms.

Key takeaways for the industry:

- Regulatory precedent: Future administrations may adopt similar designations for other AI providers that resist government access, creating a de facto “AI compliance checklist.”

- Shift toward open‑source models: Enterprises may accelerate adoption of open‑source alternatives (e.g., LLaMA, Falcon) that can be audited and modified without corporate gatekeepers.

- Increased demand for AI‑secure platforms: Platforms such as UBOS (see the UBOS platform overview) are positioned to help organizations build compliant AI pipelines, offering built‑in governance, audit trails, and secure model hosting.

- Strategic partnerships: Defense contractors are likely to partner with firms that provide “government‑ready” AI stacks, such as those offering AI marketing agents that can be repurposed for secure internal communications.

Future outlook: How the AI ecosystem may evolve

While the immediate fallout is disruptive, the longer‑term trajectory points toward a more compartmentalized AI landscape:

- Segmentation of AI services: “Defense‑grade” models will be isolated from commercial offerings, with strict licensing and audit requirements.

- Rise of sovereign AI clouds: Government‑backed cloud providers may host vetted models, reducing reliance on private AI vendors.

- Enhanced compliance tooling: Platforms that offer compliance modules, such as those listed in the UBOS pricing plans, will likely see heightened demand.

- Policy feedback loops: Industry groups will lobby for clearer guidelines, potentially leading to a federal AI “supply‑chain security act.”

For tech‑savvy professionals and AI enthusiasts, staying ahead of these regulatory currents is essential. Monitoring the Pentagon’s next steps—and the broader policy environment—will be critical for anyone building or deploying AI in high‑stakes sectors.

Conclusion

The Pentagon’s designation of Anthropic as a supply‑chain risk marks a decisive escalation in the U.S. government’s effort to control AI technology for national security. By leveraging supply‑chain risk tools, the DoD has sent a clear message: AI models must be fully accessible, auditable, and free from corporate constraints that could impede defense objectives. The ripple effects will reshape vendor relationships, accelerate the adoption of open‑source alternatives, and drive demand for secure AI platforms, areas where solutions like the Enterprise AI platform by UBOS can provide a competitive edge.

As the AI policy landscape continues to evolve, stakeholders should prioritize compliance, diversify model providers, and invest in platforms that embed security and governance at the core. The next chapter of AI in defense will be defined not just by technological breakthroughs, but by the ability to navigate an increasingly regulated supply‑chain environment.

Stay informed on AI policy shifts and learn how to future‑proof your AI initiatives with UBOS’s suite of tools. Explore our UBOS templates for quick start and accelerate compliant AI development today.