- Updated: February 27, 2026


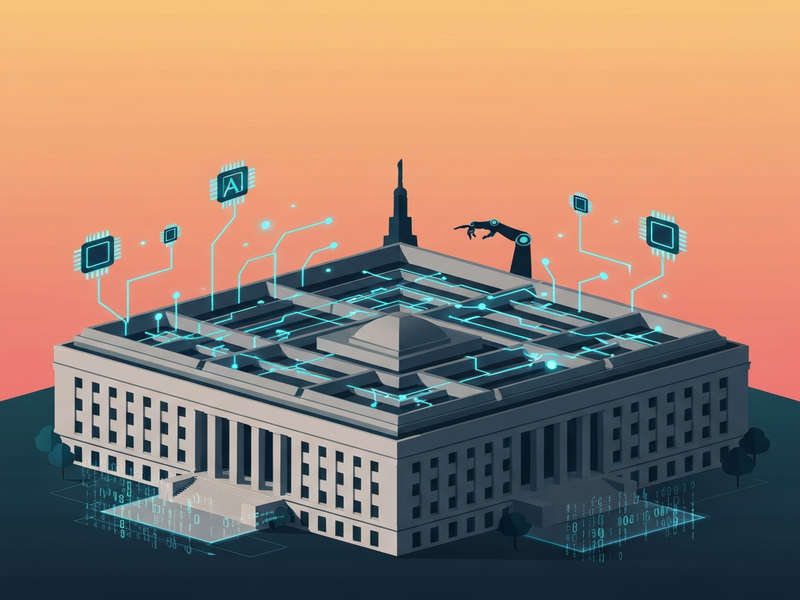

Anthropic vs Pentagon: AI Ethics, Autonomous Weapons and Policy Clash

Anthropic is standing firm against the Pentagon’s ultimatum to loosen its AI guardrails, refusing to allow unrestricted “any lawful use” of its models for mass surveillance or fully autonomous lethal weapons.

The Pentagon‑Anthropic Standoff: A Quick Overview

The U.S. Department of Defense has issued a stark ultimatum to AI pioneer Anthropic: grant the military unrestricted access to its large language models, including for mass surveillance of U.S. citizens and fully autonomous lethal weapons, or be labeled a “supply‑chain risk” and lose billions in defense contracts. Anthropic’s CEO Dario Amodei has publicly rejected the demand, citing the company’s ethical red lines and stating that “threats do not change our position.” This clash has ignited a broader debate about AI governance, the role of private tech firms in national security, and the future of autonomous weapons.

Why the Pentagon Is Pressing AI Companies

Since 2023, the Pentagon’s enterprise AI initiatives have accelerated, aiming to embed generative AI across command, control, and intelligence systems. Under Secretary of Defense for Research & Engineering Emil Michael, a former senior Uber executive, has championed a policy that any AI model used by the military must be available for “any lawful use.” The rationale is two‑fold:

- Operational advantage: Real‑time language understanding can streamline target identification, logistics, and decision‑making on the battlefield.

- Strategic parity: Rival nations are rapidly fielding AI‑enhanced weapons; the U.S. seeks to avoid a capability gap.

To operationalize this vision, the DoD has been renegotiating existing contracts with leading AI labs, demanding that companies remove “guardrails” that currently prevent uses such as mass data collection on civilians or fully autonomous kill‑chains.

Anthropic’s Ethical Red Lines

Anthropic, valued at roughly $380 billion, has built its reputation on “constitutional AI,” a set of internal principles that prohibit the deployment of its models for surveillance of U.S. persons and for weapons that can select and engage targets without human oversight. In recent negotiations, the Pentagon asked Anthropic to replace these safeguards with a blanket “any lawful use” clause. Anthropic’s response rests on the following core arguments:

- Legal liability: “Any lawful use” could be interpreted to include activities that conflict with existing privacy statutes, exposing the company to lawsuits.

- Moral responsibility: Anthropic’s leadership believes that AI developers must retain “human‑in‑the‑loop” control over lethal decisions, a stance echoed by many AI ethicists.

CEO Dario Amodei told reporters, “We cannot in good conscience accede to a request that would enable unsupervised killer robots or mass surveillance of our own citizens.” The company has also warned that being labeled a “supply‑chain risk” could jeopardize its ability to secure future contracts, not only with the DoD but also with allied governments that reference U.S. procurement standards.

How Competitors Are Responding

While Anthropic holds firm, rivals OpenAI and xAI have reportedly agreed to the Pentagon’s terms, at least in draft form. OpenAI’s ChatGPT integration roadmap now includes a “military‑use” toggle that can be disabled by the client, effectively handing the DoD the ability to lift restrictions on demand. xAI’s CEO has publicly stated that “national security takes precedence over optional guardrails,” a sentiment that has drawn criticism from employee groups.

Industry insiders note a growing split: companies that prioritize rapid revenue growth from defense contracts are more willing to compromise on ethical safeguards, while those that market themselves as “responsible AI” are facing pressure to maintain their stance. A senior engineer at Amazon Web Services, speaking on condition of anonymity, said, “When I joined the tech industry, I thought tech was about making people’s lives easier. Now it feels like we’re building tools for surveillance and deportation.”

Ethical, Legal, and Strategic Implications

The Anthropic‑Pentagon dispute is more than a contract negotiation; it is a flashpoint for the emerging field of AI ethics in defense. Several key implications deserve attention:

1. Precedent for “Any Lawful Use” Clauses

If the Pentagon succeeds, future contracts across the tech sector may adopt similar language, effectively eroding corporate‑level ethical safeguards. This could lead to a “race to the bottom” where companies feel compelled to abandon internal principles to stay competitive.

2. Autonomous Weapon Proliferation

Unrestricted AI models could be integrated into weapon systems that autonomously select and engage targets. International law currently lacks clear definitions for “lethal autonomous weapons,” and the U.S. has not signed UN statements calling for a ban. The Pentagon’s push may accelerate global proliferation, prompting other nations to follow suit.

3. Mass Surveillance Risks

Allowing AI models to process real‑time video, audio, and metadata from civilian sources could create a de‑facto domestic surveillance network. Civil liberties groups warn that such capabilities could be used to monitor protests, political dissent, or minority communities without judicial oversight.

4. Corporate Reputation and Talent Retention

Companies that appear to compromise on ethics risk losing top talent. Recent surveys show that 68% of AI researchers consider ethical alignment a primary factor when choosing employers. Anthropic’s stance may attract talent seeking a “values‑first” workplace, while OpenAI and xAI could face internal pushback.

For organizations looking to navigate these complexities, tools like the AI ethics framework offered by UBOS can help assess risk, implement guardrails, and document compliance.

Original Reporting and Key Quote

The dispute was first detailed by The Verge on February 27, 2026, in a report by Stevie Bonifield that quoted Anthropic’s response: “Threats do not change our position: we cannot in good conscience accede to their request.” This quote encapsulates Anthropic’s unwavering commitment to its ethical boundaries.

Looking Ahead: What Comes Next?

As the deadline looms, the Pentagon may either label Anthropic a “supply‑chain risk” or seek legislative authority to compel compliance. Meanwhile, Congress is expected to hold hearings on AI in defense, potentially resulting in new regulations that could codify “human‑in‑the‑loop” requirements for lethal systems.

For the broader AI community, the outcome will signal whether ethical self‑regulation can survive pressure from powerful government customers. If Anthropic’s stance holds, it could inspire a coalition of firms to demand clearer legal protections for AI guardrails. Conversely, a capitulation could normalize “any lawful use” clauses, reshaping the AI‑defense landscape for years to come.

Stakeholders—policy makers, technologists, and the public—should monitor upcoming developments, participate in public comment periods, and leverage platforms like the UBOS partner program to build responsible AI solutions that balance national security with fundamental rights.
