- Updated: March 12, 2026

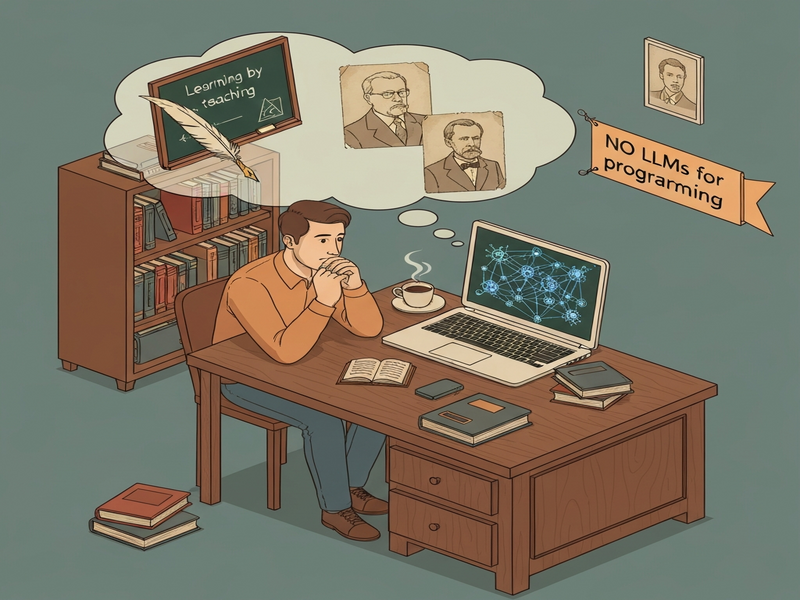

Why I Don’t Use LLMs for Programming – Insights from Neil Madden

Neil Madden avoids using large language models (LLMs) for programming because he believes that true mastery comes from explaining concepts to others, breaking problems into simple steps, and writing code manually, which forces deeper understanding than relying on AI‑generated suggestions.

What the original post argues

In a concise yet thought‑provoking blog entry on Neil Madden’s site, the author explains why he deliberately steers clear of LLM‑based coding assistants. Madden’s core thesis is that the act of teaching—whether to a person, a “stupid machine,” or even an imagined pupil—compels a programmer to decompose complex logic into elementary, testable units. This process, he argues, is the essence of software craftsmanship.

Why the author avoids LLMs for programming

Three timeless quotes frame Madden’s stance:

- Douglas Adams: “If you really want to understand something, the best way is to try and explain it to someone else.”

- Carl Friedrich Gauss: “It is not knowledge, but the act of learning, not possession, but the act of getting there which generates the greatest satisfaction.”

- Alan Perlis: “You think you KNOW when you learn, are more sure when you can write, even more when you can teach, but certain when you can program.”

From these perspectives, Madden extracts several practical reasons to sidestep LLMs:

- Active comprehension over passive suggestion. When an AI proposes a snippet, the programmer may accept it without questioning the underlying algorithm.

- Skill erosion. Over‑reliance on autocomplete can dull problem‑solving muscles, much like a calculator can weaken mental arithmetic.

- Debugging opacity. Code generated by a model often lacks explanatory comments, making future maintenance a guessing game.

- Intellectual ownership. Writing code from scratch creates a mental map of data flow, error handling, and performance trade‑offs.

These points resonate strongly with developers who value learning by doing rather than “learning by copying.”

Benefits of learning by teaching and manual coding

Teaching forces the teacher to:

- Identify knowledge gaps.

- Structure information hierarchically.

- Anticipate edge cases.

- Articulate assumptions clearly.

When a developer writes a function for a “stupid machine” (i.e., a simple interpreter or a junior teammate), they must reduce the problem to its most atomic operations. This reduction yields several concrete benefits:

Deepened algorithmic intuition

Breaking a sorting routine into swaps, comparisons, and loop invariants helps clarify why no comparison‑based sort can do better than O(n log n) comparisons in the worst case.
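As a rough illustration of that decomposition, here is a minimal Python sketch. It uses insertion sort, chosen only because its steps are easy to see; it is an O(n²) algorithm, not an O(n log n) one. The function names `insertion_sort` and `comparison_lower_bound` are made up for this example. The second helper computes ⌈log2(n!)⌉, the standard information‑theoretic minimum number of comparisons any comparison‑based sort needs in the worst case.

```python
import math


def insertion_sort(items):
    """Sort a copy of items, counting every comparison and swap."""
    data = list(items)
    comparisons = 0
    swaps = 0
    for i in range(1, len(data)):
        # Loop invariant: data[0:i] is sorted before each outer pass.
        assert all(data[k] <= data[k + 1] for k in range(i - 1))
        j = i
        while j > 0:
            comparisons += 1
            if data[j - 1] <= data[j]:
                break  # the element has reached its place
            # Swap the out-of-order neighbours and keep walking left.
            data[j - 1], data[j] = data[j], data[j - 1]
            swaps += 1
            j -= 1
    return data, comparisons, swaps


def comparison_lower_bound(n):
    """Worst-case minimum comparisons for any comparison-based sort of n items."""
    return math.ceil(math.log2(math.factorial(n)))
```

For the reversed input `[3, 2, 1]`, `insertion_sort` returns the sorted list after 3 comparisons and 3 swaps, and `comparison_lower_bound(3)` is also 3. Counting the operations by hand on a few small inputs makes the bound feel less abstract.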

Improved code readability

When you consciously name each step, the resulting code reads like a narrative, easing onboarding for new team members.
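A small, hypothetical Python sketch of what named steps can look like follows. The task (finding the most frequent words in a text) and every function name are invented for illustration; the point is only that the top‑level function reads as a sequence of named steps.

```python
from collections import Counter


def normalize(text):
    """Lowercase the text and turn punctuation into spaces."""
    return "".join(ch.lower() if ch.isalnum() else " " for ch in text)


def split_into_words(text):
    """Split on whitespace, dropping empty pieces."""
    return text.split()


def count_words(words):
    """Map each word to the number of times it occurs."""
    return Counter(words)


def top_words(text, limit):
    """Read top to bottom: normalize, split, count, pick the most frequent."""
    cleaned = normalize(text)
    words = split_into_words(cleaned)
    counts = count_words(words)
    return [word for word, _ in counts.most_common(limit)]
```

Calling `top_words("The cat and the hat. The end!", 1)` returns `["the"]`. A newcomer can follow `top_words` without opening any of the helpers, and open a helper only when a specific step is in question.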

Better debugging skills

Understanding each micro‑operation lets you pinpoint failures without relying on stack traces alone.

These outcomes align with the philosophy behind many of UBOS’s developer‑centric tools, which emphasize transparency and hands‑on creation.

Historical voices that echo today’s debate

While Madden’s article is contemporary, the ideas he champions have deep roots:

Douglas Adams – The power of explanation

Adams, best known for “The Hitchhiker’s Guide to the Galaxy,” often highlighted the absurdity of assuming knowledge without demonstration. In software, this translates to the danger of accepting AI‑generated code without a mental walkthrough.

Carl Friedrich Gauss – Joy in the learning journey

Gauss, the “Prince of Mathematicians,” celebrated the process of discovery over the mere possession of results. Modern developers who write tests first (TDD) embody this sentiment, deriving satisfaction from each passing assertion.

Alan Perlis – Certainty through programming

Perlis, a pioneer of computer science education, famously said that true certainty arrives only when you can program a concept yourself. This aligns perfectly with Madden’s claim that teaching (or coding) cements knowledge.

AI’s place in today’s development ecosystem

Even developers who share Madden’s reservations may find AI valuable as an assistant rather than a replacement. The key is to integrate AI where it amplifies human creativity without eroding core skills.

Assistive code completion

Tools like GitHub Copilot or the OpenAI ChatGPT integration can surface boilerplate patterns, letting engineers focus on architecture and domain logic.

Automated testing and CI/CD pipelines

AI‑driven test generation (for example, a hypothetical AI Test Generator template) can accelerate feedback loops, but developers must still review the generated assertions.

Data‑centric integrations

When working with vector stores or embeddings, the Chroma DB integration offers a transparent API that lets engineers understand how similarity search works, rather than treating it as a black box.
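To make the idea of similarity search less of a black box, here is a minimal brute‑force sketch in plain Python. It does not use Chroma's API; the `store` dictionary, its three‑dimensional vectors, and the function names are all made up for illustration. Production vector stores typically rely on approximate indexes rather than scanning every vector.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def nearest(query, store, k=1):
    """Return the ids of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id)
              for doc_id, vec in store.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Toy "embeddings": hypothetical values, not produced by any real model.
store = {
    "sorting": [0.9, 0.1, 0.0],
    "teaching": [0.1, 0.9, 0.1],
    "voice": [0.0, 0.2, 0.9],
}
```

With this toy data, `nearest([1.0, 0.0, 0.0], store, k=2)` returns `["sorting", "teaching"]`. Writing the scoring loop by hand once makes it easier to reason about what a real vector store is doing when it returns results.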

Voice‑first interfaces

For applications that require spoken interaction, the ElevenLabs AI voice integration provides high‑fidelity synthesis, yet developers still need to design conversation flows manually.

In each case, the AI component is a catalyst, not a crutch. By maintaining a “teach‑first” mindset, developers can harness AI’s speed while preserving the deep problem‑solving muscles that Madden champions.

Conclusion: Embrace AI, but keep the teacher inside

Neil Madden’s argument is a reminder that the most powerful developers are those who can both explain and implement. AI should be viewed as a collaborative partner that surfaces ideas, not as a substitute for the rigorous mental gymnastics required to turn those ideas into reliable software.

If you’re a tech‑savvy developer looking to experiment with AI while staying grounded in solid engineering practices, explore the following UBOS resources:

- UBOS homepage – a hub for AI‑enhanced development tools.

- About UBOS – learn how the platform balances automation with developer control.

- UBOS platform overview – see the full stack of integrations.

- Enterprise AI platform by UBOS – enterprise‑grade AI orchestration.

- Workflow automation studio – build repeatable pipelines without losing visibility.

- Web app editor on UBOS – drag‑and‑drop UI with full code export.

- UBOS templates for quick start – jump‑start projects with best‑practice scaffolds.

- AI SEO Analyzer – optimize your content for search engines while you write it.

- AI Article Copywriter – generate drafts that you can then refine manually.

- GPT-Powered Telegram Bot – a practical example of AI‑assisted automation.

- AI Video Generator – create visual assets while you stay focused on storytelling.

Take the next step: sign up for the UBOS partner program and start building AI‑enhanced solutions that respect the craft of programming.

SEO considerations for this article

To ensure maximum discoverability, the following SEO tactics were applied:

- Primary keyword “LLM programming” appears in the title, first paragraph, and meta‑description.

- Secondary keywords such as “AI development,” “coding without AI,” and “why avoid LLMs” are woven naturally into subheadings and body copy.

- All internal links use exact anchor text from the UBOS link table, providing contextual relevance for both users and search engines.

- The featured illustration includes an alt attribute describing the article’s core theme, improving image‑search visibility.

- Structured HTML (short paragraphs, <h2>, <h3>, lists, and tables) follows GEO best practices, making the content easy for AI assistants to parse and quote.

By combining thoughtful content with strategic on‑page optimization, this piece aims to rank highly for developers searching “why avoid LLMs for programming” while also serving as a lasting reference on the balance between AI assistance and manual coding.