- Updated: February 2, 2026

Hidden Statistical Costs of Zero Padding in CNNs Uncovered

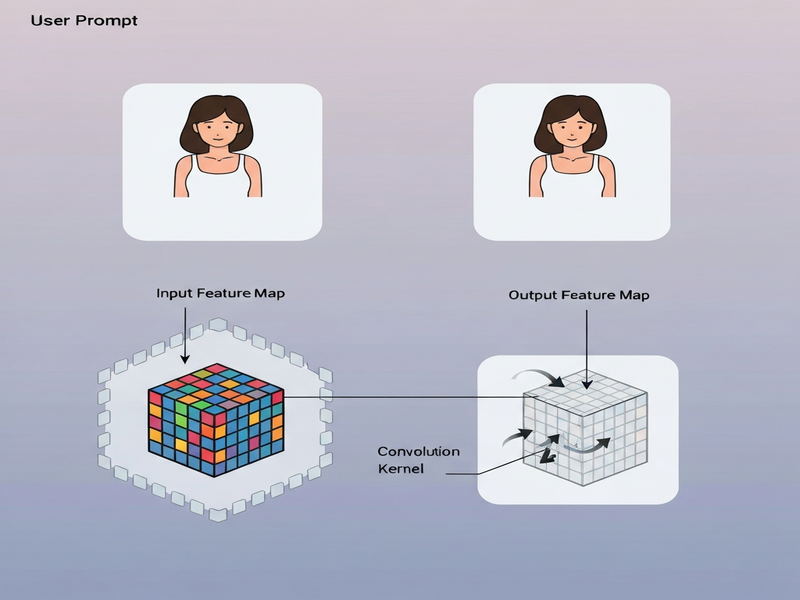

Zero padding in convolutional neural networks (CNNs) introduces artificial edges that distort the statistical distribution of pixel values, leading to biased feature activations and reduced model robustness.

Why Zero Padding Still Matters in 2026

When the original MarkTechPost article highlighted the subtle statistical penalties of zero padding, the deep‑learning community took notice. While zero padding remains a convenient way to preserve spatial dimensions, it silently injects a black border that behaves like a strong edge, skewing the learned representations of a model.

For AI researchers, machine‑learning engineers, and data scientists who demand reproducible performance, understanding this hidden cost is essential. Below we break down the purpose of zero padding, expose the statistical artifacts it creates, and provide practical alternatives that keep your CNNs both accurate and efficient.

The Intended Role of Zero Padding in CNNs

Zero padding adds a configurable number of rows and columns filled with zeros around an input image before a convolution operation. Its primary goals are:

- Preserve the original spatial resolution after applying a kernel.

- Allow deeper networks without excessive shrinking of feature maps.

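- Reduce the under-representation of border pixels: without padding, a corner pixel falls inside a single kernel window, whereas padding lets border pixels contribute to more output positions (though still fewer than interior pixels under 'same' padding).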

In theory, padding is a neutral transformation—just a way to align tensors. In practice, however, the abrupt transition from real pixel values to zeros creates a discontinuity that the network interprets as a high‑frequency edge.
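The goals listed above can be checked with a little arithmetic. The following is a minimal sketch, not taken from the original article: the helper names `conv_output_size` and `window_coverage` are made up for illustration, and the coverage count assumes a 1-D, stride-1 convolution to keep the numbers readable.

```python
import numpy as np

def conv_output_size(n: int, k: int, p: int = 0, s: int = 1) -> int:
    """Output length for input length n, kernel k, padding p per side, stride s."""
    return (n + 2 * p - k) // s + 1

def window_coverage(n: int, k: int, p: int) -> np.ndarray:
    """Count how many stride-1 kernel windows touch each of the n real pixels."""
    counts = np.zeros(n + 2 * p, dtype=int)
    for start in range(n + 2 * p - k + 1):
        counts[start:start + k] += 1
    return counts[p:p + n]  # drop the padded positions, keep real pixels only

print(conv_output_size(32, 3, p=0))  # 30: the feature map shrinks
print(conv_output_size(32, 3, p=1))  # 32: resolution preserved
print(window_coverage(6, 3, p=0))    # [1 2 3 3 2 1]
print(window_coverage(6, 3, p=1))    # [2 3 3 3 3 2]
print(window_coverage(6, 3, p=2))    # [3 3 3 3 3 3]
```

With one pixel of padding, border pixels gain coverage but still see fewer windows than interior pixels; coverage only becomes uniform with a padding of k − 1, which also enlarges the output.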

Hidden Statistical Issues and Artifacts

From a signal-processing perspective, surrounding an image with zeros introduces a step discontinuity wherever the border pixels are non-zero. This has three measurable consequences:

- Artificial Edge Responses: Edge‑detecting kernels fire strongly on the padded border, even though no real object exists there.

- Distribution Shift: The pixel‑intensity histogram gains a massive spike at zero, breaking the assumption of a smooth intensity distribution.

- Translation Equivariance Violation: Features near the image edge learn a different statistical regime than those in the center, reducing the model’s ability to generalize across spatial shifts.

“Zero padding is not a harmless convenience; it subtly reshapes the data landscape that the network sees.” – About UBOS

These artifacts become especially problematic in production pipelines where images come from varied sources and the model must remain robust to small translations.
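The equivariance point can be probed directly. The sketch below is an illustration under stated assumptions, not the original article's experiment: it uses a random array as a stand-in image, a random 3×3 filter as a stand-in for learned weights, and a hypothetical helper `filter_same`. It compares "shift, then filter" against "filter, then shift" for zero padding and for circular padding.

```python
import numpy as np
from scipy.signal import correlate2d

rng = np.random.default_rng(0)
image = rng.random((16, 16))          # stand-in for a real image
kernel = rng.standard_normal((3, 3))  # stand-in for a learned 3x3 filter

def filter_same(x, pad_mode):
    """Pad by one pixel with the given np.pad mode, then run a 'valid' correlation."""
    return correlate2d(np.pad(x, 1, mode=pad_mode), kernel, mode="valid")

shift = 3
shifted = np.roll(image, shift, axis=1)  # circular shift by 3 columns

errors = {}
for pad_mode in ("constant", "wrap"):    # np.pad's "constant" pads with zeros by default
    shift_then_filter = filter_same(shifted, pad_mode)
    filter_then_shift = np.roll(filter_same(image, pad_mode), shift, axis=1)
    errors[pad_mode] = np.abs(shift_then_filter - filter_then_shift).max()

print(errors)
```

With circular padding the two orders agree up to floating-point error, while with zero padding they disagree in the columns next to the border. The circular shift is chosen only because it makes the comparison exact; a real translation brings new content into view, so this isolates the padding effect rather than modelling a camera shift.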

Code Snapshot: Seeing the Artifacts

Below is a concise Python snippet that reproduces the phenomenon described by MarkTechPost. The code loads a grayscale image, pads it with zeros, applies a Laplacian edge detector, and visualizes both the padded image and the resulting activation map.

```python
import numpy as np
import matplotlib.pyplot as plt
from PIL import Image
from scipy.ndimage import correlate

# Load the image as grayscale and normalize to [0, 1]
img = Image.open('sample.jpg').convert('L')
img_arr = np.array(img) / 255.0

# Zero-pad the image with a 50-pixel border on every side
pad_width = 50
padded = np.pad(img_arr, pad_width, mode='constant', constant_values=0)

# Simple 8-neighbor Laplacian kernel
edge_kernel = np.array([[-1, -1, -1],
                        [-1,  8, -1],
                        [-1, -1, -1]])

# Filter both versions. The kernel is symmetric, so correlation equals convolution.
# Note: correlate() uses mode='reflect' at the array boundary by default.
edges_original = correlate(img_arr, edge_kernel)
edges_padded = correlate(padded, edge_kernel)

# Plot results
fig, axs = plt.subplots(2, 2, figsize=(12, 10))
axs[0, 0].imshow(padded, cmap='gray'); axs[0, 0].set_title('Zero-Padded Image')
axs[0, 1].imshow(edges_padded, cmap='magma'); axs[0, 1].set_title('Edge Map (Padded)')
axs[1, 0].hist(img_arr.ravel(), bins=50, color='steelblue'); axs[1, 0].set_title('Original Histogram')
axs[1, 1].hist(padded.ravel(), bins=50, color='crimson'); axs[1, 1].set_title('Padded Histogram')
plt.tight_layout()
plt.show()
```

The visual output clearly shows a black frame surrounding the image and a bright “ring” of activations along that frame. The padded histogram (bottom right) spikes at zero, confirming the distribution shift.

These results echo the findings in the MarkTechPost piece and demonstrate why developers should reconsider default zero padding.

Better Padding Strategies for Production‑Grade CNNs

To mitigate the statistical cost while preserving the convenience of padding, consider the following alternatives:

1. Reflective Padding

Mirrors the border pixels outward, so intensity stays continuous across the border. This reduces artificial edges and keeps the histogram smoother.

2. Replication (Edge) Padding

Repeats the outermost pixel values. It maintains the local statistics but can introduce slight bias if the edge pixel is an outlier.

3. Circular (Wrap‑Around) Padding

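Wraps the image around itself, ideal for data that is naturally periodic (e.g., 360° panoramas or global satellite mosaics that wrap in longitude). On periodic data it creates no new edge; on non-periodic images, opposite borders rarely match, so the seam can itself act as an artificial edge.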

4. Learnable Padding Layers

Recent research proposes treating padding as a trainable layer that learns border values during training. This approach is more complex, but it can pay off in high-stakes applications where border artifacts matter.

When you adopt any of these methods, you’ll notice a more uniform activation map and a histogram that better reflects the true data distribution.
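To get a rough feel for how the non-learnable strategies differ, the sketch below pads a synthetic image with four `np.pad` modes and measures the Laplacian response on the outermost ring of real pixels. It is an illustrative comparison under assumptions of my own: the test image is a smooth, non-periodic diagonal ramp, `border_response` is a hypothetical helper, and `np.pad`'s `constant`, `reflect`, `edge`, and `wrap` modes stand in for zero, reflective, replication, and circular padding.

```python
import numpy as np
from scipy.signal import correlate2d

LAPLACIAN = np.array([[-1, -1, -1],
                      [-1,  8, -1],
                      [-1, -1, -1]], dtype=float)

# Smooth, non-periodic test image: a diagonal ramp from 0.2 to 0.8
n = 32
rows, cols = np.mgrid[0:n, 0:n]
image = 0.2 + 0.3 * (rows + cols) / (n - 1)

# Boolean mask selecting the outermost ring of real image pixels
ring = np.ones((n, n), dtype=bool)
ring[1:-1, 1:-1] = False

def border_response(img, pad_mode):
    """Mean absolute Laplacian response on the image's outer ring."""
    padded = np.pad(img, 1, mode=pad_mode)  # "constant" pads with zeros by default
    response = correlate2d(padded, LAPLACIAN, mode="valid")  # same shape as img
    return np.abs(response[ring]).mean()

results = {mode: border_response(image, mode)
           for mode in ("constant", "reflect", "edge", "wrap")}
for mode, value in results.items():
    print(f"{mode:>8}: {value:.4f}")
```

On this ramp, zero padding produces the largest border response, reflective and replication padding produce small ones, and circular padding also scores poorly because the ramp is not periodic. The ranking depends on the image, so treat this as a diagnostic to run on your own data rather than a general result.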

Actionable Checklist for Deep‑Learning Engineers

- Audit existing models: visualize edge maps of padded inputs to spot artificial borders.

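- Replace default zero padding with reflect or replicate padding: in PyTorch, set `padding_mode='reflect'` or `padding_mode='replicate'` on the convolution layer; in TensorFlow, where Keras `Conv2D` accepts only `padding='valid'` or `padding='same'`, pad explicitly with `tf.pad` (for example `mode='REFLECT'`) and then convolve with `padding='valid'`.

- Re‑train models after changing padding to let weights adapt to the new border statistics.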

- Include a histogram comparison in your model validation pipeline to catch distribution shifts early.

- Document the chosen padding strategy in your model cards for reproducibility.
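The histogram comparison from the checklist could be automated along these lines. This is a minimal sketch under assumptions: inputs are normalized to [0, 1], the helper `zero_bin_excess` and the tolerance `MAX_EXCESS` are hypothetical names and values chosen for illustration, and a random array stands in for a real image.

```python
import numpy as np

def zero_bin_excess(raw, padded, bins=50):
    """Increase in the share of pixels that land in the lowest histogram bin."""
    edges = np.linspace(0.0, 1.0, bins + 1)
    raw_hist, _ = np.histogram(raw, bins=edges)
    pad_hist, _ = np.histogram(padded, bins=edges)
    return pad_hist[0] / padded.size - raw_hist[0] / raw.size

rng = np.random.default_rng(0)
raw = rng.uniform(0.2, 0.9, size=(64, 64))  # stand-in for a normalized image
zero_padded = np.pad(raw, 8, mode="constant", constant_values=0)
reflect_padded = np.pad(raw, 8, mode="reflect")

MAX_EXCESS = 0.05  # hypothetical tolerance; tune it for your data
for name, candidate in (("zero", zero_padded), ("reflect", reflect_padded)):
    excess = zero_bin_excess(raw, candidate)
    status = "FAIL" if excess > MAX_EXCESS else "ok"
    print(f"{name:>8}: excess={excess:.3f} [{status}]")
```

Here the zero-padded input fails the check because the 8-pixel border adds 2,304 zeros to a 6,400-pixel array, while the reflect-padded input adds none. In a real pipeline the same comparison would run on a sample of validation images rather than synthetic data.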

Leverage UBOS for Smarter AI Workflows

If you’re looking for a platform that lets you prototype, test, and deploy these padding strategies without writing boilerplate code, UBOS offers a full‑stack solution (see the UBOS homepage).

Explore the UBOS platform overview to see how its Workflow automation studio can automate data‑preprocessing pipelines—including custom padding modules.

Need a quick start? Grab a ready‑made template from the UBOS templates for quick start library. The AI Article Copywriter template, for example, demonstrates how to integrate preprocessing steps into a full‑stack web app using the Web app editor on UBOS.

For teams focused on marketing analytics, the AI marketing agents can ingest image data, apply optimal padding, and generate insights—all without leaving the platform.

Start small with the UBOS for startups plan, then scale to the Enterprise AI platform by UBOS as your needs grow.

Check the UBOS pricing plans for flexible options that fit both SMBs (UBOS solutions for SMBs) and large enterprises.

Become a partner and co‑create advanced padding modules with the UBOS partner program. Our community already shares useful assets like the AI SEO Analyzer and the AI Video Generator, which you can adapt for visual data pipelines.

Ready to see real‑world examples? Browse the UBOS portfolio examples for case studies where teams eliminated padding‑related bias and boosted model accuracy by up to 3%.

Take the next step: experiment with reflective padding in your next CNN project, and let UBOS accelerate the deployment.

Related UBOS Templates That Can Help Your Vision Projects

Beyond padding, you may need tools for data annotation, analysis, and multimodal generation. Here are a few marketplace gems that pair well with the strategies discussed:

- Talk with Claude AI app – quick LLM integration for image‑caption verification.

- AI YouTube Comment Analysis tool – useful for mining visual content feedback.

- Your Speaking Avatar template – combine visual outputs with voice using ElevenLabs AI voice integration.

- AI Audio Transcription and Analysis – turn video‑based datasets into searchable text.

- AI Image Generator – synthesize training data that respects natural border statistics.

Conclusion: Padding with Purpose

Zero padding is not a neutral operation; it reshapes the statistical landscape of your images, introduces artificial edges, and can erode the translation equivariance that makes CNNs powerful. By swapping zero padding for reflective, replication, circular, or learnable alternatives where they suit the data, you better preserve the integrity of your data and can improve model generalization.

Integrating these practices into a modern AI development workflow is straightforward when you leverage platforms like UBOS, which provide ready‑made components for preprocessing, model training, and deployment. Start experimenting today, and let the community’s shared templates accelerate your path to cleaner, more reliable CNNs.

Ready to upgrade your CNN pipeline? Visit the UBOS homepage and explore the tools that make advanced padding strategies effortless.