- Updated: March 18, 2026

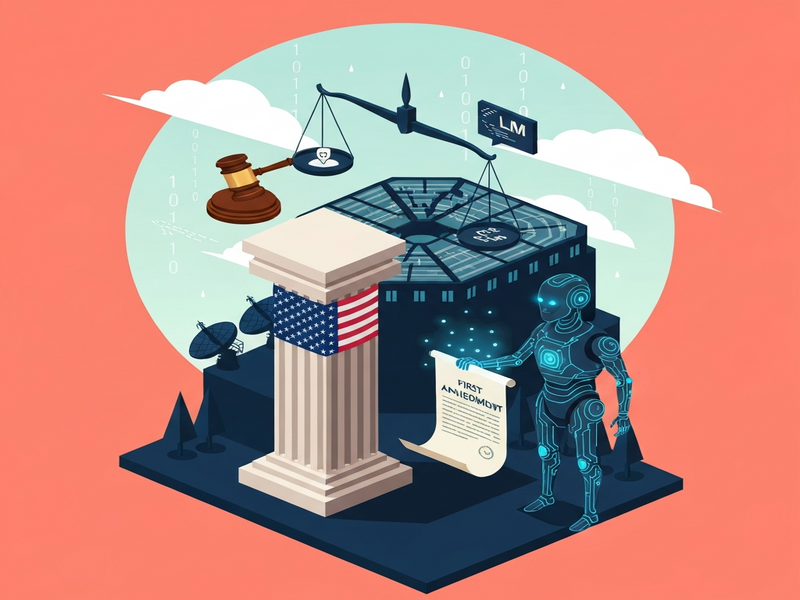

DoD Labels Anthropic as Supply‑Chain Risk in Legal Clash – Implications for AI in Defense

DoD Defends Supply‑Chain Risk Label in Anthropic Lawsuit

The U.S. Department of Defense, backed by the Department of Justice, is contesting Anthropic’s lawsuit in court, arguing that the “supply‑chain risk” designation is a lawful national‑security measure and does not violate the company’s First Amendment rights.

Background on Anthropic’s Legal Challenge

Anthropic, the creator of the Claude family of large language models (LLMs), has filed lawsuits in two federal courts alleging that the Pentagon’s decision to label the firm a “supply‑chain risk” unlawfully bars it from defense contracts. Anthropic argues that the label, issued under the Trump administration, exceeds statutory authority and amounts to retaliation for the company’s refusal to let the Department of Defense (DoD) use its models without strict ethical safeguards.

The core of Anthropic’s claim is that the designation threatens billions of dollars in projected revenue and violates the company’s First Amendment rights by imposing a de‑facto contract term on the government. The lawsuit seeks an injunction that would allow Anthropic to continue supplying its AI tools while the case proceeds.

For a broader view of how AI platforms can be leveraged across industries, see the UBOS platform overview.

DOJ and DoD Response: Legal and Security Rationale

On Tuesday, Department of Justice (DOJ) attorneys representing the DoD and several other federal agencies filed a brief in the San Francisco federal court. The brief contends that Anthropic’s claimed “irreparable injury” is “legally insufficient” and that the government’s action rests on legitimate national‑security concerns rather than punitive intent.

- Anthropic’s own “red‑line” policy—refusing to let its models be used for autonomous weapons or mass surveillance—prompted Defense Secretary Pete Hegseth to deem the company a potential insider threat.

- The brief warned that Anthropic could “sabotage, maliciously introduce unwanted function, or otherwise subvert the design, integrity, or operation of a national‑security system.”

- DoD officials emphasized that AI systems are “acutely vulnerable to manipulation,” and that allowing unrestricted access could jeopardize classified warfighting infrastructure.

The government also highlighted that the Pentagon is already transitioning to alternative AI providers—Google, OpenAI, and xAI—reducing reliance on Claude for critical missions such as Palantir‑driven data analysis.

Learn how the Enterprise AI platform by UBOS helps organizations mitigate similar supply‑chain risks through modular, auditable AI components.

Implications for AI in Defense Systems

The DoD’s stance signals a broader shift toward “zero‑trust” AI procurement. Key implications include:

- Accelerated diversification: The Pentagon is likely to fast‑track contracts with multiple vendors to avoid single points of failure.

- Stricter ethical clauses: Future contracts may embed enforceable clauses that prevent AI from being used in lethal autonomous weapons without explicit congressional approval.

- Increased auditability: Agencies are likely to demand provenance logs, model explainability, and real‑time tamper detection, features that many commercial LLMs currently lack.

- Supply‑chain transparency: Companies may need to disclose third‑party data sources and training pipelines to satisfy DoD risk assessments.

For startups navigating these new requirements, the UBOS for startups guide offers a practical checklist for building compliant AI services.

Quotes and Expert Commentary

“National‑security considerations must outweigh commercial interests when the technology can be weaponized,” said Dr. Maya Patel, senior fellow at the Center for AI & Defense. “The DoD’s approach, while aggressive, is a realistic response to the vulnerabilities inherent in today’s generative AI.”

“Anthropic’s red‑line policy is commendable, but it creates a governance paradox: the very safeguards they demand become the reason the government views them as a risk,” noted James Liu, AI policy analyst at the Brookings Institution.

Companies looking to embed AI responsibly can explore the AI marketing agents case study, which demonstrates how ethical guardrails are operationalized without sacrificing performance.

Why This Matters for AI Ethics, Defense, and the Future of LLMs

The clash between Anthropic and the DoD sits at the intersection of several themes that define the current AI policy landscape: Anthropic and its Claude models, AI ethics, the use of LLMs and generative AI in defense and warfighting systems, the DOJ’s defense of the DoD in court, and AI governance. Understanding how these themes intersect helps journalists, analysts, and defense stakeholders anticipate regulatory trends.

For example, “generative AI” now appears routinely in procurement documents, and “AI governance” requirements are increasingly written into federal RFPs. The outcome of this lawsuit will likely set a precedent for how “supply‑chain risk” labels are applied to future AI vendors.

Further Reading

The original reporting on this legal battle was published by Wired, whose in‑depth analysis provides additional context on the political dynamics behind the DoD’s decision.

Related UBOS Resources

While the legal fight unfolds, organizations can start preparing with practical tools from UBOS:

- UBOS templates for quick start – pre‑built AI workflows that include compliance checkpoints.

- Workflow automation studio – drag‑and‑drop orchestration for secure model deployment.

- Web app editor on UBOS – build internal dashboards that log model usage in real time.

- UBOS pricing plans – transparent pricing for enterprises needing audit‑ready AI.

- UBOS portfolio examples – case studies of defense‑grade AI deployments.

Template Marketplace Highlights

UBOS’s marketplace offers ready‑made AI applications that can be repurposed for compliance‑focused projects:

- AI SEO Analyzer – ensures your AI‑generated content meets search‑engine guidelines.

- AI Article Copywriter – produces policy‑compliant documentation at scale.

- Talk with Claude AI app – a sandbox for testing Claude’s behavior under strict guardrails.

Conclusion & Call to Action

The Department of Defense’s firm stance against Anthropic’s lawsuit marks a decisive moment for AI governance in national security. As the Pentagon pivots toward a multi‑vendor, zero‑trust AI ecosystem, companies that embed transparency, auditability, and ethical safeguards will be best positioned to win future contracts.

If your organization is evaluating AI for defense‑related workloads, start today by exploring the About UBOS page to understand how our platform can help you meet stringent security standards while accelerating innovation.

Stay informed, stay compliant, and let UBOS be your partner in building the next generation of trustworthy AI.