- Updated: February 4, 2026

Conservative Q‑Learning Empowers Offline Training of Safety‑Critical Reinforcement Learning Agents

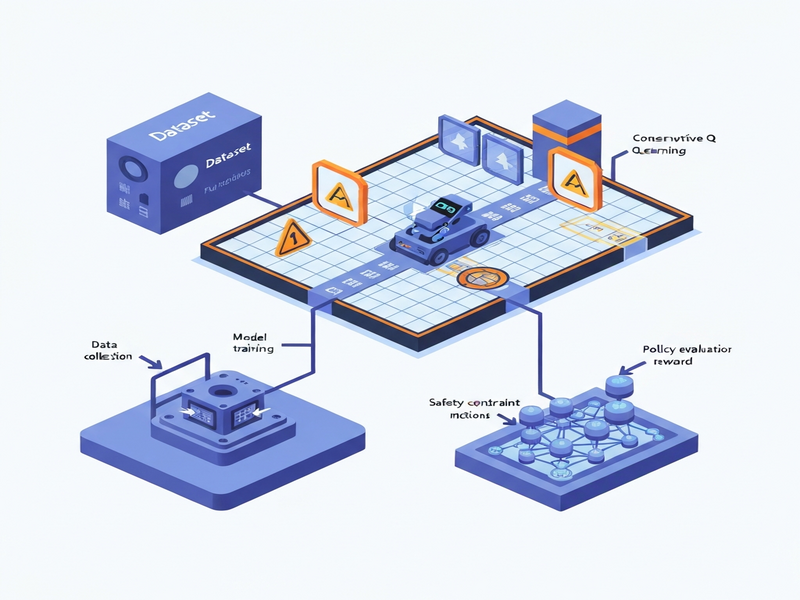

Conservative Q‑Learning (CQL) enables safety‑critical reinforcement learning agents to be trained entirely offline from fixed historical data, eliminating the need for risky real‑world exploration.

Why Offline Training Matters for Safety‑Critical RL

In domains such as autonomous robotics, medical decision support, and industrial control, a single unsafe action can cause catastrophic damage. Traditional online reinforcement learning (RL) relies on trial‑and‑error, which is unacceptable when safety is non‑negotiable. Offline RL, also called batch RL, avoids this problem by learning solely from a pre‑collected dataset of safe trajectories. When combined with Conservative Q‑Learning, the resulting policies are deliberately pessimistic about unseen actions, which reduces the chance of out‑of‑distribution (OOD) behavior.

In this article we walk through a complete RL tutorial that builds a safety‑critical GridWorld, generates a behavior dataset from a constrained policy, and trains both a Behavior Cloning baseline and a CQL agent using the d3rlpy library. The guide is written for AI researchers, machine‑learning engineers, and data scientists who need a reproducible pipeline that respects AI safety requirements.

Safety‑Critical Reinforcement Learning and Offline Training

Safety‑critical RL differs from conventional RL in three key dimensions:

- Risk‑averse objectives: The reward function heavily penalizes unsafe states.

- Deterministic evaluation: Policies are evaluated on a fixed test set before any deployment.

- Data‑centric development: All learning happens on fixed historical data, never on live interaction.

Offline training removes the exploration risk but introduces a new challenge: the dataset may not cover all relevant state‑action pairs. Naïve Q‑learning can overestimate values for unseen actions, leading to unsafe decisions when the policy is finally deployed. This is where Conservative Q‑Learning (CQL) shines.
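To make the overestimation problem concrete, here is a minimal sketch in plain Python. It assumes a single state with four actions whose true Q‑values are all zero and adds Gaussian noise to each estimate; the function name, the noise level, and the number of actions are illustrative choices, not part of the tutorial pipeline. Taking the max over noisy estimates gives a value that is biased upward, and in the offline setting nothing corrects that bias for actions the dataset never tried.

```python
import random
import statistics


def max_of_noisy_estimates(n_actions, noise_std, rng):
    """Return max_a Q_hat(s, a) when every true Q-value is exactly 0."""
    return max(rng.gauss(0.0, noise_std) for _ in range(n_actions))


rng = random.Random(0)  # fixed seed so the sketch is repeatable
samples = [
    max_of_noisy_estimates(n_actions=4, noise_std=1.0, rng=rng)
    for _ in range(10_000)
]
bias = statistics.mean(samples)

# The true maximum is 0.0, so any positive average is pure overestimation.
print(f"average max over noisy estimates: {bias:.2f} (true max is 0.0)")
```

The same mechanism appears in the bootstrapped target of Q‑learning, which is why a penalty on unsupported actions helps.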

For a deeper dive into how UBOS supports AI safety initiatives, explore the Enterprise AI platform by UBOS, which offers built‑in compliance checks and model‑governance tools.

Conservative Q‑Learning Meets d3rlpy

Conservative Q‑Learning adds a regularization term that penalizes Q‑values for actions not present in the dataset. The objective can be expressed as:

$$
J(\theta) = \mathbb{E}_{(s,a,r,s') \sim D}\Big[\big(Q_\theta(s,a) - (r + \gamma \max_{a'} Q_\theta(s',a'))\big)^2\Big] + \alpha\, \mathbb{E}_{s \sim D}\Big[\log \sum_{a} \exp Q_\theta(s,a) - \mathbb{E}_{a \sim \pi_D}\big[Q_\theta(s,a)\big]\Big]
$$

Here, α controls the conservatism level; higher values push the policy toward actions observed in the dataset. The OpenAI ChatGPT integration can be used to generate natural‑language explanations of why a particular action was deemed unsafe, further enhancing transparency.
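The two terms of the objective can be evaluated by hand on a tiny tabular example. The sketch below is an illustration only: it assumes Q is stored as a dictionary of per‑state lists, estimates both expectations by averaging over logged transitions, and uses hypothetical names (`cql_objective`, `q_inflated`, `q_flat`) that do not come from d3rlpy.

```python
import math


def logsumexp(values):
    """Numerically stable log(sum(exp(v))) for a list of floats."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def cql_objective(q, transitions, gamma, alpha):
    """TD error plus alpha times the conservative penalty, averaged over the data.

    q           -- dict mapping state -> list of Q-values, one per action
    transitions -- list of (s, a, r, s_next) tuples from the offline dataset
    """
    td_term = 0.0
    penalty = 0.0
    for s, a, r, s_next in transitions:
        target = r + gamma * max(q[s_next])
        td_term += (q[s][a] - target) ** 2
        # Pushes down all actions (logsumexp) and pulls up the logged action.
        penalty += logsumexp(q[s]) - q[s][a]
    n = len(transitions)
    return td_term / n + alpha * penalty / n


# The dataset only ever takes action 0 in state 0.
transitions = [(0, 0, 1.0, 1)]
q_inflated = {0: [1.0, 5.0], 1: [0.0, 0.0]}  # unseen action 1 looks attractive
q_flat = {0: [1.0, 1.0], 1: [0.0, 0.0]}      # unseen action 1 is not favoured

j_inflated = cql_objective(q_inflated, transitions, gamma=0.99, alpha=6.0)
j_flat = cql_objective(q_flat, transitions, gamma=0.99, alpha=6.0)
print(j_inflated > j_flat)
```

Both tables have zero TD error on the logged transition, so the difference in the objective comes entirely from the penalty on the inflated, never‑observed action.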

The d3rlpy library implements CQL out‑of‑the‑box, providing a clean Python API built on PyTorch. Its discrete variant, DiscreteCQL, exposes the α term as a single hyper‑parameter (named alpha in d3rlpy 2.x; the continuous‑action CQL calls the corresponding setting conservative_weight), making hyper‑parameter tuning straightforward.

UBOS’s Workflow automation studio can orchestrate the entire training pipeline—from data ingestion to model versioning—without writing a single line of glue code.

Step‑by‑Step Offline RL Tutorial

1️⃣ Environment Setup

Begin by installing the required packages. The following command pulls the latest versions of d3rlpy, gymnasium, and supporting libraries:

pip install -U "d3rlpy" "gymnasium" "numpy" "torch" "matplotlib" "scikit-learn"

Set a fixed seed so that runs are reproducible. The Web app editor on UBOS can store this script as a reusable component.
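A seeding helper might look like the sketch below. The function name `set_global_seed` is our own, and the NumPy and PyTorch calls are wrapped in import guards so the snippet also runs in an environment where those packages are missing.

```python
import random


def set_global_seed(seed: int) -> None:
    """Seed every random number generator the pipeline is likely to touch."""
    random.seed(seed)
    try:
        import numpy as np

        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch

        torch.manual_seed(seed)
    except ImportError:
        pass  # PyTorch not installed; nothing to seed


set_global_seed(42)
first = random.random()
set_global_seed(42)
second = random.random()
print(first == second)
```

d3rlpy also ships its own `d3rlpy.seed(n)` helper; check the documentation of your installed version for exactly what it covers. Seeding makes runs repeatable on one machine, but results can still differ across hardware or library versions.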

2️⃣ Generate a Safe Behavior Dataset

We define a custom SafetyCriticalGridWorld where hazards carry a -100 penalty and reaching the goal yields +50. A constrained policy—implemented as safe_behavior_policy—explores the grid with a small ε‑greedy noise, ensuring that only low‑risk trajectories are recorded.

Running generate_offline_episodes(env, n_episodes=500, epsilon=0.22) yields a collection of episodes that are later converted into an MDPDataset compatible with d3rlpy.
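The data‑collection loop can be sketched as follows. To keep the example self‑contained it uses a hypothetical one‑dimensional `CorridorEnv` instead of the GridWorld, and the bodies of `safe_behavior_policy` and `generate_offline_episodes` are our own guesses at a reasonable implementation; the `seed` and `max_steps` arguments and the `flatten` helper are additions for illustration.

```python
import random


class CorridorEnv:
    """Hypothetical stand-in: cells 0..4, start at 2, hazard at 0, goal at 4."""

    n_actions = 2  # 0 = left, 1 = right

    def reset(self):
        self.pos = 2
        return self.pos

    def step(self, action):
        self.pos += 1 if action == 1 else -1
        if self.pos == 0:
            return self.pos, -100.0, True
        if self.pos == 4:
            return self.pos, 50.0, True
        return self.pos, -1.0, False


def safe_behavior_policy(state):
    """Always move right, away from the hazard (assumed constraint)."""
    return 1


def generate_offline_episodes(env, n_episodes=500, epsilon=0.22, seed=0, max_steps=20):
    rng = random.Random(seed)
    episodes = []
    for _ in range(n_episodes):
        state, episode = env.reset(), []
        for _ in range(max_steps):
            # Epsilon-greedy noise around the constrained policy.
            if rng.random() < epsilon:
                action = rng.randrange(env.n_actions)
            else:
                action = safe_behavior_policy(state)
            next_state, reward, done = env.step(action)
            episode.append((state, action, reward, done))
            state = next_state
            if done:
                break
        episodes.append(episode)
    return episodes


def flatten(episodes):
    """Turn a list of episodes into four aligned lists, one entry per step."""
    obs, acts, rews, terms = [], [], [], []
    for episode in episodes:
        for state, action, reward, done in episode:
            obs.append([float(state)])
            acts.append(action)
            rews.append(reward)
            terms.append(1.0 if done else 0.0)
    return obs, acts, rews, terms


episodes = generate_offline_episodes(CorridorEnv(), n_episodes=50)
observations, actions, rewards, terminals = flatten(episodes)
print(len(episodes), len(observations))
```

Aligned observation, action, reward, and terminal arrays are the shape of input that d3rlpy's MDPDataset expects once converted to NumPy arrays. Note that random exploration steps can still reach a hazard, so it is worth checking the recorded rewards before treating the dataset as safe.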

For visual learners, the UBOS templates for quick start include a pre‑built GridWorld visualizer that can be dropped into any notebook.

3️⃣ Train Behavior Cloning (BC) and Conservative Q‑Learning (CQL)

Two agents are trained on the same offline dataset:

- Behavior Cloning (BC): Simple supervised learning that mimics the dataset actions.

- Conservative Q‑Learning (CQL): Optimizes a Q‑function with a conservatism penalty (α = 6.0 in our example).

Both models are trained by calling fit() with a modest number of steps (25k for BC, 80k for CQL). The training logs are automatically stored in the UBOS portfolio examples for later comparison.
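A training routine along these lines might look like the sketch below. It assumes the d3rlpy 2.x configuration API, where `DiscreteBCConfig` and `DiscreteCQLConfig` are turned into algorithms with `.create()` and the discrete CQL weight is passed as `alpha`; older releases spell things differently, so check the version you have installed. The wrapper name `train_agents`, the CPU device, and the `n_steps_per_epoch` value are our own choices.

```python
def train_agents(dataset, bc_steps=25_000, cql_steps=80_000, alpha=6.0):
    """Fit a BC baseline and a discrete CQL agent on the same offline dataset.

    dataset -- a d3rlpy dataset with discrete actions (for example an MDPDataset)
    """
    # Imported here so this module can be loaded without d3rlpy installed.
    import d3rlpy

    bc = d3rlpy.algos.DiscreteBCConfig().create(device="cpu")
    cql = d3rlpy.algos.DiscreteCQLConfig(alpha=alpha).create(device="cpu")

    # n_steps_per_epoch only controls how often metrics are logged and saved.
    bc.fit(dataset, n_steps=bc_steps, n_steps_per_epoch=5_000)
    cql.fit(dataset, n_steps=cql_steps, n_steps_per_epoch=5_000)
    return bc, cql
```

Calling `train_agents(dataset)` returns both fitted agents, whose `predict()` method maps a batch of observations to greedy actions.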

4️⃣ Controlled Evaluation & Diagnostics

After training, we run a small set of rollouts (n_episodes=30) to compute:

- Mean return and standard deviation.

- Hazard encounter rate (safety metric).

- Goal‑reach rate (task success).

We also calculate an action‑mismatch rate that measures how often the learned policy selects actions not present in the offline data. CQL typically shows a lower mismatch, confirming its conservative bias.
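These diagnostics are simple to compute once each rollout is summarized as a small record. The sketch below assumes a hypothetical record format (`return`, `hit_hazard`, `reached_goal`) and one possible definition of the mismatch rate: the fraction of distinct dataset states in which the policy picks an action that was never logged for that state. The tutorial's exact definition may differ.

```python
import statistics


def summarize_rollouts(rollouts):
    """Aggregate per-episode records into return and safety metrics."""
    returns = [r["return"] for r in rollouts]
    n = len(rollouts)
    return {
        "mean_return": statistics.mean(returns),
        "std_return": statistics.pstdev(returns),  # population std over the rollouts
        "hazard_rate": sum(r["hit_hazard"] for r in rollouts) / n,
        "goal_rate": sum(r["reached_goal"] for r in rollouts) / n,
    }


def action_mismatch_rate(policy, dataset_pairs):
    """Share of dataset states where the policy's action was never logged."""
    seen = {}
    for state, action in dataset_pairs:
        seen.setdefault(state, set()).add(action)
    mismatches = sum(1 for state in seen if policy(state) not in seen[state])
    return mismatches / len(seen)


rollouts = [
    {"return": 40.0, "hit_hazard": False, "reached_goal": True},
    {"return": -100.0, "hit_hazard": True, "reached_goal": False},
]
summary = summarize_rollouts(rollouts)
mismatch = action_mismatch_rate(lambda state: 1, [(0, 1), (0, 1), (1, 0)])
print(summary, mismatch)
```

With only 30 evaluation episodes the hazard and goal rates are coarse estimates, so small differences between BC and CQL should be read with caution.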

All evaluation plots are rendered with matplotlib and can be embedded directly into a UBOS dashboard via the AI marketing agents visual component.

5️⃣ Save and Deploy the Policies

Both agents are serialized to .pt files and uploaded to UBOS’s model registry. From there, they can be served as REST endpoints or wrapped into a Telegram integration on UBOS for real‑time interaction.

For example, the GPT‑Powered Telegram Bot template can be repurposed to expose the CQL policy to field operators, delivering safe action recommendations on demand.

Why Choose Conservative Q‑Learning for Safety‑Critical Applications?

Below are the most compelling advantages, illustrated with real‑world scenarios:

| Benefit | Typical Use‑Case |
|---|---|
| Reduced OOD actions | Autonomous drones navigating hazardous zones |
| Data‑efficient learning | Medical treatment recommendation from historic patient records |
| Regulatory compliance | Industrial control systems requiring audit trails |
| Fast prototyping | Start‑up robotics platforms using UBOS for startups to spin up RL agents in days instead of months |

In finance, CQL suits trading bots that are meant to keep their orders within historically observed risk limits. In healthcare, offline RL with CQL can help design dosage policies that respect patient safety constraints while still aiming to improve outcomes.

The UBOS solutions for SMBs include pre‑packaged CQL pipelines, making it easy for smaller teams to adopt AI safety best practices without building infrastructure from scratch.

Take the Next Step with UBOS

Training safety‑critical reinforcement learning agents offline is no longer a research‑only activity. With Conservative Q‑Learning and the flexible d3rlpy library, you can turn static logs into robust, low‑risk policies in a matter of hours.

Ready to accelerate your AI safety projects?

- Explore the UBOS platform overview for end‑to‑end model management.

- Check out the UBOS pricing plans to find a tier that fits your team.

- Join the UBOS partner program and get early access to new safety‑centric modules.

For a hands‑on example, try the AI SEO Analyzer template—built with the same offline‑learning principles—to see how data‑driven AI can boost your digital presence while staying safe.

Stay updated with the latest breakthroughs in offline RL, AI safety, and UBOS innovations by following our About UBOS page and subscribing to the newsletter.