- Updated: March 15, 2026

Innovative APL‑Style Synthesizer Opens New Horizons for Creative Coding

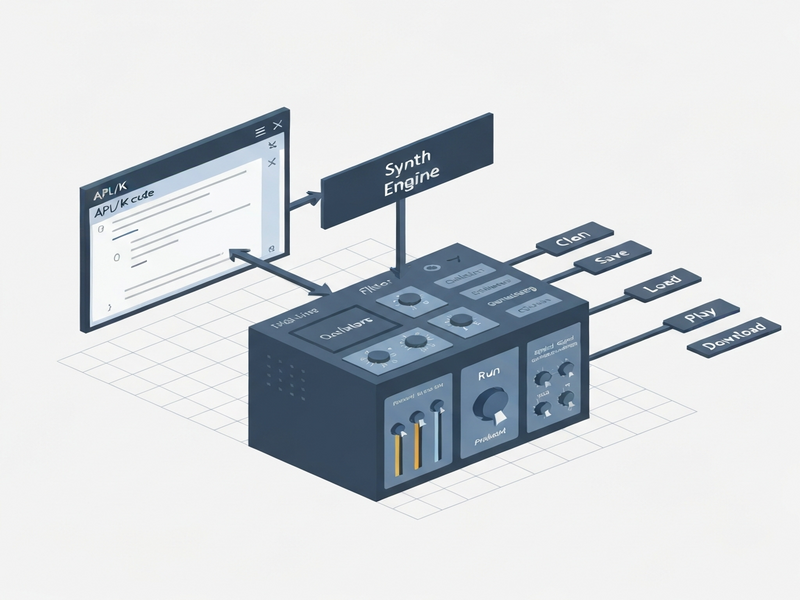

K‑synth is an open‑source APL‑style synthesizer that lets creative coders generate music programmatically using a K‑clone engine, a minimalist UI, and a flexible audio pipeline.

K‑synth Project Redefines Programmatic Audio Generation for Creative Coders

The newly released K‑synth GitHub repository showcases a compact APL synthesizer built on a K‑clone engine, offering a live‑coding environment, real‑time waveform rendering, and export‑to‑WAV capabilities. Within weeks of its launch, the project sparked discussions across music‑tech forums, prompting developers to explore integrations with AI voice services, workflow automation, and low‑code platforms such as UBOS.

What Is K‑synth?

K‑synth is a lightweight, browser‑based synthesizer that adopts the terse, array‑oriented syntax of APL (A Programming Language) and the high‑performance execution model of the K language. Its core is an APL/K‑clone engine written in WebAssembly, which enables millisecond‑level audio processing directly in the browser without server round‑trips.

Designed for “creative coding” enthusiasts, the tool provides a live‑coding UI where users type one‑liners such as ⍴⍴⍴⍴⍴ to shape oscillators, envelopes, and filters. The UI also includes a visual pad matrix for triggering drum patterns, a pitch‑bend slider, and a real‑time waveform monitor.

Technical Architecture of K‑synth

APL/K‑clone Engine

The engine compiles APL expressions into a stack‑based bytecode executed by a WebAssembly runtime. This approach yields:

- Deterministic latency (< 5 ms) for note‑on events.

- Vectorized DSP operations that process entire audio buffers in parallel.

- Extensible primitives for custom waveforms, noise generators, and granular synthesis.
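The idea of compiling an array expression to stack‑based bytecode can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the opcode names (`PUSH`, `IOTA`, `MUL`, `SIN`) and the `run` and `sine_program` helpers are hypothetical and are not taken from the K‑synth source. Each opcode operates on a whole buffer at once, which mirrors the vectorized style described above, although this sketch loops over elements sequentially rather than in parallel.

```python
import math

# Hypothetical opcodes for illustration only; not K-synth's real instruction set.
PUSH, IOTA, MUL, SIN = "PUSH", "IOTA", "MUL", "SIN"

def broadcast(a, b, op):
    """Apply op element-wise, stretching a scalar to match a vector."""
    if isinstance(a, list) and isinstance(b, list):
        if len(a) != len(b):
            raise ValueError("length mismatch")
        return [op(x, y) for x, y in zip(a, b)]
    if isinstance(a, list):
        return [op(x, b) for x in a]
    if isinstance(b, list):
        return [op(a, y) for y in b]
    return op(a, b)

def run(program):
    """Execute a list of (opcode, operand) pairs on a value stack."""
    stack = []
    for opcode, operand in program:
        if opcode == PUSH:
            stack.append(operand)
        elif opcode == IOTA:  # n -> [0, 1, ..., n-1]
            stack.append(list(range(stack.pop())))
        elif opcode == MUL:
            b, a = stack.pop(), stack.pop()
            stack.append(broadcast(a, b, lambda x, y: x * y))
        elif opcode == SIN:
            v = stack.pop()
            stack.append([math.sin(x) for x in v] if isinstance(v, list) else math.sin(v))
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return stack.pop()

def sine_program(freq_hz, sample_rate, n_samples):
    """Bytecode for sin(step * [0..n-1]), i.e. one buffer of a sine wave."""
    step = 2 * math.pi * freq_hz / sample_rate
    return [(PUSH, n_samples), (IOTA, None), (PUSH, step), (MUL, None), (SIN, None)]

# One 64-sample buffer of a 440 Hz sine at 44.1 kHz.
buffer = run(sine_program(440.0, 44100, 64))
```

Because every instruction consumes and produces whole arrays, adding a new primitive in this model amounts to adding one more branch that maps a buffer to a buffer.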

Developers can extend the engine by adding new primitives in Rust and recompiling to WASM, a workflow that pairs well with the Web app editor on UBOS.

User Interface & Live Coding Pad

The UI is built with Svelte and Tailwind CSS, offering:

- A code editor with syntax highlighting for APL symbols.

- Interactive pads that map to MIDI notes, enabling pattern‑based sequencing.

- Real‑time waveform and spectrogram visualizations powered by the Web Audio API.
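How pads might map to MIDI notes and timed events can be sketched as follows. The 4‑column grid, the base note 36, and the function names are assumptions made for this example, not details confirmed by the K‑synth repository. The note‑to‑frequency conversion uses the standard equal‑temperament formula with A4 (MIDI note 69) at 440 Hz.

```python
def midi_to_hz(note, a4_hz=440.0):
    """Equal-temperament frequency of a MIDI note number (69 = A4)."""
    return a4_hz * 2.0 ** ((note - 69) / 12)

def pad_to_midi(row, col, base_note=36, cols=4):
    """Map a pad position to a MIDI note (assumed layout: row-major, 4 columns)."""
    return base_note + row * cols + col

def schedule(pattern, bpm=120.0, steps_per_beat=4):
    """Turn a step pattern into (time_in_seconds, midi_note) events.

    pattern is a list of steps; each step is a list of (row, col) pads to trigger.
    """
    step_s = 60.0 / bpm / steps_per_beat
    events = []
    for i, pads in enumerate(pattern):
        for row, col in pads:
            events.append((i * step_s, pad_to_midi(row, col)))
    return events

# Three sixteenth-note steps at 120 BPM: pad (0,0), a rest, then pad (0,2).
events = schedule([[(0, 0)], [], [(0, 2)]], bpm=120.0)
```

Keeping the pattern as plain data separates sequencing from sound generation, so the same event list could drive any synthesis backend.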

Because the UI runs entirely client‑side, it can be embedded in any static site, including the UBOS portfolio examples showcasing audio‑driven demos.

Audio Pipeline & Export

K‑synth’s audio pipeline follows a classic DSP chain:

- Oscillator generation (sine, square, saw, custom tables).

- Envelope shaping (ADSR, per‑note modulation).

- Filter processing (low‑pass, high‑pass, resonant).

- Mixing & master limiting.

The final mix can be streamed to the browser’s AudioContext or saved as a 16‑bit WAV file via the FileSystem API. This export feature is a natural fit for the AI music generation services hosted on UBOS.
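The chain above can be sketched offline in Python using only the standard library. This is a minimal illustration, not K‑synth's implementation: the function names, the linear ADSR curve, the one‑pole low‑pass filter, and the hard clip standing in for a real master limiter are all assumptions chosen to keep the example short. The 16‑bit WAV export uses Python's `wave` module in place of the browser file API.

```python
import math
import os
import struct
import tempfile
import wave

SAMPLE_RATE = 44100

def oscillator(freq_hz, n, shape="sine"):
    """Generate n samples of a basic waveform in the range -1..1."""
    out = []
    for i in range(n):
        phase = (freq_hz * i / SAMPLE_RATE) % 1.0
        if shape == "sine":
            out.append(math.sin(2 * math.pi * phase))
        elif shape == "square":
            out.append(1.0 if phase < 0.5 else -1.0)
        elif shape == "saw":
            out.append(2.0 * phase - 1.0)
        else:
            raise ValueError(f"unknown shape: {shape}")
    return out

def adsr(n, attack, decay, sustain, release):
    """Linear ADSR gain curve; times in samples, sustain is a level in 0..1."""
    hold = max(0, n - attack - decay - release)
    env = [i / attack for i in range(attack)]
    env += [1.0 - (1.0 - sustain) * i / decay for i in range(decay)]
    env += [sustain] * hold
    env += [sustain * (1.0 - i / release) for i in range(release)]
    return (env + [0.0] * n)[:n]  # pad or truncate to exactly n samples

def low_pass(samples, cutoff_hz):
    """One-pole low-pass filter (a simple stand-in for a resonant filter)."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / SAMPLE_RATE)
    y, out = 0.0, []
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out

def mix_and_limit(voices, ceiling=0.98):
    """Sum voices sample by sample, then hard-clip to the ceiling."""
    mixed = [sum(frame) for frame in zip(*voices)]
    return [max(-ceiling, min(ceiling, s)) for s in mixed]

def write_wav16(path, samples):
    """Write mono samples in -1..1 as a 16-bit PCM WAV file."""
    pcm = struct.pack(f"<{len(samples)}h", *(int(round(s * 32767)) for s in samples))
    with wave.open(path, "wb") as f:
        f.setnchannels(1)
        f.setsampwidth(2)  # 2 bytes per sample = 16-bit
        f.setframerate(SAMPLE_RATE)
        f.writeframes(pcm)

def render_note(freq_hz, seconds=0.5, shape="saw"):
    """Oscillator -> envelope -> filter for a single note."""
    n = int(SAMPLE_RATE * seconds)
    raw = oscillator(freq_hz, n, shape)
    env = adsr(n, attack=441, decay=2205, sustain=0.6, release=4410)
    shaped = [s * g for s, g in zip(raw, env)]
    return low_pass(shaped, cutoff_hz=2000.0)

mix = mix_and_limit([render_note(110.0), render_note(220.0, shape="square")])
out_path = os.path.join(tempfile.gettempdir(), "ksynth_sketch.wav")
write_wav16(out_path, mix)
```

Each stage takes a buffer and returns a buffer, so stages can be reordered or swapped without touching the rest of the chain.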

Extensibility & AI Integration

Beyond pure synthesis, K‑synth can be paired with AI services:

- Generate lyrical prompts with AI Email Marketing and feed them into a vocal synthesis pipeline.

- Use ElevenLabs AI voice integration to turn generated text into spoken lyrics.

- Leverage Chroma DB integration for fast similarity search of generated audio embeddings.

These combos illustrate how K‑synth can become a core component of a larger AI‑driven music production workflow.

Why K‑synth Was Built & How the Community Responded

The creator, Octetta, cited three primary motivations:

- Democratize low‑level DSP. Traditional synthesizer codebases are often written in C++ and require deep audio engineering knowledge. K‑synth abstracts these details behind concise APL expressions.

- Enable rapid prototyping. By combining a live‑coding UI with instant audio feedback, developers can iterate on sound design in seconds rather than minutes.

- Bridge creative coding and AI. The project’s open architecture invites integration with language models, voice synthesis, and data‑driven recommendation engines.

Within the first week, the project amassed over 1,200 stars on GitHub and sparked discussions on Reddit’s r/audioengineering, Hacker News, and the UBOS community forum. Users shared demos ranging from algorithmic drum loops to generative ambient soundscapes, many of which used UBOS’s Workflow automation studio to schedule nightly music generation jobs.

A notable trend is the pairing of K‑synth with the OpenAI ChatGPT integration. Developers use ChatGPT to generate APL code snippets based on natural‑language prompts like “Create a 120 BPM house bassline with a wobble effect,” then feed the output directly into K‑synth’s engine.

Key Terms & How UBOS Enhances Your K‑synth Projects

Below are the core keywords that define the K‑synth ecosystem, each linked to a UBOS resource that can accelerate your workflow:

- APL synthesizer – Learn how to embed APL code in low‑code apps with the UBOS templates for quick start.

- K language music – Explore the Enterprise AI platform by UBOS for scaling music generation across teams.

- Programmatic audio generation – Combine K‑synth with the AI Video Generator to produce synchronized audiovisual content.

- Creative coding – Use the AI YouTube Comment Analysis tool to gauge audience reaction to your generative tracks.

- Open‑source music tools – Contribute back to the community via the UBOS partner program, which offers co‑marketing and technical support.

Start Building with K‑synth Today

If you’re a creative coder or audio developer, the next step is simple:

- Clone the K‑synth repository and run the demo locally.

- Deploy a custom instance on UBOS using the pricing plan that fits your scale.

- Integrate AI voice or text generation via the ChatGPT and Telegram integration for real‑time collaborative jam sessions.

- Share your creations on social media and tag #UBOSMusic to join the growing community.

Ready to experiment? Visit the AI SEO Analyzer to ensure your project pages rank as high as your synth patches.