- Updated: January 31, 2026

- 7 min read

Rust Rayon Mutex Deadlock Explained – Preventing Robot Freeze

Answer: A Rayon mutex deadlock in Rust happens when a thread locks a std::sync::Mutex and, while still holding that lock, calls a function that internally uses Rayon’s work‑stealing thread pool; the Rayon workers then try to acquire the same mutex, causing a circular wait and freezing the program.

Why a 2 AM Robot Freeze Became a Rust Concurrency Lesson

At 2 a.m., a developer watching an autonomous sidewalk robot saw the control loop stall without a crash or error. After eight hours of debugging, the culprit turned out to be a subtle deadlock between a Mutex and the Rayon library. The full story is documented in the original post by Campedersen, and it offers a perfect case study for anyone building high‑frequency, multithreaded Rust applications.

What Went Wrong: The Original Rayon Mutex Deadlock

The robot’s control loop runs at 100 Hz, reading sensors, performing calculations, and sending motor commands every 10 ms. After a LiDAR stream was added via WebRTC, the loop would freeze roughly 16 seconds after a client connected. The watchdog detected the stall, but no panic or error was raised: just a silent deadlock.

Key observations from the original debugging session:

- Switching between tokio and std::thread::sleep made no difference.

- Replacing the async mutex with std::sync::Mutex didn’t solve the problem.

- Even isolating the loop in its own OS thread still resulted in the same freeze.

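The breakthrough came from a heartbeat thread that logged the iteration counter every five seconds. When the loop stopped, the heartbeat reported “STUCK at iteration 1615”. At 100 Hz, 1,615 iterations is about 16 seconds, roughly in line with the observed freeze. A counter that stops advancing entirely points to a true deadlock rather than a performance bottleneck, which would only slow the count down.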
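A heartbeat of this kind can be sketched with only the standard library. This is a minimal illustration, not the original author's code. The names check_heartbeat and spawn_heartbeat are hypothetical, and the sketch assumes the control loop increments a shared AtomicU64 once per iteration. The watchdog touches only the atomic and never the Mutex, so it keeps reporting even when the control loop is deadlocked.

```rust
use std::sync::atomic::{AtomicU64, Ordering};
use std::sync::Arc;
use std::thread;
use std::time::Duration;

/// Compares the loop's iteration counter with the value seen on the previous check.
/// Returns Some(iteration) if the counter has not advanced, i.e. the loop looks stuck.
fn check_heartbeat(counter: &AtomicU64, last_seen: &mut u64) -> Option<u64> {
    let now = counter.load(Ordering::Relaxed);
    let stuck = now == *last_seen;
    *last_seen = now;
    if stuck {
        Some(now)
    } else {
        None
    }
}

/// Spawns a watchdog thread (hypothetical helper) that checks the counter every `interval`.
/// It only reads the atomic, so it keeps running even if the control loop deadlocks.
fn spawn_heartbeat(counter: Arc<AtomicU64>, interval: Duration) -> thread::JoinHandle<()> {
    thread::spawn(move || {
        let mut last_seen = counter.load(Ordering::Relaxed);
        loop {
            thread::sleep(interval);
            match check_heartbeat(&counter, &mut last_seen) {
                Some(iter) => eprintln!("STUCK at iteration {iter}"),
                None => eprintln!("alive at iteration {last_seen}"),
            }
        }
    })
}
```

In the control loop, call counter.fetch_add(1, Ordering::Relaxed) once per iteration. Start the watchdog with spawn_heartbeat(Arc::clone(&counter), Duration::from_secs(5)) to match the five-second interval described above.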

Understanding Mutexes and Rayon’s Work‑Stealing Model

To grasp why the deadlock occurred, we need to look at two building blocks: the standard library’s Mutex and the thread pool provided by the Rayon crate.

How std::sync::Mutex Works

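A Mutex provides exclusive access to shared data. When a thread calls lock(), it either acquires the lock immediately or blocks until the lock becomes available. The lock is released automatically when the returned MutexGuard goes out of scope. std::sync::Mutex is not reentrant: if a thread calls lock() on a mutex it already holds, the call will not return (the standard library leaves the exact behavior unspecified; it may deadlock or panic). In a single‑threaded context this is straightforward, but in a multithreaded environment the order of lock acquisition becomes critical.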
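The guard-scoped release can be seen in a small standard-library sketch. The helper names is_locked and guard_scope_demo are hypothetical. The probe uses try_lock because calling lock() a second time on the thread that already holds the mutex would not return.

```rust
use std::sync::{Mutex, TryLockError};

/// Reports whether the mutex is currently held, without blocking.
/// A second lock() on the thread that already holds it would not return, so probe with try_lock.
fn is_locked<T>(m: &Mutex<T>) -> bool {
    matches!(m.try_lock(), Err(TryLockError::WouldBlock))
}

/// Shows that the lock is held exactly as long as its guard is alive.
/// Returns (held inside the block, held after the block, final value).
fn guard_scope_demo() -> (bool, bool, u32) {
    let shared = Mutex::new(0u32);
    let held_inside = {
        let mut guard = shared.lock().unwrap(); // acquire (blocks if another thread holds it)
        *guard += 1; // exclusive access through the guard
        is_locked(&shared) // the guard is still alive here
    }; // guard dropped at the end of the block: lock released
    let held_after = is_locked(&shared);
    let value = *shared.lock().unwrap();
    (held_inside, held_after, value)
}
```

Calling guard_scope_demo() returns (true, false, 1). The mutex is busy only while the guard is in scope.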

Rayon’s Work‑Stealing Thread Pool

Rayon is a data‑parallelism library that maintains a pool of worker threads. When you invoke rayon::join or par_iter, the work is split into tasks that are scheduled dynamically across the pool. Idle workers “steal” queued tasks from busy ones to keep all cores busy, which can substantially improve throughput for CPU‑bound workloads. A caller outside the pool blocks until the work finishes. A worker thread waiting inside join looks for other tasks to run in the meantime.
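As a rough picture of the fork-join shape Rayon schedules, the sketch below builds a join from std::thread::scope. It is only a stand-in, assumed for illustration. Unlike rayon::join, it spawns a fresh OS thread for every split and does no work stealing. The names join and par_sum are hypothetical.

```rust
use std::thread;

/// Runs two closures in parallel and returns both results.
/// Std-only stand-in for rayon::join: no pool, no work stealing, one new thread per call.
fn join<A, B, RA, RB>(a: A, b: B) -> (RA, RB)
where
    A: FnOnce() -> RA + Send,
    B: FnOnce() -> RB + Send,
    RA: Send,
    RB: Send,
{
    thread::scope(|s| {
        let hb = s.spawn(b); // closure B runs on another thread
        let ra = a(); // closure A runs on the calling thread
        (ra, hb.join().unwrap()) // the caller blocks until B is done
    })
}

/// Divide-and-conquer sum: the kind of recursive split Rayon schedules across its pool.
fn par_sum(data: &[u64]) -> u64 {
    if data.len() <= 1024 {
        return data.iter().sum(); // small enough: finish sequentially
    }
    let (left, right) = data.split_at(data.len() / 2);
    let (l, r) = join(|| par_sum(left), || par_sum(right));
    l + r
}
```

With the rayon crate and rayon::prelude::* in scope, rayon::join(|| par_sum(left), || par_sum(right)) or data.par_iter().sum::<u64>() would replace the hand-rolled join.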

The Circular Wait That Triggers a Deadlock

In the robot example, the main control loop held a Mutex protecting shared state (e.g., sensor buffers). While still holding that lock, it called recorder.log_lidar_scan(), a function from a third‑party recording library that internally uses Rayon for parallel logging.

Rayon’s worker threads then tried to acquire the same Mutex to write log entries. The control loop could not release the lock until log_lidar_scan() returned, and log_lidar_scan() could not return until the workers finished. Each side waited on the other: a classic circular wait.
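The circular wait can be reproduced without Rayon. In this hypothetical sketch, a scoped thread stands in for a Rayon worker, and the caller holds the guard while waiting for it. This mirrors the control loop waiting on log_lidar_scan(). The worker uses try_lock so the demo reports the problem instead of hanging. With lock(), the worker would block forever, and so would the caller.

```rust
use std::sync::{Mutex, TryLockError};
use std::thread;

/// A worker (stand-in for a Rayon task) that needs the caller's lock.
/// Returns true if the lock was taken, i.e. a real lock() here would block.
fn worker_would_block(shared: &Mutex<Vec<String>>) -> bool {
    match shared.try_lock() {
        Ok(mut log) => {
            log.push("log entry".to_string());
            false
        }
        Err(TryLockError::WouldBlock) => true,
        Err(TryLockError::Poisoned(_)) => panic!("lock poisoned"),
    }
}

/// The buggy shape: hold the guard, then block until a worker thread finishes.
fn call_worker_while_locked(shared: &Mutex<Vec<String>>) -> bool {
    let _state = shared.lock().unwrap(); // the caller holds the lock...
    // ...and waits for a worker that needs the same lock
    thread::scope(|s| s.spawn(|| worker_would_block(shared)).join().unwrap())
}
```

call_worker_while_locked returns true: the worker found the lock held. Once the caller's guard is dropped, the same worker succeeds.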

Why This Is a Known Rayon Foot‑Gun

Rayon’s issue tracker (issue #592) warns against calling into Rayon while holding a lock. Rayon assumes any task may run on any worker thread, so a task that needs a lock the caller already holds can never make progress. Violating this assumption leads to exactly the deadlock pattern observed here.

How to Fix the Deadlock and Prevent Future Ones

The fix is surprisingly small but powerful: avoid holding a Mutex while invoking Rayon‑based code. Below are concrete steps and best‑practice recommendations for Rust developers working with multithreading and third‑party libraries.

Step‑by‑Step Remedy

- Identify Critical Sections: Pinpoint every place where a Mutex is locked.

- Minimize Lock Scope: Move expensive or blocking calls (e.g., I/O, logging, network requests) outside the lock’s scope.

- Separate Concerns: If a library uses Rayon internally, call it before acquiring your own lock, or clone the data you need and release the lock first.

- Use Try‑Lock for Diagnostics: Replace lock() with try_lock() during debugging to detect potential contention early.

- Instrument Heartbeat Monitors: As the robot story shows, a lightweight watchdog thread can surface a stall within one check interval.

- Audit Dependencies: Review Cargo.lock for indirect dependencies that may spawn their own thread pools (e.g., Rayon, Tokio, GStreamer). Running cargo tree -i rayon lists the crates that pull in Rayon.
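The try-lock diagnostic can be made more useful by polling with a deadline instead of giving up on the first failed attempt. This is a minimal sketch, assuming a debugging build where a short busy-wait is acceptable. lock_with_timeout and its site label are hypothetical names. std::sync::Mutex has no timed lock of its own, whereas parking_lot offers try_lock_for.

```rust
use std::sync::{Mutex, MutexGuard, TryLockError};
use std::thread;
use std::time::{Duration, Instant};

/// Debug helper: poll try_lock until `timeout` expires instead of blocking forever.
/// Returns None (after logging) if the lock stayed busy, which hints at a long or
/// circular hold. It helps locate the problem; it does not fix the lock scope.
fn lock_with_timeout<'a, T>(
    m: &'a Mutex<T>,
    timeout: Duration,
    site: &str,
) -> Option<MutexGuard<'a, T>> {
    let deadline = Instant::now() + timeout;
    loop {
        match m.try_lock() {
            Ok(guard) => return Some(guard),
            Err(TryLockError::Poisoned(p)) => {
                // A previous holder panicked; recover the guard but say so.
                eprintln!("lock at {site} was poisoned; recovering");
                return Some(p.into_inner());
            }
            Err(TryLockError::WouldBlock) => {
                if Instant::now() >= deadline {
                    eprintln!("possible deadlock: lock at {site} busy for {timeout:?}");
                    return None;
                }
                thread::sleep(Duration::from_millis(1)); // back off briefly, then retry
            }
        }
    }
}
```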

Applying these steps to the robot code changed the snippet from:

```rust
let mut state = shared.lock().unwrap();
// … do work …
if let Some(scan) = &*lidar_rx.borrow() {
    // `state` is still alive here, so the Mutex stays locked during this call
    recorder.log_lidar_scan(scan); // ❌ deadlock
}
```

to the safe version:

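```rust
let scan_opt = {
    let _guard = shared.lock().unwrap();
    // Extract data while holding the lock
    lidar_rx.borrow().clone()
}; // `_guard` is dropped here, releasing the Mutex
if let Some(scan) = scan_opt {
    // Pass a reference, as in the original call
    recorder.log_lidar_scan(&scan); // ✅ no lock held
}
```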

Now log_lidar_scan() runs its Rayon work while the control loop holds no lock. The workers can take the mutex, the call returns, and the control loop resumes its 100 Hz rhythm.
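For a runnable analogue of the fixed snippet, the sketch below uses hypothetical stand-ins: a Shared struct for the robot's state, a Vec<f32> for a scan, and a log_scan_parallel function. Its scoped threads each call lock() on the same Mutex, the way the story describes Rayon's workers. control_step drops its guard before logging, so the workers can acquire the lock and the scope finishes. Moving the log_scan_parallel call inside the locked block would recreate the deadlock.

```rust
use std::sync::Mutex;
use std::thread;

/// Hypothetical stand-in for a LiDAR scan.
type Scan = Vec<f32>;

/// Hypothetical stand-in for the robot's shared state.
struct Shared {
    iteration: u64,
    latest_scan: Option<Scan>,
    log: Vec<String>,
}

/// Stand-in for a recorder that fans work out to worker threads; every worker
/// calls lock() on the same Mutex, like the Rayon workers in the story.
fn log_scan_parallel(shared: &Mutex<Shared>, scan: &Scan) {
    thread::scope(|s| {
        for chunk in scan.chunks(2) {
            s.spawn(move || {
                let entry = format!("{} points", chunk.len());
                shared.lock().unwrap().log.push(entry); // blocks while anyone holds the lock
            });
        }
    }); // the scope joins every worker before returning
}

/// One control-loop step in the fixed shape: work and copy under the lock,
/// drop the guard, then call the code that uses other threads.
fn control_step(shared: &Mutex<Shared>) {
    let scan_opt = {
        let mut state = shared.lock().unwrap();
        state.iteration += 1; // work done while holding the lock
        state.latest_scan.clone() // copy out what the logger needs
    }; // `state` is dropped here, releasing the Mutex
    if let Some(scan) = scan_opt {
        log_scan_parallel(shared, &scan); // no lock held, so the workers can proceed
    }
}
```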

Additional Best Practices for Rust Concurrency

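- Prefer RwLock for Read‑Heavy Scenarios: Allows multiple readers while still protecting writes.

- Leverage crossbeam Channels: Passing messages lets threads exchange data instead of sharing state behind a lock.

- Use parking_lot for Faster Mutexes: Its lock() returns the guard directly (there is no lock poisoning), and it often has lower overhead than the standard Mutex.

- Run Static Analysis Tools: Clippy can flag some lock‑related pitfalls, such as holding a MutexGuard across an .await (clippy::await_holding_lock), but no lint catches every deadlock.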

- Document Thread‑Pool Usage: Clearly annotate which crates spawn their own pools to avoid accidental nesting.
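The channel advice can be applied directly to the control loop: instead of logging inline, hand each scan to a dedicated logging thread. The sketch uses the standard library's bounded std::sync::mpsc::sync_channel rather than crossbeam, to stay dependency-free. offer_scan, spawn_logger, and the Scan alias are hypothetical. The sketch also assumes that dropping a scan when the queue is full is acceptable, so the 100 Hz loop never blocks on logging.

```rust
use std::sync::mpsc::{sync_channel, Receiver, SyncSender, TrySendError};
use std::thread;

/// Hypothetical stand-in for a LiDAR scan.
type Scan = Vec<f32>;

/// Called from the control loop: hand a scan to the logger without blocking.
/// Returns false if the queue is full (the scan is dropped) or the logger has exited.
fn offer_scan(tx: &SyncSender<Scan>, scan: Scan) -> bool {
    match tx.try_send(scan) {
        Ok(()) => true,
        Err(TrySendError::Full(_)) | Err(TrySendError::Disconnected(_)) => false,
    }
}

/// Logger thread: drains the queue until every sender is dropped and
/// returns how many scans it handled. Real code would call the recorder here.
fn spawn_logger(rx: Receiver<Scan>) -> thread::JoinHandle<usize> {
    thread::spawn(move || {
        let mut logged = 0;
        for scan in rx {
            let _points = scan.len(); // placeholder for the actual logging work
            logged += 1;
        }
        logged
    })
}
```

Create the pair with sync_channel(4) (an arbitrary bound). Pass the SyncSender to the control loop and give the Receiver to spawn_logger. try_send keeps the loop's timing independent of the logger. If every scan must be kept, a blocking send or an unbounded channel trades that guarantee for back-pressure or memory growth.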

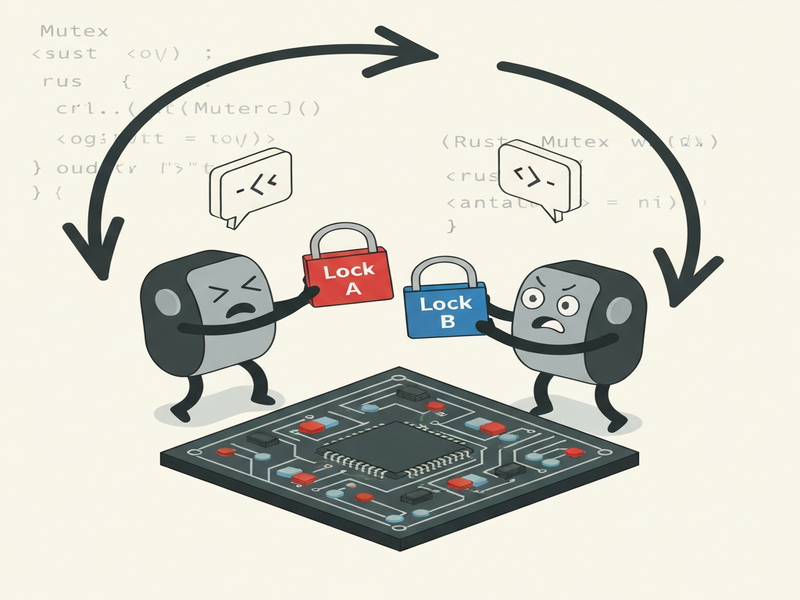

Visualizing the Deadlock Scenario

How UBOS Helps You Avoid Similar Pitfalls

When building AI‑enhanced services, the UBOS platform overview provides built‑in observability and workflow orchestration that surface lock contention before it becomes a production issue. For startups looking for rapid prototyping, the UBOS for startups program includes pre‑configured templates such as the AI SEO Analyzer and AI Article Copywriter, which demonstrate safe concurrency patterns out of the box.

For SMBs, the UBOS solutions for SMBs include a Workflow automation studio that lets you visually map critical sections and automatically inject heartbeat monitors similar to the one used in the robot case study.

Enterprises can benefit from the Enterprise AI platform by UBOS, which integrates with OpenAI ChatGPT and Chroma DB to provide real‑time analytics on thread‑pool health, lock wait times, and resource utilization.

If you need to expose your Rust services via messaging platforms, the Telegram integration on UBOS offers a secure bridge that respects Rust’s ownership model, preventing accidental cross‑thread data sharing that could re‑introduce deadlocks.

Developers looking to add voice capabilities can explore the ElevenLabs AI voice integration, which runs in its own isolated thread pool, demonstrating best‑in‑class separation of concerns.

Key Takeaways for Rust Developers

Understanding the interaction between Mutexes and Rayon’s work‑stealing scheduler is essential for any high‑performance Rust application. The main lessons from the deadlock incident are:

- Never hold a lock while calling code that hands work to another thread pool (such as Rayon’s) whose tasks may need the same lock.

- Keep critical sections as short as possible and release locks before expensive operations.

- Instrument your system with lightweight watchdogs to detect stalls early.

- Audit third‑party crates for hidden concurrency models.

- Leverage platforms like UBOS to gain visibility into thread‑pool health and lock contention.

By applying these practices, you can avoid the frustrating “robot freezes at iteration 1615” scenario and keep your Rust services running smoothly at scale.

Ready to Strengthen Your Rust Concurrency?

If you’re building a mission‑critical system and want built‑in safeguards against deadlocks, explore the UBOS pricing plans and start a free trial today. Our AI marketing agents can also help you promote your high‑performance services to the right audience.

Stay ahead of concurrency bugs—let UBOS handle the heavy lifting while you focus on delivering value.