- Updated: November 12, 2025

Maya1: Open‑Source 3B Text‑to‑Speech Voice Model Runs on a Single GPU – A Generative AI Breakthrough

The Emergence of Maya1: Redefining Open Source Text-to-Speech Technology

Maya1 is an open-source text-to-speech (TTS) model with 3 billion parameters, and its release marks a significant milestone for open voice synthesis. Running on a single GPU, the model delivers expressive, controllable speech, which makes it both capable and accessible.

Technical Overview of Maya1

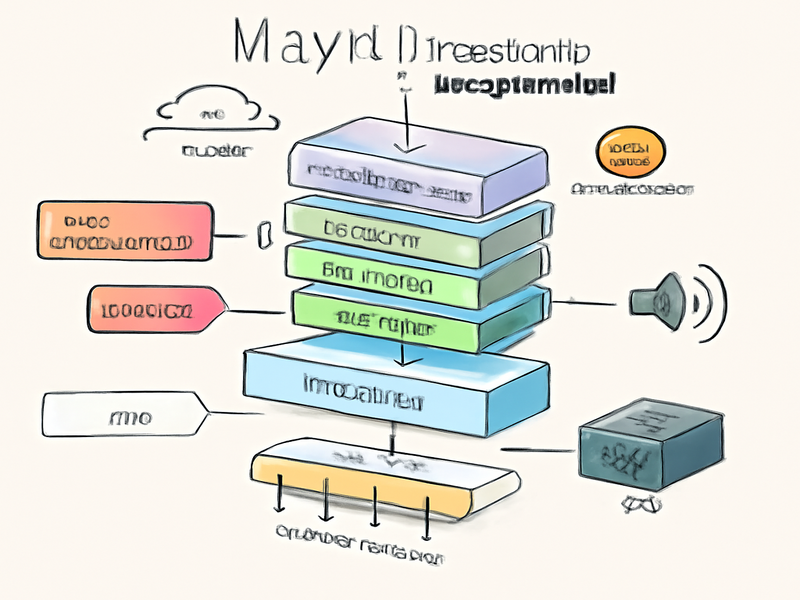

Maya1 is a voice model designed to capture the nuances of human emotion and to support detailed voice design. Its architecture is a decoder-only transformer with a Llama-style backbone. Rather than predicting raw waveforms, Maya1 predicts tokens from a neural audio codec called SNAC, which allows efficient generation and streaming of 24 kHz mono audio.
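Because the model emits codec tokens rather than audio samples, its output is a flat sequence of integers that has to be regrouped before a codec decoder can turn it into sound. The sketch below illustrates that regrouping under an assumed layout of 7 tokens per frame spread across three codebook levels (1 coarse, 2 mid, 4 fine). The frame size, the slot order, and the name unpack_frames are illustrative assumptions, not details confirmed by this article.

```python
from typing import List, Tuple

FRAME_SIZE = 7  # assumed tokens per frame (hypothetical layout)


def unpack_frames(tokens: List[int]) -> Tuple[List[int], List[int], List[int]]:
    """Split a flat token stream into coarse, mid, and fine code lists."""
    # Ignore a trailing partial frame, which cannot be decoded on its own.
    usable = len(tokens) - len(tokens) % FRAME_SIZE
    coarse: List[int] = []
    mid: List[int] = []
    fine: List[int] = []
    for start in range(0, usable, FRAME_SIZE):
        frame = tokens[start:start + FRAME_SIZE]
        # Assumed slot order: [coarse, mid, fine, fine, mid, fine, fine]
        coarse.append(frame[0])
        mid.extend([frame[1], frame[4]])
        fine.extend([frame[2], frame[3], frame[5], frame[6]])
    return coarse, mid, fine
```

Each level would then be handed to the codec decoder as its own sequence; a real implementation would also need to map model vocabulary IDs back to codebook indices, which is omitted here.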

The model supports real-time streaming, making it suitable for interactive agents, games, podcasts, and live content. More than 20 inline emotion tags, written directly in the input text, let users fine-tune expressiveness and voice characteristics.
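Streaming a token-based model generally means decoding audio in small groups of frames as tokens arrive, rather than waiting for the full utterance. A minimal sketch of that buffering step follows; the function name stream_chunks and the default frame and chunk sizes are hypothetical choices for illustration, not values taken from the Maya1 reference code.

```python
from typing import Iterable, Iterator, List


def stream_chunks(token_stream: Iterable[int], frame_size: int = 7,
                  frames_per_chunk: int = 4) -> Iterator[List[int]]:
    """Yield groups of whole frames as soon as enough tokens have arrived."""
    chunk_len = frame_size * frames_per_chunk
    buffer: List[int] = []
    for token in token_stream:
        buffer.append(token)
        if len(buffer) == chunk_len:
            yield buffer
            buffer = []
    # At end of stream, flush any complete frames and drop a partial one.
    tail = len(buffer) - len(buffer) % frame_size
    if tail:
        yield buffer[:tail]
```

Smaller chunks lower the time to first audio but increase per-chunk decoding overhead, so the chunk size is a latency and throughput trade-off.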

Training Data and Pipeline

Maya1’s training process combines extensive pretraining on an internet-scale English speech corpus with fine-tuning on a curated dataset of studio recordings. This dual approach ensures broad acoustic coverage and natural coarticulation. The model’s training pipeline includes:

- 24 kHz mono resampling with loudness normalization

- Voice activity detection with silence trimming

- Forced alignment using Montreal Forced Aligner

- Text and audio deduplication techniques

- SNAC encoding with efficient token packing
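Three of the steps above can be sketched in plain Python to show what they do to the data. This is a simplified illustration, not the Maya1 pipeline: RMS scaling stands in for perceptual loudness normalization, a fixed amplitude threshold stands in for a real voice activity detector, and deduplication is shown on transcripts only. All function names and default values are assumptions.

```python
import hashlib
import math
from typing import List, Sequence, Tuple


def rms_normalize(samples: Sequence[float], target_rms: float = 0.1) -> List[float]:
    """Scale samples to a target RMS level (simple stand-in for loudness normalization)."""
    if not samples:
        return []
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0.0:
        return list(samples)  # pure silence: nothing to scale
    gain = target_rms / rms
    return [s * gain for s in samples]


def trim_silence(samples: Sequence[float], threshold: float = 0.01) -> List[float]:
    """Drop leading and trailing samples whose magnitude is below the threshold."""
    voiced = [i for i, s in enumerate(samples) if abs(s) >= threshold]
    if not voiced:
        return []
    return list(samples[voiced[0]:voiced[-1] + 1])


def dedupe_by_text(items: Sequence[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Keep the first (clip_id, transcript) pair for each normalized transcript."""
    seen = set()
    kept = []
    for clip_id, text in items:
        # Normalize case and whitespace before hashing so near-identical text collides.
        normalized = " ".join(text.lower().split())
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key not in seen:
            seen.add(key)
            kept.append((clip_id, text))
    return kept
```

A production pipeline would typically rely on dedicated audio tooling for resampling, loudness measurement, and voice activity detection; the point here is only the order and intent of the operations.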

By adopting an XML-style attribute wrapper for voice descriptions, Maya1 allows developers to describe voices in free-form text, akin to briefing a voice actor. This flexibility enhances the model’s ability to generalize and produce high-quality speech.
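To make the idea concrete, here is a minimal sketch of how a prompt might be assembled from a voice description and a script. The exact wrapper syntax, the attribute name description, and the helper build_prompt are assumptions for illustration; the model's own documentation is the authority on the real format.

```python
def build_prompt(description: str, text: str) -> str:
    """Wrap a free-form voice description in an XML-style attribute, then append the script."""
    # Swap double quotes for single quotes so the description cannot
    # close the attribute early (assumed wrapper syntax).
    safe = description.replace('"', "'").strip()
    return f'<description="{safe}"> {text.strip()}'


prompt = build_prompt(
    "Female voice in her 30s, warm and calm, neutral American accent",
    "Welcome back. Let's pick up where we left off.",
)
```

Keeping the description in natural language, rather than a fixed set of speaker IDs, is what lets the same prompt shape cover voices the model was never explicitly trained to name.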

Deployment and Inference

Maya1 is designed for straightforward deployment. The reference implementation runs on a single GPU with 16 GB or more of VRAM, such as an A100 or RTX 4090. The SNAC decoder is integrated into the pipeline to support real-time streaming, with a latency target below 100 milliseconds.
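A quick back-of-the-envelope calculation shows why a 3 billion parameter model fits comfortably in 16 GB. The sketch assumes 16-bit weights (2 bytes per parameter), which is an assumption about precision rather than something stated here, and it counts weights only; the KV cache, activations, and the codec decoder need additional memory.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Estimate the memory needed for model weights alone, in GiB."""
    return num_params * bytes_per_param / 1024**3


# 3 billion parameters at 2 bytes each is roughly 5.6 GiB of weights.
estimate = weight_memory_gib(3e9)
```

Under that assumption, well over half of a 16 GB card remains available for caching and batching.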

Maya1’s deployment script, vllm_streaming_inference.py, supports Automatic Prefix Caching and multi-GPU scaling. Additionally, the model is available in GGUF quantized variants for lighter deployments, ensuring accessibility across various computational environments.

Key Takeaways

Maya1 sets a new standard for open-source text-to-speech models. Its ability to predict SNAC neural codec tokens, rather than raw waveforms, allows for efficient and expressive voice synthesis. The model’s design, which includes real-time streaming support and over 20 emotion tags, makes it a valuable tool for developers seeking to create dynamic audio experiences.

Furthermore, Maya1’s release under the Apache 2.0 license ensures that it remains accessible for both commercial and local use, fostering innovation and collaboration within the AI community.

Conclusion

The introduction of Maya1 represents a significant advancement in the field of text-to-speech technology. By providing a robust, open-source solution that combines expressive voice synthesis with efficient deployment, Maya1 empowers developers to explore new possibilities in AI-driven audio applications.

As the AI landscape continues to evolve, tools like Maya1 will undoubtedly play a crucial role in shaping the future of voice technology. By embracing open-source innovation, we can unlock the full potential of AI-driven solutions and create a more connected, expressive world.