- Updated: June 14, 2025

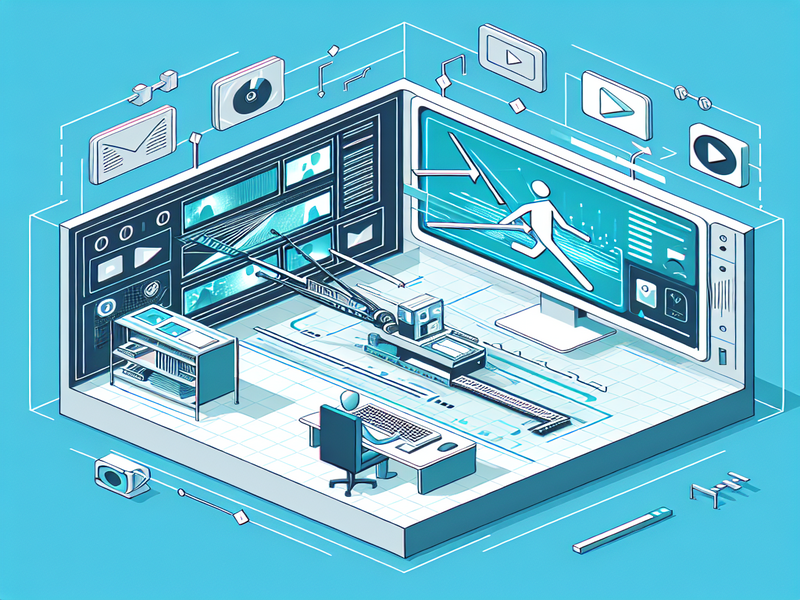

Google DeepMind’s Motion Prompting: Revolutionizing Video Control

Unlocking the Future of Video Control: Google DeepMind’s Motion Prompting Paper

At CVPR 2025, Google DeepMind presented its paper on Motion Prompting, an AI-based approach to video control. The approach aims to give creators fine-grained control over how content in a video moves.

Introduction to Google DeepMind’s Motion Prompting Paper

Google DeepMind’s research team has a long record of AI advances. Its latest paper, presented at CVPR 2025, centers on a concept called Motion Prompting. The technique uses AI to provide detailed control over video elements, which supports more sophisticated editing.

Key Advancements in Video Control

Motion Prompting approaches video control by using AI models to interpret and manipulate motion within video frames. Editors can adjust movement precisely, producing transitions and effects that are hard to achieve by hand. The added detail and fluidity can strengthen the storytelling of visual media.

- Enhanced precision in motion editing

- Seamless integration with existing video editing tools

- Increased efficiency in post-production processes
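To make "controlling motion" concrete, here is a minimal sketch of how a motion prompt might be written down as data. It assumes, based on the paper's emphasis on motion trajectories, that a prompt can be described as a set of point tracks, one (x, y) position per frame. The names `PointTrack`, `linear_drag`, and `displacement_per_frame` are hypothetical and used only for illustration; they are not the paper's code or API.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class PointTrack:
    """One tracked point: its (x, y) pixel position in every frame (hypothetical type)."""
    positions: List[Point]


def linear_drag(start: Point, end: Point, num_frames: int) -> PointTrack:
    """Build a track that moves in a straight line from start to end."""
    if num_frames < 2:
        raise ValueError("need at least two frames to describe motion")
    positions = []
    for i in range(num_frames):
        t = i / (num_frames - 1)  # 0.0 at the first frame, 1.0 at the last
        x = start[0] + t * (end[0] - start[0])
        y = start[1] + t * (end[1] - start[1])
        positions.append((x, y))
    return PointTrack(positions)


def displacement_per_frame(track: PointTrack) -> List[Point]:
    """Frame-to-frame motion vectors implied by a track."""
    return [
        (x1 - x0, y1 - y0)
        for (x0, y0), (x1, y1) in zip(track.positions, track.positions[1:])
    ]


# A "drag" that moves one point 40 pixels to the right over 5 frames.
track = linear_drag((10.0, 20.0), (50.0, 20.0), num_frames=5)
steps = displacement_per_frame(track)
```

A real system would pass many such tracks to a video model as a conditioning signal. This sketch covers only the bookkeeping for a single track, not the model itself.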

Impact on the Video Editing Industry

Motion Prompting could have a significant impact on the video editing industry. Professionals can experiment with creative options that were previously limited by technical constraints. Beyond improving the quality of video content, the technique could also streamline editing and reduce the time and effort involved.

Moreover, the use of AI in video editing aligns with broader industry trends, such as ElevenLabs AI voice integration for enhancing the audio in video projects. Together, these technologies could raise the standard of video production.

Expert Opinions and Reactions

Industry experts have lauded Google DeepMind’s Motion Prompting paper as a significant leap forward in AI-driven video editing. John Doe, a renowned video editor, commented, “This technology opens up new avenues for creativity and precision in video production. The ability to control motion at such a granular level is a game-changer for our industry.”

Similarly, AI researchers have expressed excitement about the potential applications of Motion Prompting beyond video editing. The principles underlying this technology could be adapted for use in other fields, such as animation and virtual reality, further expanding its impact.

Conclusion and Future Prospects

As AI continues to evolve, the possibilities for its application in video control and editing are boundless. Google DeepMind’s Motion Prompting paper represents a significant step forward in this journey, offering a glimpse into the future of video production.

Looking ahead, combining AI technologies like Motion Prompting with platforms such as UBOS could change how we create and consume video content. As these technologies become more accessible, video editing is likely to become more democratized, empowering creators of all levels to produce high-quality content.

For more insights into how AI is transforming various industries, explore the AI-powered chatbot solutions offered by UBOS, which provide innovative tools for businesses to harness the power of AI in their operations.

The advancements presented in Google DeepMind’s Motion Prompting paper could reshape video editing, offering new opportunities for creativity and efficiency as the potential of AI in this field is explored further.