- Updated: February 27, 2026

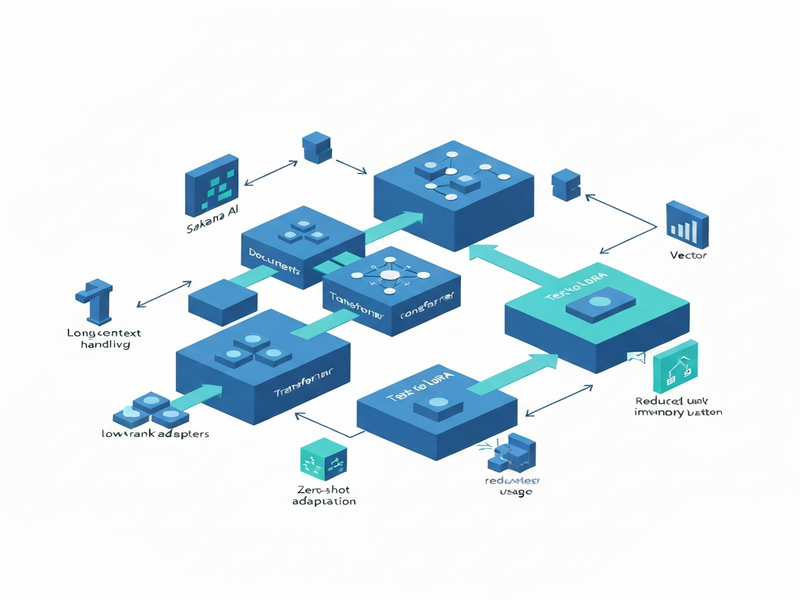

Sakana AI Unveils Doc‑to‑LoRA & Text‑to‑LoRA Hypernetworks for Instant LLM Adaptation

Sakana AI has introduced two hypernetwork methods, Doc‑to‑LoRA and Text‑to‑LoRA, that let large language models (LLMs) instantly internalize long documents and adapt to new tasks from zero‑shot natural‑language prompts.

According to the announcement, these methods cut latency and memory usage because the source document no longer has to sit in the context window and fill the KV cache, while accuracy stays high across a range of benchmarks. Doc‑to‑LoRA compresses an entire document into LoRA weights, so the LLM can retrieve and apply that knowledge without re‑reading the source text. Text‑to‑LoRA translates a concise natural‑language task description into a LoRA adapter, which allows rapid, zero‑shot task adaptation.
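As a rough illustration of the idea, the sketch below shows a toy hypernetwork that maps an embedding (of a document or a task description) to low‑rank LoRA factors `A` and `B`, which are then added to a frozen weight matrix. Everything in it is an assumption made for illustration: the names `ToyHyperNetwork`, `generate_lora`, and `apply_lora`, the single linear projection, and the tiny dimensions are hypothetical and do not describe Sakana AI's actual architecture or API.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


class ToyHyperNetwork:
    """Hypothetical hypernetwork: one linear map from an embedding to LoRA factors."""

    def __init__(self, embed_dim, d_in, d_out, rank, seed=0):
        rng = random.Random(seed)
        self.d_in, self.d_out, self.rank = d_in, d_out, rank
        n_out = rank * d_in + d_out * rank  # flattened A followed by flattened B
        self.proj = [
            [rng.gauss(0.0, 0.02) for _ in range(embed_dim)] for _ in range(n_out)
        ]

    def generate_lora(self, embedding):
        """One forward pass: embedding -> (A, B), A is rank x d_in, B is d_out x rank."""
        flat = [sum(w * e for w, e in zip(row, embedding)) for row in self.proj]
        split = self.rank * self.d_in
        a_flat, b_flat = flat[:split], flat[split:]
        A = [a_flat[i * self.d_in:(i + 1) * self.d_in] for i in range(self.rank)]
        B = [b_flat[i * self.rank:(i + 1) * self.rank] for i in range(self.d_out)]
        return A, B


def apply_lora(W, A, B, scale=1.0):
    """Return W + scale * (B @ A); the frozen base weight W is not modified."""
    delta = matmul(B, A)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(W, delta)
    ]


# Toy usage: the embedding stands in for an encoded document or task description.
d_in, d_out, rank, embed_dim = 8, 6, 2, 4
W = [[0.0] * d_in for _ in range(d_out)]
hyper = ToyHyperNetwork(embed_dim, d_in, d_out, rank)
task_embedding = [0.5, -1.0, 0.25, 2.0]
A, B = hyper.generate_lora(task_embedding)
W_adapted = apply_lora(W, A, B)
```

The point of the sketch is that producing the adapter is a single forward pass through the hypernetwork, with no gradient steps on the base model. A real system would presumably use a learned text encoder and generate factors for many layers at once.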

Key innovations include:

- Instant adaptation with negligible compute overhead.

- Significant reduction in KV‑cache size, enabling longer context windows.

- Cross‑modal knowledge transfer capabilities.
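To make the memory trade‑off behind the list above concrete, here is some back‑of‑the‑envelope arithmetic comparing the KV‑cache footprint of keeping a document in context with the size of a LoRA adapter. The model dimensions used (32 layers, 8 KV heads, head dimension 128, hidden size 4096, rank 8, two adapted matrices per layer, 16‑bit values) are hypothetical and chosen only for illustration; they are not figures from the announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    # Keys and values (the factor 2) are stored per layer, per KV head, per token.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


def lora_bytes(num_layers, adapted_per_layer, d_in, d_out, rank, bytes_per_value=2):
    # Each adapted matrix stores A (rank x d_in) and B (d_out x rank).
    return num_layers * adapted_per_layer * rank * (d_in + d_out) * bytes_per_value


# Hypothetical model and document sizes, for illustration only.
kv = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=32_000)
lora = lora_bytes(num_layers=32, adapted_per_layer=2, d_in=4096, d_out=4096, rank=8)

print(f"KV cache for a 32,000-token document: {kv / 2**20:.0f} MiB")
print(f"LoRA adapter: {lora / 2**20:.0f} MiB")
```

Under these assumed numbers the cache for the document comes to 4000 MiB and the adapter to 8 MiB. The comparison only covers storage; it says nothing about how much of the document's content a given rank can actually retain.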

These advances open new possibilities for real‑time AI assistants, on‑device inference, and scalable multi‑task systems.

Read the full original announcement on MarkTechPost.