- Updated: March 10, 2026

Large Language Models: A Mathematical Formulation

Large language models (LLMs) are neural networks that predict the next token in a sequence using a mathematically grounded probabilistic framework, enabling them to generate coherent text, answer questions, and perform a wide range of language‑understanding tasks.

1. Introduction

Since the release of transformer‑based architectures, large language models have reshaped natural‑language processing (NLP) research and commercial AI deployment. The arXiv paper “Large Language Models: A Mathematical Formulation” provides a rigorous foundation that connects tokenization, probability theory, and optimization. This article translates that theory into a practical guide for researchers, AI engineers, data scientists, and graduate students who need both depth and actionable insight.

Throughout the discussion we will reference the UBOS homepage and related UBOS services that illustrate how LLM theory can be turned into production‑ready solutions.

2. Overview of Large Language Models

LLMs are deep neural networks, typically built on the decoder‑only transformer architecture, that learn a conditional probability distribution P(token_i | token_{1:i‑1}). By scaling parameters (often billions) and training data (terabytes of text), they capture the statistical regularities of human language.

- Self‑attention layers let each token attend to the other tokens in its context (in autoregressive models, only to earlier positions), providing context‑aware representations.

- Positional encodings preserve order information without recurrent connections.

- Layer normalization and residual connections stabilize training at massive scale.
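To make the attention component concrete, here is a minimal sketch of single‑head scaled dot‑product attention with a causal mask, written in plain Python. It is an illustration under simplifying assumptions, not a production layer: the toy input `x` is hypothetical, and the queries, keys, and values are all set to `x` itself rather than produced by learned projections.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Position i attends only to positions 0..i.
    """
    d = len(queries[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # Scores against the current and earlier positions only.
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d)
            for j in range(i + 1)
        ]
        w = softmax(scores)
        # Weighted sum of the visible value vectors.
        out = [
            sum(w[j] * values[j][k] for j in range(i + 1))
            for k in range(len(values[0]))
        ]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Hypothetical toy input: 3 positions, dimension 2; Q = K = V = x for brevity.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(x, x, x)
```

Each row of `weights` sums to one, and row `i` has exactly `i + 1` entries, which is how the mask shows up in this sketch.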

The UBOS platform overview demonstrates how these components can be assembled with drag‑and‑drop modules, allowing teams to prototype LLM‑powered services without writing low‑level code.

3. Tokenization and Sequence Encoding

Tokenization converts raw text into discrete symbols that the model can process. Modern LLMs rely on subword tokenizers such as Byte‑Pair Encoding (BPE) or SentencePiece, which balance vocabulary size and out‑of‑vocabulary handling.

Key properties:

- Deterministic mapping: The same string always yields the same token sequence.

- Reversibility: Tokens can be detokenized back to the original text (up to whitespace normalization).

- Statistical efficiency: Frequent morphemes become single tokens, reducing sequence length.
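The merge procedure behind BPE can be sketched in a few lines. The example below is a simplified illustration: the toy corpus and its frequencies are hypothetical, and real tokenizers add byte‑level fallbacks, special tokens, and pre‑tokenization rules that are omitted here.

```python
from collections import Counter

def most_frequent_pair(words):
    """Count adjacent symbol pairs across the corpus and return the most common one."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return max(pairs, key=pairs.get) if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

# Hypothetical toy corpus: word (as a tuple of characters) -> frequency.
corpus = {tuple("low"): 5, tuple("lower"): 2, tuple("newest"): 6, tuple("widest"): 3}

merges = []
for _ in range(3):
    pair = most_frequent_pair(corpus)
    merges.append(pair)
    corpus = merge_pair(corpus, pair)
```

Because the learned merge list is applied in a fixed order, the same input string always produces the same token sequence, and frequent fragments such as "est" collapse into a single symbol.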

In practice, UBOS integrates tokenizers directly into its Web app editor, letting developers experiment with custom vocabularies for domain‑specific corpora.

4. Next‑Token Prediction Architecture

The core training objective of an LLM is next‑token prediction. Given a context C = (t_1, …, t_{n‑1}), the model outputs a probability distribution over the vocabulary for the next token t_n:

P(t_n | C) = softmax (W_o · h_n + b_o)

where h_n is the hidden state after processing the context, W_o and b_o are output projection parameters. The loss function is the negative log‑likelihood (cross‑entropy) summed over all positions.
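As a rough illustration of the formula and loss above, the following sketch computes the softmax output and the summed negative log‑likelihood in plain Python. The matrix `W_o`, bias `b_o`, hidden states, and targets are hypothetical toy values; in a real model the hidden states come from the transformer stack.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_distribution(h, W_o, b_o):
    """P(t_n | C) = softmax(W_o . h_n + b_o) for one hidden state h."""
    logits = [sum(w * x for w, x in zip(row, h)) + b for row, b in zip(W_o, b_o)]
    return softmax(logits)

def sequence_nll(hidden_states, targets, W_o, b_o):
    """Negative log-likelihood (cross-entropy) summed over all positions."""
    return sum(
        -math.log(next_token_distribution(h, W_o, b_o)[t])
        for h, t in zip(hidden_states, targets)
    )

# Hypothetical values: vocabulary of 3 tokens, hidden size 2.
W_o = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
b_o = [0.0, 0.0, 0.0]
hidden_states = [[2.0, 0.0], [0.0, 0.0]]
targets = [0, 2]

probs = next_token_distribution(hidden_states[0], W_o, b_o)
loss = sequence_nll(hidden_states, targets, W_o, b_o)
```

The second hidden state is all zeros, so its distribution is uniform and contributes log 3 to the loss, which makes the result easy to check by hand.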

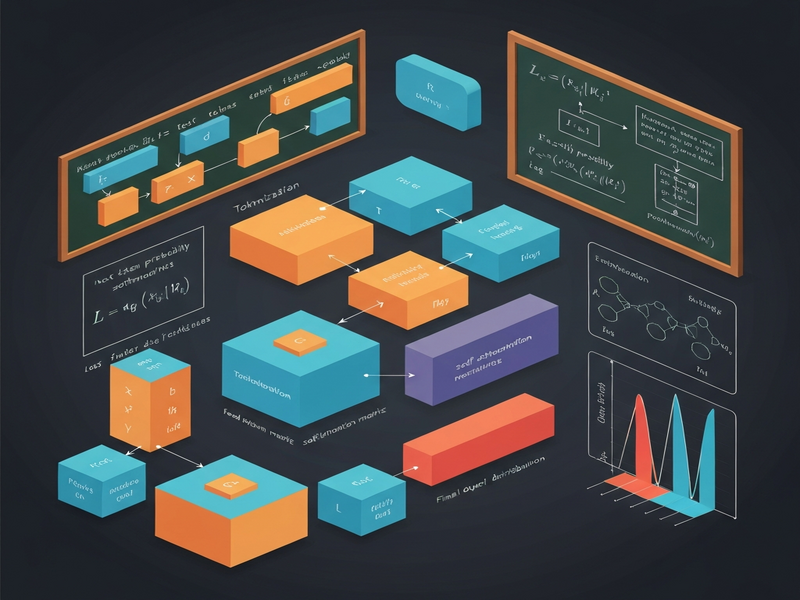

The following illustration (placeholder) visualizes this pipeline:

UBOS’s AI marketing agents leverage this architecture to generate personalized copy in real time, demonstrating the practical impact of next‑token prediction.

5. Learning from Data: Training Objectives and Optimization

Training an LLM is a massive stochastic optimization problem. The standard objective is to minimize the expected cross‑entropy loss over a data distribution D:

L(θ) = 𝔼_{(C,t)∼D}[ -log P_θ(t | C) ]

where θ denotes all model parameters. Optimizers such as AdamW, combined with learning‑rate warm‑up and cosine decay, are the de‑facto standard.
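A learning‑rate schedule with warm‑up and cosine decay might look like the sketch below. The function name `lr_at_step` and the numbers used (peak rate, warm‑up length, total steps) are illustrative assumptions rather than recommended settings.

```python
import math

def lr_at_step(step, peak_lr, warmup_steps, total_steps, min_lr=0.0):
    """Linear warm-up to peak_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        # Ramp up linearly; the last warm-up step reaches peak_lr.
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

# Hypothetical settings, for illustration only.
schedule = [
    lr_at_step(s, peak_lr=3e-4, warmup_steps=10, total_steps=100)
    for s in range(101)
]
```

The warm‑up phase avoids large, noisy updates while the optimizer's moment estimates are still poorly calibrated, and the cosine phase lowers the rate smoothly toward `min_lr`.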

Regularization techniques:

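- Weight decay to limit over‑fitting; AdamW applies it directly to the weights (decoupled from the gradient), which differs from plain L2 regularization under adaptive optimizers.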

- Dropout on attention heads and feed‑forward layers.

- Gradient clipping to stabilize large‑batch training.
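Two of these techniques, gradient clipping and weight decay, can be sketched together in a single optimizer step. The code below is a simplified scalar‑list version of an AdamW update, with hypothetical parameter and gradient values. It is meant to show where clipping and decoupled weight decay enter, not to replace a framework optimizer.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale gradients so their global L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    scale = min(1.0, max_norm / (norm + 1e-12))
    return [g * scale for g in grads], norm

def adamw_step(params, grads, m, v, step, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=0.01):
    """One AdamW update; `step` starts at 1."""
    new_params, new_m, new_v = [], [], []
    for p, g, m_i, v_i in zip(params, grads, m, v):
        m_i = beta1 * m_i + (1 - beta1) * g          # first-moment estimate
        v_i = beta2 * v_i + (1 - beta2) * g * g      # second-moment estimate
        m_hat = m_i / (1 - beta1 ** step)            # bias correction
        v_hat = v_i / (1 - beta2 ** step)
        p = p - lr * weight_decay * p                # decoupled weight decay
        p = p - lr * m_hat / (math.sqrt(v_hat) + eps)
        new_params.append(p)
        new_m.append(m_i)
        new_v.append(v_i)
    return new_params, new_m, new_v

# Hypothetical parameters and raw gradients.
params = [1.0, -1.0]
raw_grads = [3.0, 4.0]

grads, raw_norm = clip_by_global_norm(raw_grads, max_norm=1.0)
params, m, v = adamw_step(params, grads, m=[0.0, 0.0], v=[0.0, 0.0], step=1)
```

Note that the decay term multiplies the parameter itself and never passes through the adaptive scaling, which is what distinguishes this update from adding an L2 penalty to the loss.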

For teams that lack GPU clusters, UBOS offers a partner program that provides managed training pipelines, automatically handling data sharding, checkpointing, and hyper‑parameter tuning.

6. Deployment Scenarios and Use Cases

Once trained, LLMs can be served in several ways:

- API endpoint: Stateless inference behind a REST or gRPC interface.

- Embedded inference: On‑device models for latency‑critical applications.

- Hybrid pipelines: Combining retrieval‑augmented generation (RAG) with LLMs for factual accuracy.
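The hybrid pattern can be illustrated with a toy retrieval step that selects context before prompting a model. Everything here is a stand‑in: `embed` is a bag‑of‑words counter rather than a learned encoder, the documents are hypothetical, and the final call to a language model is left out.

```python
import math
from collections import Counter

def embed(text):
    """Stand-in embedding: bag-of-words counts (a real system would use a learned encoder)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    na = math.sqrt(sum(x * x for x in a.values()))
    nb = math.sqrt(sum(x * x for x in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Prepend the retrieved passages to the question."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"

# Hypothetical knowledge base.
documents = [
    "adamw applies decoupled weight decay",
    "byte pair encoding merges frequent symbol pairs",
    "cosine decay lowers the learning rate over time",
]
prompt = build_prompt("how does byte pair encoding work", documents)
```

The resulting `prompt` would then be passed to the model, so the answer can draw on the retrieved passage rather than on parametric memory alone.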

Real‑world examples include:

- AI SEO Analyzer – uses LLMs to audit website content and suggest keyword improvements.

- AI Article Copywriter – generates long‑form drafts from brief outlines.

- AI Video Generator – transforms script text into storyboard‑ready video assets.

The Enterprise AI platform by UBOS provides auto‑scaling inference clusters, role‑based access control, and audit logging, making it suitable for regulated industries.

7. Mathematical Insights: Information Theory, Probability, and Optimization

The paper frames LLMs through three lenses:

7.1 Information Theory

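Cross‑entropy equals the KL divergence between the true data distribution P_data and the model distribution P_θ plus the entropy of P_data. Because that entropy does not depend on θ, minimizing cross‑entropy is equivalent to minimizing the KL divergence. A lower cross‑entropy also means fewer bits are needed per token to encode text under the model, which is the sense in which training compresses linguistic structure.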
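The link between cross‑entropy and KL divergence can be checked numerically. The short sketch below uses two hypothetical next‑token distributions over a three‑token vocabulary and verifies the standard identity: cross‑entropy = entropy of the data distribution + KL divergence.

```python
import math

def entropy(p):
    """H(P) in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    """H(P, Q) in nats."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q):
    """D_KL(P || Q) in nats."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical next-token distributions over a 3-token vocabulary.
p_data = [0.7, 0.2, 0.1]
p_model = [0.5, 0.3, 0.2]

# Should be zero up to floating-point error.
gap = cross_entropy(p_data, p_model) - (entropy(p_data) + kl_divergence(p_data, p_model))
```

Since `entropy(p_data)` is fixed by the data, any reduction in cross‑entropy during training is a reduction in the KL term.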

7.2 Probabilistic Modeling

LLMs instantiate a high‑dimensional categorical distribution. The softmax output can be interpreted as a Gibbs distribution:

P_θ(t | C) = exp(−E_θ(t, C)) / Z(C)

where E_θ is an energy function derived from the transformer’s hidden states, and Z(C) is the partition function (normalization constant). This perspective connects LLMs to energy‑based models and opens avenues for contrastive learning.
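One way to see the correspondence is to take the energy of each token to be the negative of its logit. The sketch below, with hypothetical logit values, checks that the Gibbs form then reproduces the softmax output and that adding a constant to every energy leaves the distribution unchanged.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def gibbs(energies):
    """P(t | C) = exp(-E(t, C)) / Z(C)."""
    weights = [math.exp(-e) for e in energies]
    z = sum(weights)  # partition function Z(C)
    return [w / z for w in weights]

# Hypothetical logits for a 3-token vocabulary.
logits = [2.0, 0.5, -1.0]
energies = [-z for z in logits]          # assumption: E(t, C) = -logit_t
shifted = [e + 10.0 for e in energies]   # constant shift cancels in Z(C)

p_softmax = softmax(logits)
p_gibbs = gibbs(energies)
p_shifted = gibbs(shifted)
```

Low‑energy tokens receive high probability, and only energy differences matter, because any constant offset is absorbed by the partition function.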

7.3 Optimization Geometry

Some recent work suggests that the loss landscapes of large‑scale transformers contain wide, flat minima that tend to correlate with better generalization. Techniques such as stochastic weight averaging (SWA) and sharpness‑aware minimization (SAM) explicitly seek these regions.
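The averaging step at the heart of SWA is simple enough to sketch directly. The example below covers only the running average over checkpoints, using hypothetical two‑parameter checkpoints. The learning‑rate schedule that SWA is usually paired with is not shown.

```python
def update_swa(swa_weights, new_weights, n_averaged):
    """Fold one more checkpoint into the running average of weights."""
    updated = [
        s + (w - s) / (n_averaged + 1)
        for s, w in zip(swa_weights, new_weights)
    ]
    return updated, n_averaged + 1

# Hypothetical checkpoints of a 2-parameter model collected late in training.
checkpoints = [[1.0, 4.0], [2.0, 2.0], [3.0, 0.0]]

# Start from the first checkpoint, then average in the rest.
swa, n = list(checkpoints[0]), 1
for weights in checkpoints[1:]:
    swa, n = update_swa(swa, weights, n)
```

The averaged weights sit between the individual checkpoints, which is the intuition for why SWA tends to land in flatter regions than any single iterate.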

UBOS’s Workflow automation studio lets data scientists chain preprocessing, training, and post‑training analysis steps, making it easy to experiment with SWA or SAM without leaving the UI.

8. Challenges: Accuracy, Efficiency, Robustness

Despite impressive capabilities, LLMs face three major challenges:

- Hallucination: Generating plausible‑looking but factually incorrect statements.

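- Compute cost: Per‑token inference compute grows roughly linearly with parameter count, and self‑attention cost grows quadratically with context length, so latency and energy consumption rise quickly at scale.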

- Adversarial vulnerability: Small input perturbations can trigger toxic or biased outputs.

Mitigation strategies include retrieval‑augmented generation (RAG), quantization (8‑bit or 4‑bit), and reinforcement learning from human feedback (RLHF). UBOS offers built‑in Chroma DB integration for vector‑based retrieval, reducing hallucination by grounding responses in a curated knowledge base.
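Of these strategies, quantization is the easiest to illustrate in isolation. The following sketch applies symmetric absmax quantization to 8‑bit integer codes. The weight values are hypothetical, and practical schemes typically quantize per channel or per block rather than over a whole tensor at once.

```python
def quantize_int8(weights):
    """Symmetric absmax quantization of a list of floats to int8 codes."""
    absmax = max(abs(w) for w in weights)
    scale = absmax / 127.0 if absmax > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]

# Hypothetical weights from one layer.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The reconstruction error per weight is bounded by half the scale, which is why outlier weights that inflate `absmax` hurt precision for every other weight in the group.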

For voice‑enabled assistants, the ElevenLabs AI voice integration provides high‑fidelity speech synthesis while allowing developers to enforce content filters at the audio generation stage.

9. Future Directions and Research Opportunities

The mathematical formulation opens several promising research avenues:

- Neural architecture search (NAS) for transformers: Automating the discovery of efficient attention patterns.

- Probabilistic programming integration: Embedding LLMs within Bayesian inference pipelines.

- Energy‑based fine‑tuning: Directly optimizing the energy function for domain adaptation.

- Multimodal extensions: Joint modeling of text, image, and audio using shared latent spaces.

The UBOS templates for quick start include a “Multimodal Fusion” starter kit that combines a language model with the AI Image Generator, enabling rapid prototyping of research ideas.

10. Conclusion

The arXiv paper provides a concise yet powerful mathematical lens through which to view LLMs—as probabilistic energy models optimized by large‑scale stochastic gradient descent. Understanding this foundation empowers researchers to push the boundaries of accuracy, efficiency, and safety.

Whether you are building a startup product, an enterprise‑grade AI service, or a graduate‑level research project, the concepts outlined here are directly applicable. Leverage UBOS’s ecosystem, from the pricing plans that fit any budget to the UBOS blog for the latest tutorials, to accelerate your journey from theory to deployment.

“A solid mathematical grounding is the compass that guides practical AI innovation.” – UBOS Research Team

Ready to turn theory into a working product? Explore UBOS for startups and start building your next LLM‑powered solution today.