- Updated: January 31, 2026

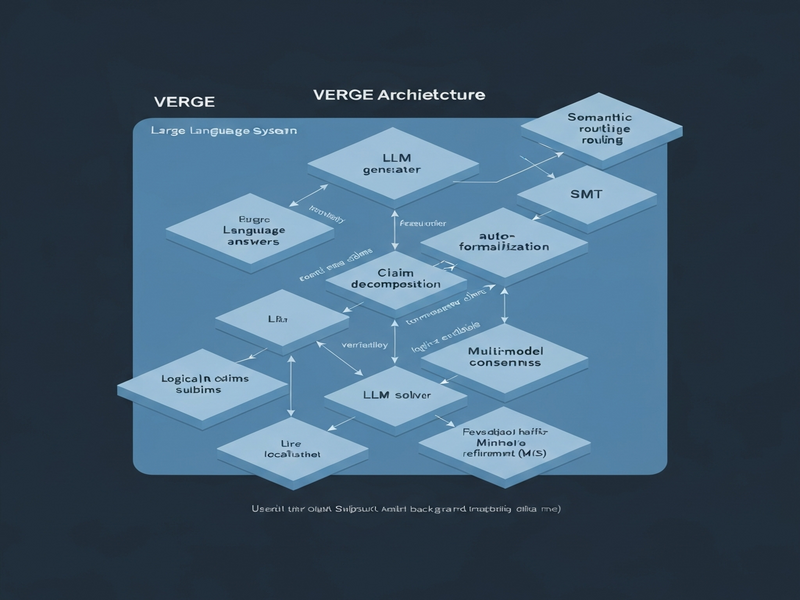

VERGE: Formal Refinement and Guidance Engine for Verifiable LLM Reasoning

Large Language Models (LLMs) have demonstrated remarkable fluency, yet their logical correctness remains a critical challenge for high‑stakes applications. The VERGE framework introduces a neurosymbolic approach that tightly integrates LLMs with Satisfiability Modulo Theories (SMT) solvers, delivering verification‑guided answers through iterative refinement.

Key innovations of VERGE include:

- Multi‑model consensus via formal semantic equivalence checking, ensuring logical alignment across candidate answers.

- Semantic routing that directs claims to the appropriate verification strategy—symbolic solvers for logical claims and LLM ensembles for commonsense reasoning.

- Precise error localization using Minimal Correction Subsets (MCS) to pinpoint exact claim fragments that require revision.
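To make the first and third ideas concrete, here is a minimal sketch of semantic equivalence checking and Minimal Correction Subset enumeration over propositional claims. It is an illustration under stated assumptions, not VERGE's implementation: exhaustive truth-table enumeration stands in for the SMT solver, and every name (`equivalent`, `satisfiable`, `minimal_correction_subsets`, the claim labels `c1` to `c3`) is hypothetical.

```python
from itertools import combinations, product
from typing import Callable, Dict, Iterator, List, Sequence

Assignment = Dict[str, bool]
Formula = Callable[[Assignment], bool]


def assignments(variables: Sequence[str]) -> Iterator[Assignment]:
    """Yield every truth assignment over the given variables."""
    for values in product([False, True], repeat=len(variables)):
        yield dict(zip(variables, values))


def equivalent(f: Formula, g: Formula, variables: Sequence[str]) -> bool:
    """Two formulas are equivalent if they agree under every assignment."""
    return all(f(a) == g(a) for a in assignments(variables))


def satisfiable(formulas: Sequence[Formula], variables: Sequence[str]) -> bool:
    """Brute-force consistency check (a stand-in for an SMT solver call)."""
    return any(all(f(a) for f in formulas) for a in assignments(variables))


def minimal_correction_subsets(
    claims: Dict[str, Formula], variables: Sequence[str]
) -> List[set]:
    """Return every minimal set of claim names whose removal restores consistency."""
    names = list(claims)
    found: List[set] = []
    # Visiting candidates by increasing size guarantees minimality.
    for size in range(len(names) + 1):
        for removed in combinations(names, size):
            removed_set = set(removed)
            # A superset of a known correction set cannot be minimal.
            if any(mcs <= removed_set for mcs in found):
                continue
            kept = [claims[n] for n in names if n not in removed_set]
            if satisfiable(kept, variables):
                found.append(removed_set)
    return found


# Equivalence: "p implies q" versus its contrapositive and its converse.
implication = lambda a: (not a["p"]) or a["q"]
contrapositive = lambda a: (not (not a["q"])) or (not a["p"])
converse = lambda a: (not a["q"]) or a["p"]

# Error localization: c1 and c2 conflict, c3 is unrelated to the conflict.
claims = {
    "c1": lambda a: a["p"],
    "c2": lambda a: not a["p"],
    "c3": lambda a: a["q"],
}
mcs_list = minimal_correction_subsets(claims, ["p", "q"])
```

In this toy case the correction sets single out `c1` or `c2` and never `c3`, which is the sense in which MCS narrows revision to the claims that actually cause the inconsistency. Brute-force enumeration is exponential in the number of variables, so a real system would hand these checks to a solver.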

The framework classifies each claim by its logical status, aggregates the verification signals into a single confidence score, and iteratively refines the response until it meets predefined acceptance criteria. Experiments with the GPT‑OSS‑120B model show an average performance uplift of 18.7% at convergence on standard reasoning benchmarks.
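The classify, aggregate, and refine cycle can be sketched roughly as follows. This is a toy illustration, not the framework's actual scoring: the status labels, their weights, the 0.8 threshold, and the `verify` and `revise` callbacks are all assumptions made for the example, since this summary does not specify them.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical status labels and weights, chosen only for illustration.
STATUS_WEIGHT = {"verified": 1.0, "unverified": 0.5, "contradicted": 0.0}


@dataclass
class Claim:
    text: str
    status: str = "unverified"


def confidence(claims: List[Claim]) -> float:
    """Mean per-claim weight; an empty answer scores zero."""
    if not claims:
        return 0.0
    return sum(STATUS_WEIGHT[c.status] for c in claims) / len(claims)


def refine(
    claims: List[Claim],
    verify: Callable[[str], str],
    revise: Callable[[Claim], Claim],
    threshold: float = 0.8,
    max_rounds: int = 5,
) -> Tuple[List[Claim], float, int]:
    """Verify, score, and revise until the score meets the threshold."""
    score, rounds_used = 0.0, 0
    for round_no in range(1, max_rounds + 1):
        rounds_used = round_no
        # Classify every claim, then aggregate into one score.
        claims = [Claim(c.text, verify(c.text)) for c in claims]
        score = confidence(claims)
        if score >= threshold or round_no == max_rounds:
            break
        # Revise only the claims that failed verification.
        claims = [c if c.status == "verified" else revise(c) for c in claims]
    return claims, score, rounds_used


# Toy stand-ins for the verifier and the LLM revision step.
KNOWN_TRUE = {"2 + 2 = 4", "3 * 3 = 9"}
FIXES = {"3 * 3 = 6": "3 * 3 = 9"}


def toy_verify(text: str) -> str:
    return "verified" if text in KNOWN_TRUE else "contradicted"


def toy_revise(claim: Claim) -> Claim:
    return Claim(FIXES.get(claim.text, claim.text))


final_claims, final_score, rounds = refine(
    [Claim("2 + 2 = 4"), Claim("3 * 3 = 6")], toy_verify, toy_revise
)
```

Here the first round scores 0.5 because one of the two claims is contradicted; after that claim is revised, the second round scores 1.0 and the loop stops. The `max_rounds` cap is a design choice in this sketch so that a claim that can never be verified does not loop forever.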

For a deeper dive into the VERGE methodology, explore our internal research page and related resources.

Read the full article on our blog for implementation details and future directions.