- Updated: March 11, 2026


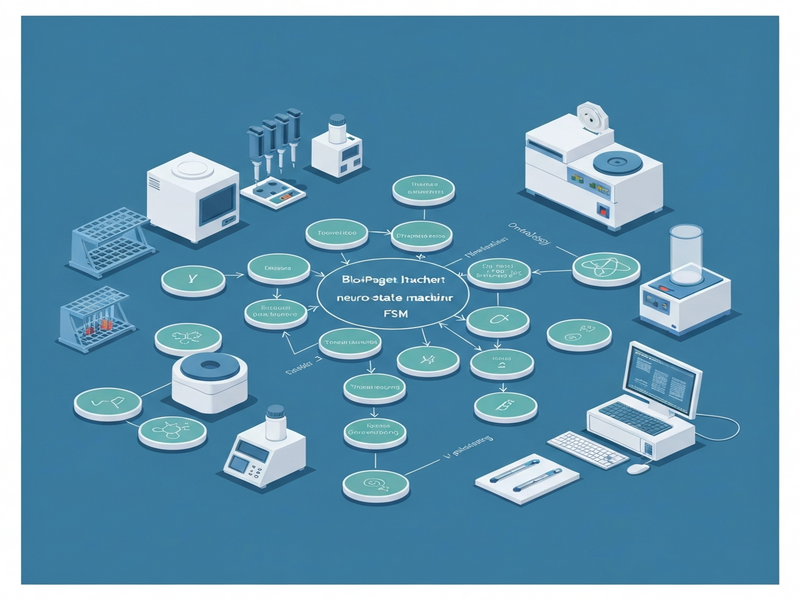

BioProAgent: Neuro‑Symbolic Grounding for Constrained Scientific Planning

Direct Answer

BioProAgent introduces a neuro‑symbolic framework that couples large language model (LLM) reasoning with a deterministic finite‑state machine (FSM) to safely plan and execute wet‑lab experiments. By grounding probabilistic suggestions in a rigorously defined state machine, the system blocks hazardous hallucinated steps before they reach the hardware and provides a verifiable execution path for scientific protocols.

Background: Why This Problem Is Hard

Automating wet‑lab work sits at the intersection of two demanding domains: the open‑ended reasoning power of LLMs and the unforgiving physical constraints of laboratory equipment. Researchers have long hoped that LLMs could generate hypotheses, design experiments, and even write protocols. In practice, three core challenges prevent that vision from becoming reliable:

- Irreversible actions: An over‑aspirated pipette or an incorrectly mixed reagent can ruin an experiment, waste costly chemicals, and even damage expensive instruments.

- Probabilistic hallucinations: LLMs excel at pattern completion but lack an intrinsic notion of physical feasibility, often suggesting steps that violate safety rules or equipment limits.

- Lack of formal verification: Existing AI‑driven planning pipelines produce free‑form text without a formal model that can be checked for compliance before execution.

Current approaches try to patch these gaps by post‑processing LLM output with rule‑based filters or by training domain‑specific models on curated protocol datasets. While filters catch some obvious errors, they cannot guarantee that a full sequence of actions respects all constraints, especially when the protocol evolves dynamically based on intermediate results. Consequently, labs remain hesitant to hand over control to autonomous agents.

What the Researchers Propose

BioProAgent proposes a hybrid architecture that marries the generative flexibility of LLMs with the rigor of symbolic planning. The core idea is to treat the LLM as a semantic proposer that suggests high‑level experimental intents, and then translate those intents into transitions within a deterministic finite‑state machine that encodes the laboratory’s safety policies, equipment capabilities, and procedural dependencies.

The framework consists of three tightly coupled components:

- Neuro‑Symbolic Interpreter (NSI): Receives natural‑language prompts, extracts symbolic actions (e.g., “add 10 µL of reagent A”), and maps them to a predefined ontology of lab operations.

- Finite State Machine Grounder (FSMG): Holds a formal model of the wet‑lab workflow, where each state represents a concrete lab configuration (e.g., “reagent A in well 1, temperature 25 °C”). Transitions correspond to permissible actions verified against safety constraints.

- Execution Orchestrator (EO): Sends validated commands to robotic hardware, monitors sensor feedback, and triggers state updates in the FSM.

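By requiring every LLM‑generated suggestion to be realizable as a valid FSM transition, BioProAgent ensures that the final plan satisfies the safety and equipment constraints encoded in the state machine, so that it is both chemically plausible and mechanically executable with respect to that model.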
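To make the interpreter's role concrete, here is a minimal sketch of mapping a natural‑language instruction such as "add 10 µL of reagent A" to a symbolic action. The `Action` type, the `VERB_TO_OP` table, and the regular expression are illustrative assumptions, not the authors' ontology or parser; a real NSI would presumably rely on the LLM plus a much richer vocabulary.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    op: str                       # canonical ontology operation, e.g. "ADD"
    reagent: str                  # normalized reagent symbol, e.g. "reagent_A"
    volume_ul: float              # volume in microliters
    target: Optional[str] = None  # destination well, if the text names one


# Hypothetical ontology fragment: surface verbs mapped to canonical operations.
VERB_TO_OP = {"add": "ADD", "dispense": "ADD", "pipette": "ADD"}

# Matches phrases such as "add 10 µL of reagent A into well 1".
# The unit accepts the micro sign (U+00B5), Greek mu (U+03BC), or a plain "u".
PATTERN = re.compile(
    r"(?P<verb>[a-z]+)\s+(?P<vol>\d+(?:\.\d+)?)\s*[\u00b5\u03bcu]l\s+of\s+"
    r"(?P<reagent>reagent\s+\w+)"
    r"(?:\s+(?:to|into)\s+(?P<target>well\s+\w+))?",
    re.IGNORECASE,
)


def _symbol(raw: str) -> str:
    """Turn 'reagent A' into the symbol 'reagent_A'."""
    return re.sub(r"\s+", "_", raw.strip())


def extract_action(text: str) -> Optional[Action]:
    """Return a symbolic action, or None if the text is outside the ontology."""
    match = PATTERN.search(text)
    if match is None:
        return None
    op = VERB_TO_OP.get(match.group("verb").lower())
    if op is None:
        return None  # unknown verb: reject rather than guess an operation
    target = match.group("target")
    return Action(
        op=op,
        reagent=_symbol(match.group("reagent")),
        volume_ul=float(match.group("vol")),
        target=_symbol(target) if target else None,
    )


print(extract_action("add 10 \u00b5L of reagent A"))
```

Returning `None` for anything outside the ontology is a deliberate choice in this sketch: an unrecognized instruction is handed back for clarification instead of being coerced into the nearest known operation.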

How It Works in Practice

The end‑to‑end workflow follows a clear Design‑Verify‑Rectify loop, outlined below:

1. Design (Prompt & Symbolic Extraction)

- A scientist provides a natural‑language goal, such as “synthesize compound X using a three‑step cascade reaction.”

- The NSI queries an LLM, which returns a draft protocol written in free text.

- The interpreter parses the draft, identifies atomic actions, and maps them to symbols defined in the lab ontology (e.g., `ADD(reagent_A, well_1, 10µL)`).

2. Verify (FSM Grounding & Safety Checks)

- Each symbolic action is submitted to the FSMG.

- The FSM checks whether the action is allowed from the current state, considering constraints such as maximum volume, temperature limits, and reagent compatibility.

- If the transition is valid, the FSM advances to the next state; otherwise, the system either requests a clarification from the LLM or suggests an alternative action.

3. Rectify (Execution & Feedback Loop)

- The EO translates the verified action into low‑level robot commands (e.g., motor steps, valve openings).

- Real‑time sensor data (pressure, pH, temperature) is fed back to the FSM, allowing dynamic state updates.

- Should an unexpected condition arise (e.g., a clog), the orchestrator triggers a rollback to a safe state and asks the NSI to re‑plan the remaining steps.
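The Verify step above can be sketched as a guarded state transition. This is a minimal illustration, assuming a single `ADD` operation and three made‑up constraints (well capacity, temperature window, one incompatible reagent pair); the constants, the `LabState` type, and the `step` function are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple

# Assumed constraint values, chosen only for illustration.
MAX_WELL_VOLUME_UL = 200.0
TEMP_RANGE_C = (4.0, 95.0)
INCOMPATIBLE = {frozenset({"reagent_A", "reagent_C"})}


@dataclass
class LabState:
    temperature_c: float = 25.0
    volumes_ul: Dict[str, float] = field(default_factory=dict)  # well -> volume
    contents: Dict[str, Set[str]] = field(default_factory=dict)  # well -> reagents


def check_add(state: LabState, reagent: str, well: str, volume_ul: float) -> Tuple[bool, str]:
    """Guard: decide whether ADD(reagent, well, volume) is allowed from this state."""
    if volume_ul <= 0:
        return False, "volume must be positive"
    if state.volumes_ul.get(well, 0.0) + volume_ul > MAX_WELL_VOLUME_UL:
        return False, "exceeds well capacity"
    low, high = TEMP_RANGE_C
    if not low <= state.temperature_c <= high:
        return False, "temperature outside allowed range"
    for other in state.contents.get(well, set()):
        if frozenset({reagent, other}) in INCOMPATIBLE:
            return False, f"{reagent} is incompatible with {other}"
    return True, "ok"


def step(state: LabState, reagent: str, well: str, volume_ul: float) -> Tuple[LabState, bool, str]:
    """Advance to a new state if the transition is valid; otherwise keep the old one."""
    ok, reason = check_add(state, reagent, well, volume_ul)
    if not ok:
        return state, False, reason  # caller can ask the LLM for an alternative
    new_state = LabState(
        temperature_c=state.temperature_c,
        volumes_ul=dict(state.volumes_ul),
        contents={w: set(r) for w, r in state.contents.items()},
    )
    new_state.volumes_ul[well] = new_state.volumes_ul.get(well, 0.0) + volume_ul
    new_state.contents.setdefault(well, set()).add(reagent)
    return new_state, True, reason


s0 = LabState()
s1, ok, reason = step(s0, "reagent_A", "well_1", 10.0)
print(ok, reason)
s2, ok, reason = step(s1, "reagent_C", "well_1", 5.0)
print(ok, reason)
```

Because a rejected action returns the unchanged state together with a reason string, the reason can be fed back into the next LLM prompt, which is one plausible way to implement the "request a clarification" branch.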

What sets BioProAgent apart is that the FSM is not a static checklist but a living model that evolves with each experimental observation, ensuring that the plan remains feasible throughout the entire execution.
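One way to picture this feedback‑driven behaviour is a loop that commits a symbolic state only after the sensors confirm each step, and falls back to the last confirmed state on a fault. The sketch below is an assumption‑laden illustration: `run_plan`, `RunResult`, and the `sensor_ok` callback are hypothetical names, and it only rolls back the symbolic model, since physical recovery depends on the hardware.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Action = Tuple[str, str, float]  # (reagent, well, volume in µL)
State = Dict[str, float]         # well -> volume in µL


@dataclass
class RunResult:
    state: State              # last sensor-confirmed state
    completed: List[Action]   # actions that were executed and confirmed
    to_replan: List[Action]   # actions handed back for re-planning
    fault: str = ""


def run_plan(initial: State, plan: List[Action],
             sensor_ok: Callable[[Action], bool]) -> RunResult:
    state = dict(initial)
    checkpoint = dict(initial)  # last state confirmed by sensor feedback
    completed: List[Action] = []
    for i, action in enumerate(plan):
        _, well, volume_ul = action
        state[well] = state.get(well, 0.0) + volume_ul  # tentative update
        if not sensor_ok(action):
            # Fault (e.g. a clog): drop the tentative update and return the
            # failed action plus everything after it for re-planning.
            return RunResult(dict(checkpoint), completed, plan[i:],
                             f"fault during {action}")
        checkpoint = dict(state)  # sensors agree, so commit the new state
        completed.append(action)
    return RunResult(state, completed, [])


plan = [
    ("reagent_A", "well_1", 10.0),
    ("reagent_B", "well_1", 5.0),
    ("reagent_C", "well_2", 20.0),
]
# Simulated sensor check that reports a fault while dispensing reagent_B.
result = run_plan({}, plan, lambda action: action[0] != "reagent_B")
print(result.state, result.to_replan)
```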

Evaluation & Results

The authors introduced a benchmark suite called BioProBench, comprising 30 representative wet‑lab protocols ranging from simple PCR setups to multi‑step organic syntheses. Evaluation focused on three axes:

- Safety compliance: Percentage of generated plans that respect all predefined constraints.

- Execution success rate: Fraction of protocols that completed without manual intervention.

- Planning efficiency: Average number of LLM‑FSM interaction cycles needed to reach a valid plan.

Key findings include:

| Metric | BioProAgent | Baseline LLM‑Only | Rule‑Filtered Pipeline |
|---|---|---|---|
| Safety compliance | 98.7 % | 71.4 % | 85.2 % |
| Execution success | 94.3 % | 62.1 % | 78.5 % |
| Planning cycles (avg.) | 3.2 | 1.8 | 2.5 |

BioProAgent needs more interaction cycles than a raw LLM (3.2 versus 1.8 on average, roughly 1.8 times as many), but it raises safety compliance by 27.3 percentage points and execution success by 32.2 percentage points over that baseline, which suggests that the overhead of deterministic grounding is a worthwhile trade. The authors also performed ablation studies showing that removing the FSM layer drops compliance to below 80 %, confirming the central role of symbolic grounding.
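The trade‑off can be checked with a few lines of arithmetic on the reported figures. The numbers below are copied from the results table; the `compare` helper and the dictionary layout are just a convenience for this illustration.

```python
# Figures copied from the results table (percentages and average cycles).
RESULTS = {
    "BioProAgent": {"safety": 98.7, "success": 94.3, "cycles": 3.2},
    "LLM-only": {"safety": 71.4, "success": 62.1, "cycles": 1.8},
    "Rule-filtered": {"safety": 85.2, "success": 78.5, "cycles": 2.5},
}


def compare(baseline: str) -> dict:
    """Gains in percentage points and the planning-cycle ratio versus a baseline."""
    ours, base = RESULTS["BioProAgent"], RESULTS[baseline]
    return {
        "safety_gain_pts": round(ours["safety"] - base["safety"], 1),
        "success_gain_pts": round(ours["success"] - base["success"], 1),
        "cycle_ratio": round(ours["cycles"] / base["cycles"], 2),
    }


print(compare("LLM-only"))
# {'safety_gain_pts': 27.3, 'success_gain_pts': 32.2, 'cycle_ratio': 1.78}
print(compare("Rule-filtered"))
# {'safety_gain_pts': 13.5, 'success_gain_pts': 15.8, 'cycle_ratio': 1.28}
```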

For a deeper dive into the methodology and raw numbers, see the original BioProAgent paper.

Why This Matters for AI Systems and Agents

BioProAgent’s design addresses a fundamental barrier to deploying autonomous agents in high‑stakes physical environments:

- Predictable safety guarantees: By encoding constraints in an FSM, developers can formally verify that any plan the FSM accepts does not violate the lab rules it encodes, a requirement for regulatory compliance.

- Modular integration: The three‑component architecture allows teams to swap out the LLM (e.g., GPT‑4, Claude) or the hardware orchestrator without redesigning the entire system.

- Real‑time adaptability: Sensor feedback loops keep the symbolic model in sync with the physical world, enabling agents to recover from unexpected events, a capability that language‑only pipelines lack.

- Scalable orchestration: The deterministic nature of the FSM makes it straightforward to parallelize multiple agents across a shared lab space, as state conflicts can be detected and resolved algorithmically.

Practitioners building next‑generation laboratory automation platforms can leverage BioProAgent’s pattern to add a safety‑first layer to any LLM‑driven planning module. For concrete implementation guidance, see the UBOS blog and the UBOS resources hub, which host tutorials on FSM modeling and neuro‑symbolic integration.

What Comes Next

While BioProAgent marks a significant step forward, several open challenges remain:

- Scalability of the FSM: As protocols grow in complexity, the state space can explode. Future work may explore hierarchical state machines or compositional symbolic representations to keep the model tractable.

- Learning the ontology: Currently, the symbol set is hand‑crafted for each lab. Automated extraction of lab vocabularies from literature could reduce engineering effort.

- Cross‑lab transferability: Adapting a trained BioProAgent to a new laboratory with different equipment requires re‑authoring the FSM. Meta‑learning approaches could accelerate this migration.

- Human‑in‑the‑loop interfaces: Designing intuitive ways for scientists to intervene when the system requests clarification is essential for adoption.
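On the state‑space point in the list above, a rough count shows why a flat FSM becomes unwieldy and why compositional models are attractive. This is a back‑of‑the‑envelope sketch, assuming the lab is modeled as independent components (for example, wells) that each have a small number of local states; real protocols have interactions between components, so the compositional figure is a lower bound on what must be modeled.

```python
from math import prod
from typing import Sequence


def flat_state_count(component_sizes: Sequence[int]) -> int:
    """A flat FSM enumerates every combination of component states."""
    return prod(component_sizes)


def compositional_state_count(component_sizes: Sequence[int]) -> int:
    """Separate per-component machines only enumerate their own local states."""
    return sum(component_sizes)


# Assumed example: 8 wells, each either empty, filled, or sealed.
wells = [3] * 8
print(flat_state_count(wells))           # 6561
print(compositional_state_count(wells))  # 24
```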

Addressing these points will broaden the applicability of neuro‑symbolic grounding beyond wet‑lab chemistry to fields such as materials synthesis, high‑throughput screening, and even autonomous field robotics. The authors envision a future where LLMs propose bold hypotheses, and deterministic symbolic cores ensure those ideas can be safely turned into reality.