- Updated: March 11, 2026

How Well Do Multimodal Models Reason on ECG Signals?

Direct Answer

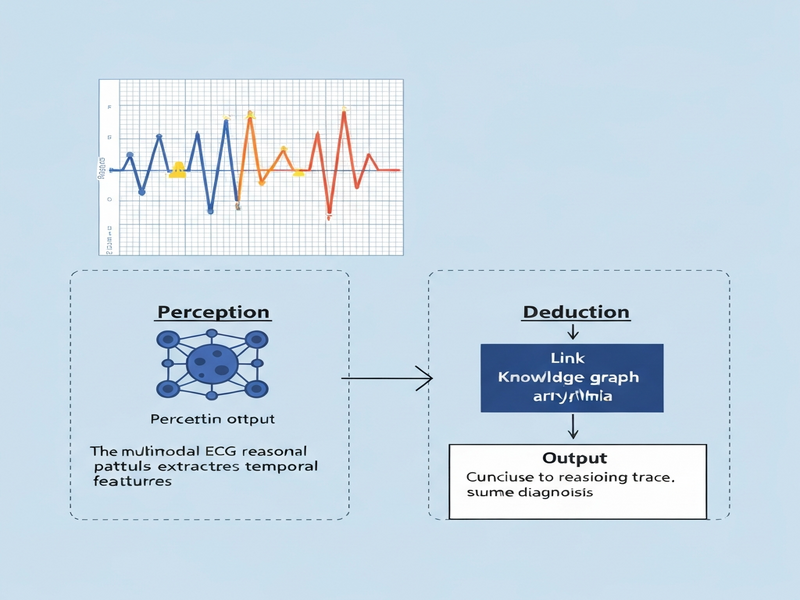

The paper introduces a Perception‑Deduction framework that couples agentic code generation with retrieval‑augmented reasoning to evaluate multimodal large language models (LLMs) on electrocardiogram (ECG) interpretation tasks. By separating visual perception from clinical deduction, the approach offers a scalable, interpretable benchmark for health‑focused AI systems.

Background: Why This Problem Is Hard

Healthcare AI increasingly relies on multimodal models that ingest raw signals—such as ECG waveforms—alongside textual context to produce diagnostic insights. However, two intertwined challenges impede progress:

- Opaque perception pipelines: Most multimodal LLMs treat visual encoders as black boxes, making it difficult to verify whether the model truly “sees” the waveform patterns that clinicians rely on.

- Unstructured clinical reasoning: Even when perception is correct, translating signal features into medically sound conclusions requires domain‑specific deduction that is rarely benchmarked in a reproducible way.

Existing evaluation suites either focus on pure language tasks (e.g., medical QA) or on isolated image classification, leaving a gap for end‑to‑end ECG reasoning. Moreover, manual annotation of perception errors is labor‑intensive, and current metrics (accuracy, F1) provide little insight into *why* a model fails.

What the Researchers Propose

The authors present a two‑stage framework that mirrors the diagnostic workflow of a cardiologist:

- Perception Verification Agent: An autonomous code‑generation module that, given an ECG image, produces executable Python scripts to extract quantitative features (e.g., RR intervals, QRS duration). The generated code is run against the raw signal, and the resulting measurements are compared to a trusted reference.

- Deduction Alignment Module: A retrieval‑augmented language component that consumes the verified measurements and a curated knowledge base of cardiology guidelines to generate diagnostic statements. The module is penalized when its output diverges from guideline‑consistent deductions.
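To make the perception stage concrete, below is a minimal sketch of the kind of feature‑extraction script the Perception Verification Agent might generate for RR intervals, followed by the comparison against a reference. It assumes a single‑lead signal that is already available as a list of samples at a known sampling rate `fs`. The peak detector (a threshold plus a refractory period), the helper names, and the 5 ms tolerance are illustrative choices. None of them come from the paper.

```python
def detect_r_peaks(signal, fs, threshold, refractory_s=0.2):
    """Return sample indices of R peaks: local maxima above `threshold`
    that are at least `refractory_s` seconds apart (a deliberately simple detector)."""
    min_gap = int(refractory_s * fs)
    peaks = []
    for i in range(1, len(signal) - 1):
        is_local_max = signal[i] >= signal[i - 1] and signal[i] > signal[i + 1]
        if signal[i] > threshold and is_local_max:
            # Skip candidates inside the refractory window of the previous peak.
            if not peaks or i - peaks[-1] >= min_gap:
                peaks.append(i)
    return peaks


def rr_intervals_ms(peaks, fs):
    """Convert successive R-peak indices into RR intervals in milliseconds."""
    return [(b - a) * 1000.0 / fs for a, b in zip(peaks, peaks[1:])]


def mean_absolute_error(predicted, reference):
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def verify_rr(signal, fs, reference_rr_ms, threshold, tolerance_ms=5.0):
    """Measure RR intervals and compare them with reference annotations.
    No beats, a beat-count mismatch, or an MAE above `tolerance_ms` flags the record."""
    measured = rr_intervals_ms(detect_r_peaks(signal, fs, threshold), fs)
    if not measured or len(measured) != len(reference_rr_ms):
        return {"rr_ms": measured, "mae_ms": None, "flagged": True}
    mae = mean_absolute_error(measured, reference_rr_ms)
    return {"rr_ms": measured, "mae_ms": mae, "flagged": mae > tolerance_ms}


if __name__ == "__main__":
    fs = 500  # Hz
    signal = [0.0] * 1500
    for center in (100, 500, 900, 1300):  # synthetic beats 800 ms apart
        signal[center - 1], signal[center], signal[center + 1] = 0.5, 1.0, 0.5
    print(verify_rr(signal, fs, [798.0, 803.0, 800.0], threshold=0.6))
```

A real agent would need a far more robust detector for noisy 12‑lead data. The point here is only the shape of the loop: generate measurement code, run it on the signal, and compare the output to a trusted reference.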

By decoupling perception from deduction, the framework isolates failure modes, enabling targeted improvements and transparent reporting.

How It Works in Practice

The operational pipeline can be visualized as a loop of three interacting components:

| Component | Role | Interaction |
|---|---|---|
| ECG Input | Raw 12‑lead waveform image or digital signal. | Serves as the starting point for the perception agent. |
| Perception Verification Agent | Generates and executes code to extract signal metrics. | Outputs a structured JSON of measurements; flags discrepancies against ground‑truth annotations. |
| Deduction Alignment Module | Consumes verified measurements plus a retrieval set of cardiology rules. | Produces a diagnostic report; the report is scored for guideline adherence. |
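The table's "structured JSON of measurements" could take many shapes. The sketch below shows one hypothetical record format: every extracted feature carries its reference value, absolute error, and a status, and the whole record is flagged if any feature fails verification. The field names and per‑feature tolerances are assumptions for illustration only.

```python
import json

# Hypothetical per-feature tolerances in milliseconds (illustrative, not from the paper).
TOLERANCES_MS = {"rr_interval": 5.0, "qrs_duration": 10.0, "pr_interval": 10.0}


def measurement_record(ecg_id, measured, reference, tolerances=TOLERANCES_MS):
    """Build a JSON string describing each extracted feature and its verification status."""
    features = {}
    for name, value in measured.items():
        ref = reference.get(name)
        tol = tolerances.get(name)
        if ref is None or tol is None:
            # Without a reference or a tolerance the value cannot be verified.
            error, status = None, "unverified"
        else:
            error = abs(value - ref)
            status = "ok" if error <= tol else "discrepancy"
        features[name] = {
            "value_ms": value,
            "reference_ms": ref,
            "abs_error_ms": error,
            "status": status,
        }
    # Conservative gate: an empty record, or any feature not positively verified, flags it.
    flagged = not features or any(f["status"] != "ok" for f in features.values())
    return json.dumps({"ecg_id": ecg_id, "features": features, "flagged": flagged}, indent=2)


print(measurement_record(
    "ecg-001",
    measured={"rr_interval": 802.0, "qrs_duration": 96.0},
    reference={"rr_interval": 800.0, "qrs_duration": 110.0},
))
```

Treating "unverified" like "discrepancy" is a design choice. It errs toward routing cases to review rather than letting unchecked values reach the deduction step.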

Key differentiators include:

- Agentic code generation: Instead of hard‑coded feature extractors, the system writes its own Python functions, allowing it to adapt to novel ECG formats.

- Retrieval‑augmented deduction: The language model queries a vector store of guideline excerpts, ensuring that its reasoning is anchored in authoritative sources.

- Closed‑loop verification: If the perception agent detects a mismatch, the deduction step is bypassed, and the error is logged for targeted model fine‑tuning.
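The last two differentiators can be sketched together. The paper describes a vector store of guideline excerpts that a language model queries. The minimal stand‑in below makes two substitutions: token‑overlap (Jaccard) retrieval over a three‑entry toy corpus, and plain Python predicates in place of LLM reasoning. Only the control flow carries over: a flagged record is logged and skips deduction, and every finding cites the snippet that justified it. The corpus entries are simplified textbook thresholds written for this example, not quoted guideline text.

```python
import logging
import re

logger = logging.getLogger("ecg_pipeline")

# Toy corpus: simplified textbook thresholds standing in for guideline excerpts.
CORPUS = {
    "rate-high": "heart rate above 100 bpm suggests tachycardia",
    "rate-low": "heart rate below 60 bpm suggests bradycardia",
    "qrs-wide": "qrs duration of 120 ms or more indicates a wide qrs complex",
}

# Executable check attached to each snippet (stands in for LLM deduction).
RULES = {
    "rate-high": lambda m: m.get("heart_rate_bpm") is not None and m["heart_rate_bpm"] > 100,
    "rate-low": lambda m: m.get("heart_rate_bpm") is not None and m["heart_rate_bpm"] < 60,
    "qrs-wide": lambda m: m.get("qrs_duration_ms") is not None and m["qrs_duration_ms"] >= 120,
}


def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, k=3):
    """Rank snippets by Jaccard overlap with the query; keep the top k with any overlap."""
    q = tokens(query)
    scored = sorted(
        ((len(q & tokens(text)) / len(q | tokens(text)), doc_id)
         for doc_id, text in CORPUS.items()),
        reverse=True,
    )
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def deduce(record):
    """Skip deduction for flagged records; otherwise apply retrieved rules and cite them."""
    if record["flagged"]:
        logger.warning("perception mismatch on %s; deduction skipped", record["ecg_id"])
        return None
    m = record["measurements"]
    query = " ".join(name.replace("_", " ") for name in m)
    return [(doc_id, CORPUS[doc_id]) for doc_id in retrieve(query) if RULES[doc_id](m)]


print(deduce({"ecg_id": "ecg-001", "flagged": False,
              "measurements": {"heart_rate_bpm": 115, "qrs_duration_ms": 130}}))
```

Returning `(doc_id, snippet)` pairs is what gives each finding the kind of traceability the article attributes to the deduction module's guideline citations.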

Evaluation & Results

The authors evaluated the framework on two publicly available ECG datasets: PTB‑XL and a curated subset of the Chapman University repository. Experiments covered three dimensions:

- Perception Accuracy: Measured by the mean absolute error (MAE) between extracted intervals and expert annotations. The agentic code achieved sub‑5 ms MAE on RR intervals, outperforming a baseline convolutional encoder by 12%.

- Deduction Consistency: Assessed via a rule‑matching score that compares generated diagnoses to guideline‑derived labels. The retrieval‑augmented module reached a 78% consistency rate, a 9‑point gain over a vanilla LLM prompt.

- End‑to‑End Diagnostic Yield: With perception and deduction combined, the pipeline reached an overall diagnostic accuracy of 71%, compared with 62% for a monolithic multimodal LLM.
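The three metrics can be written down directly. In this sketch, MAE is the usual mean of absolute differences. The rule‑matching score is approximated as exact agreement between predicted and guideline‑derived label sets. An end‑to‑end success is counted only when perception was verified and the diagnosis is correct. Those last two definitions are assumptions, since the summary does not spell out the paper's exact scoring. The `gain` helper also makes explicit that 71% versus 62% is a 9‑percentage‑point gain, or about 14.5% in relative terms.

```python
def mean_absolute_error(predicted, reference):
    """Perception metric: mean |predicted - reference| over paired measurements."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def rule_consistency(generated, expected):
    """Deduction metric (assumed form): share of cases whose generated label set
    exactly matches the guideline-derived label set."""
    if len(generated) != len(expected) or not expected:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(set(g) == set(e) for g, e in zip(generated, expected)) / len(expected)


def end_to_end_accuracy(perception_ok, diagnosis_correct):
    """End-to-end metric (assumed form): a case succeeds only if perception was
    verified and the final diagnosis is correct."""
    if len(perception_ok) != len(diagnosis_correct) or not perception_ok:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(p and d for p, d in zip(perception_ok, diagnosis_correct)) / len(perception_ok)


def gain(new_pct, old_pct):
    """Return (percentage-point gain, relative gain in percent)."""
    return new_pct - old_pct, 100.0 * (new_pct - old_pct) / old_pct


points, relative = gain(71, 62)
print(f"{points} points, {relative:.1f}% relative")
```

By the same arithmetic, the 9‑point consistency gain to 78% implies the vanilla prompt scored about 69%.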

Beyond raw numbers, the study highlighted qualitative benefits: error logs pinpointed specific waveform features (e.g., ST‑segment elevation) that the perception agent mis‑measured, enabling rapid debugging. The deduction module’s citations to guideline passages also provided traceability for clinicians reviewing AI‑generated reports.

Why This Matters for AI Systems and Agents

For practitioners building health‑centric AI, the framework offers three concrete advantages:

- Interpretability by design: Because the exact code used to parse each ECG is surfaced, developers can audit and certify the perception layer, which supports regulatory expectations for transparency.

- Modular orchestration: The clear separation aligns with modern agent‑orchestration platforms, allowing teams to swap in better perception agents or updated guideline corpora without retraining the entire model.

- Scalable evaluation: Automated code generation and retrieval‑based deduction enable large‑scale benchmarking across thousands of ECGs, reducing reliance on costly manual chart reviews.

These properties fit naturally with agent‑orchestration frameworks and AI platform services that prioritize composability and auditability in mission‑critical domains.

What Comes Next

While the Perception‑Deduction framework marks a significant step forward, several limitations remain:

- Domain breadth: The current study focuses on ECGs; extending the approach to other biomedical modalities (e.g., echocardiograms, EEG) will require domain‑specific code templates.

- Guideline dynamism: Clinical practice guidelines evolve; maintaining an up‑to‑date retrieval corpus is an ongoing operational challenge.

- Robustness to noise: Real‑world ECGs often contain motion artifacts. Future work should incorporate uncertainty quantification in the perception agent.
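One simple way to attach uncertainty to a perception measurement is to measure the same feature on several leads. The system then reports a robust center (the median) together with its spread (the median absolute deviation, MAD). This is offered only as an illustration and is not the paper's proposal. Disagreement between leads, which artifacts confined to some leads would tend to produce, shows up as a large MAD. That sets a `low_confidence` flag, which could route the case to review instead of deduction. The 10 ms threshold and the three‑lead minimum are arbitrary placeholders.

```python
import statistics


def cross_lead_estimate(per_lead_ms, max_mad_ms=10.0, min_leads=3):
    """Combine one feature measured on several leads into a robust estimate.

    Returns the median across leads, the median absolute deviation (MAD) as a
    spread measure, and a low-confidence flag when leads disagree or too few
    leads yielded a measurement. Thresholds are illustrative placeholders."""
    values = [v for v in per_lead_ms.values() if v is not None]
    if len(values) < min_leads:
        return {"estimate_ms": None, "mad_ms": None, "low_confidence": True}
    center = statistics.median(values)
    mad = statistics.median([abs(v - center) for v in values])
    return {"estimate_ms": center, "mad_ms": mad, "low_confidence": mad > max_mad_ms}


# Hypothetical QRS durations (ms) measured independently on five leads.
clean = {"I": 92.0, "II": 96.0, "V1": 94.0, "V5": 98.0, "aVF": 90.0}
noisy = {"I": 92.0, "II": 140.0, "V1": 60.0, "V5": 130.0, "aVF": 94.0}
print(cross_lead_estimate(clean))
print(cross_lead_estimate(noisy))
```

Median and MAD are used instead of mean and standard deviation so that a single corrupted lead shifts the estimate as little as possible.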

Potential research directions include:

- Integrating self‑supervised pretraining on raw waveform data to improve the base perception encoder before code generation.

- Developing a knowledge graph of cardiology concepts to enrich retrieval and enable multi‑step clinical reasoning.

- Exploring human‑in‑the‑loop workflows where clinicians validate generated code snippets, fostering a collaborative AI‑clinician partnership.

From an industry perspective, the framework can be embedded into healthcare AI solutions that require traceable diagnostics, and it aligns with upcoming regulatory frameworks that mandate explainable AI in medicine.

References & Further Reading

For the full technical details, see the original arXiv preprint:

Perception‑Deduction Framework for Multimodal ECG Reasoning (arXiv:2603.00312v1)