- Updated: March 10, 2026

Do Open-Vocabulary Detectors Transfer to Aerial Imagery? A Comparative Evaluation

Abstract: Open‑vocabulary object detection (OVD) enables zero‑shot recognition of novel categories through vision‑language models, achieving strong performance on natural images. However, its transferability to aerial imagery has remained unexplored. In this article we present the first systematic benchmark evaluating five state‑of‑the‑art OVD models on the LAE‑80C aerial dataset (3,592 images, 80 categories) under strict zero‑shot conditions.

Key Findings

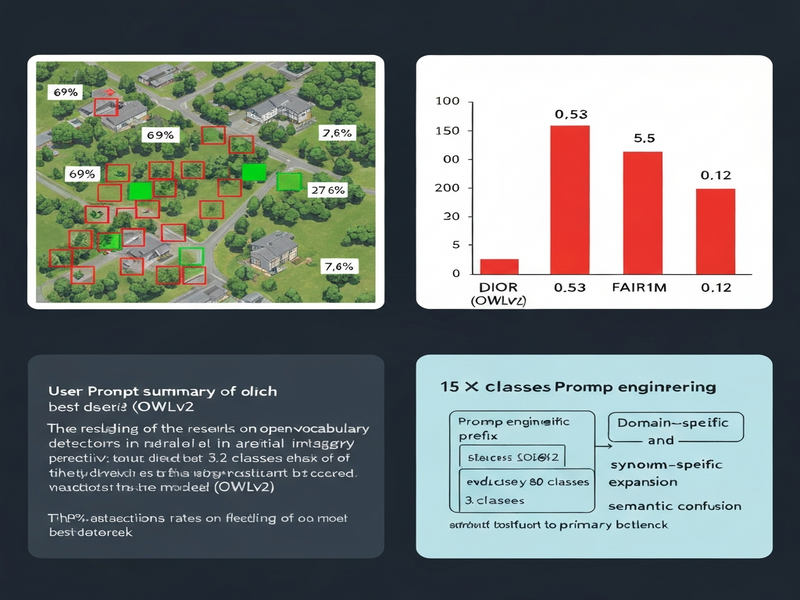

- Severe domain transfer failure: the best model (OWLv2) achieves only 27.6% F1‑score with a 69% false positive rate.

- Reducing the vocabulary from 80 classes to 3.2 classes on average yields a 15× improvement, indicating that semantic confusion, rather than visual localization, is the primary bottleneck.

- Prompt‑engineering strategies such as domain‑specific prefixing and synonym expansion provide negligible gains.

- Performance varies dramatically across datasets (F1 of 53% on DIOR versus 12% on FAIR1M), exposing brittleness to imaging conditions.
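To make the headline numbers concrete, here is a minimal sketch of how F1 and a false positive share can be computed from detection counts. It rests on assumptions not stated in the article: that the "false positive rate" means the fraction of predicted boxes that are wrong, FP / (TP + FP), and that matching predictions to ground truth has already been done. The function names and the counts are hypothetical and chosen only for illustration.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, recall, F1, and the share of detections that are false positives."""
    n_detections = tp + fp      # everything the model predicted
    n_ground_truth = tp + fn    # everything it should have found
    precision = tp / n_detections if n_detections else 0.0
    recall = tp / n_ground_truth if n_ground_truth else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    # Assumed reading of "false positive rate": FP / (TP + FP) = 1 - precision.
    fp_share = fp / n_detections if n_detections else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "fp_share": fp_share}


def implied_recall(f1: float, precision: float) -> float:
    """Solve F1 = 2PR / (P + R) for R, given F1 and precision P."""
    denom = 2 * precision - f1
    if denom <= 0:
        raise ValueError("F1 and precision are inconsistent")
    return f1 * precision / denom


# Hypothetical counts, not taken from the benchmark.
metrics = detection_metrics(tp=31, fp=69, fn=94)

# Under the assumed reading, a 69% false positive share means precision = 0.31.
# This recall value is derived arithmetic, not a figure reported in the article.
recall_estimate = implied_recall(f1=0.276, precision=0.31)
```

Under that reading, a 27.6% F1 combined with 31% precision would correspond to a recall of roughly 25%, which suggests the model both misses most objects and mislabels most of what it does detect.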

Methodology

We isolated semantic confusion from visual localization using three inference modes: Global, Oracle, and Single‑Category. The benchmark follows strict zero‑shot protocols, ensuring no fine‑tuning on aerial data.
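The article does not define the three modes in detail, so the sketch below is one plausible reading rather than the authors' implementation. It assumes Global queries the detector with the full category list, Oracle restricts the query to categories known to be present in the image, and Single-Category queries each present category on its own. `build_vocabularies` and its arguments are hypothetical names.

```python
from typing import List, Set


def build_vocabularies(
    mode: str, all_categories: List[str], present: Set[str]
) -> List[List[str]]:
    """Return the text-query vocabularies to run for one image.

    Each inner list is the vocabulary for one detector forward pass.
    """
    if mode == "global":
        # Assumed: every category competes at once (maximum semantic confusion).
        return [list(all_categories)]
    if mode == "oracle":
        # Assumed: only categories in the image's ground truth are queried together.
        return [[c for c in all_categories if c in present]]
    if mode == "single":
        # Assumed: one pass per present category, so no cross-category competition.
        return [[c] for c in all_categories if c in present]
    raise ValueError(f"unknown mode: {mode!r}")


# Toy category list for illustration; the benchmark itself uses 80 categories.
categories = ["airport", "bridge", "ship", "vehicle"]
in_image = {"ship", "bridge"}

global_vocab = build_vocabularies("global", categories, in_image)
oracle_vocab = build_vocabularies("oracle", categories, in_image)
single_vocab = build_vocabularies("single", categories, in_image)
```

Comparing scores across the three vocabularies, with the model weights held fixed, is what lets classification confusion be separated from localization quality: if results improve sharply as the vocabulary shrinks, the boxes were likely adequate and the labels were the problem.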

Implications for Practitioners

These results highlight the need for domain‑adaptive approaches when deploying OVD models in aerial applications such as remote sensing, surveillance, and environmental monitoring. Researchers should focus on reducing semantic ambiguity and improving cross‑domain generalization.

Stay tuned for upcoming updates on domain‑adaptive OVD solutions.