- Updated: January 30, 2026

Cross-Session Decoding of Neural Spiking Data via Task‑Conditioned Latent Alignment

Direct Answer

The paper introduces Task‑Conditioned Latent Alignment (TCLA), a transfer‑learning framework that aligns latent neural representations across recording sessions by conditioning on the intended task. It improves cross‑session decoding when only a handful of labeled target‑session trials are available. This matters because maintaining decoder performance over time without exhaustive retraining is one of the most persistent bottlenecks in brain‑computer interface (BCI) research.

Background: Why This Problem Is Hard

Neural decoding for BCIs relies on mapping high‑dimensional spiking activity to behavioral variables such as limb velocity or eye movement. In practice, the neural signal distribution drifts across days due to electrode micromotion, tissue response, and changes in the animal’s internal state. Consequently, a decoder trained on one session often fails on the next, forcing researchers to collect large labeled datasets for each new session—a costly and time‑consuming process.

Existing mitigation strategies fall into two broad categories:

- Domain adaptation techniques align feature spaces using unsupervised statistics, but they typically ignore the semantic context of the task, which can lead to ambiguous alignments.

- Supervised fine‑tuning retrains the decoder with a few labeled trials from the new session; however, the limited data often under‑determines the model, causing overfitting and unstable performance.

Both approaches struggle because they treat neural variability as a generic nuisance rather than a structured shift that is tightly coupled to the behavioral intent. In real‑world BCI deployments—whether for prosthetic control, neurorehabilitation, or closed‑loop neuromodulation—engineers need a solution that can quickly adapt to new sessions while preserving the rich task‑specific information encoded in the neural population.

What the Researchers Propose

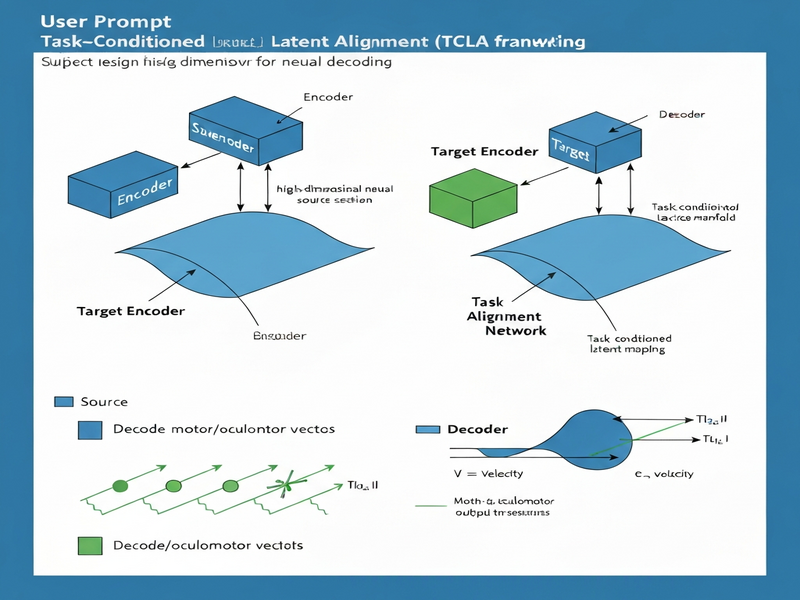

The authors propose a novel framework called Task‑Conditioned Latent Alignment (TCLA). At a conceptual level, TCLA consists of three interacting modules:

- Task Encoder: A lightweight network that ingests high‑level task descriptors (e.g., “reach left”, “saccade up”) and produces a task embedding.

- Session‑Specific Autoencoder: An unsupervised encoder–decoder pair that compresses raw spiking activity into a low‑dimensional latent space for each recording session.

- Latent Alignment Module: A conditioning mechanism that aligns the session‑specific latent vectors to a shared, task‑aware manifold using the task embedding as a guide.

By explicitly conditioning the alignment on the intended behavior, TCLA forces the latent space to preserve task‑relevant structure while discarding session‑specific noise. The result is a unified representation that can be decoded with a single downstream regressor, regardless of the day on which the spikes were recorded.
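The data flow through the three modules can be sketched in a few lines of plain Python. This is a minimal illustration and not the authors' implementation: the class names, the dimensions, the linear encoder, and the scale‑and‑shift form of the conditioning are all assumptions, and the weights are random rather than trained.

```python
import random

def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def rand_matrix(rows, cols, rng):
    """Small random weights; a real model would learn these."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

class TaskEncoder:
    """Hypothetical task encoder: a lookup table from task label to embedding."""
    def __init__(self, tasks, dim, rng):
        self.table = {t: [rng.gauss(0.0, 1.0) for _ in range(dim)] for t in tasks}

    def __call__(self, task):
        return self.table[task]

class SessionEncoder:
    """Encoder half of a per-session autoencoder, reduced to one linear map."""
    def __init__(self, n_neurons, latent_dim, rng):
        self.W = rand_matrix(latent_dim, n_neurons, rng)

    def __call__(self, spike_counts):
        return matvec(self.W, spike_counts)

class LatentAlignment:
    """Assumed conditioning: scale and shift the latent, both computed from the task embedding."""
    def __init__(self, task_dim, latent_dim, rng):
        self.W_scale = rand_matrix(latent_dim, task_dim, rng)
        self.W_shift = rand_matrix(latent_dim, task_dim, rng)

    def __call__(self, z, e):
        scale = [1.0 + s for s in matvec(self.W_scale, e)]
        shift = matvec(self.W_shift, e)
        return [a * zi + b for a, zi, b in zip(scale, z, shift)]

rng = random.Random(0)
task_encoder = TaskEncoder(["reach left", "reach right"], dim=4, rng=rng)
# One encoder per recording session, one shared alignment module.
encoders = {"day1": SessionEncoder(20, 3, rng), "day5": SessionEncoder(20, 3, rng)}
align = LatentAlignment(task_dim=4, latent_dim=3, rng=rng)

spikes = [rng.randint(0, 5) for _ in range(20)]  # binned spike counts, 20 neurons
z_aligned = align(encoders["day5"](spikes), task_encoder("reach left"))
print(len(z_aligned))  # dimensionality of the aligned latent
```

Keeping one encoder per session and a single shared alignment module mirrors the description above: session‑specific structure is absorbed by the encoder, while the downstream decoder only ever sees the aligned latent.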

How It Works in Practice

The operational workflow of TCLA can be broken down into four stages:

- Data Collection: For each session, researchers record spiking activity from motor or oculomotor cortex while the subject performs a set of predefined tasks.

- Session Autoencoding: The raw spike trains are fed into the session‑specific autoencoder, which learns to reconstruct the input from a compact latent vector. This step captures the intrinsic statistical structure of that day’s neural activity.

- Task Conditioning: Simultaneously, the task encoder transforms the known task label into a dense embedding. The latent alignment module then adjusts the session latent vector toward the shared manifold, guided by the task embedding.

- Unified Decoding: A single linear or shallow non‑linear decoder, trained on a small set of labeled trials from the target session, maps the aligned latent vectors to behavioral outputs (e.g., hand velocity).

The key differentiator of TCLA is the conditioning step: rather than aligning latent spaces solely on statistical similarity, it leverages the semantic anchor provided by the task. This reduces the amount of target‑session data needed for effective alignment and prevents the model from conflating unrelated neural drift with genuine task signals.
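To make the role of the task label concrete, here is a toy sketch of alignment from a handful of labeled target trials. It swaps in a much simpler technique than the paper describes, per‑task centroid matching, and every name and number in it (`fit_task_offsets`, the synthetic centroids, the drift vectors) is hypothetical. The drift is deliberately different for each task, so no single global shift could correct both.

```python
import random

def mean(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def fit_task_offsets(source, target_few):
    """Per-task shift that moves the target centroid onto the source centroid.

    source, target_few: dict mapping task label -> list of latent vectors.
    """
    offsets = {}
    for task, target_vecs in target_few.items():
        mu_s, mu_t = mean(source[task]), mean(target_vecs)
        offsets[task] = [s - t for s, t in zip(mu_s, mu_t)]
    return offsets

def align(z, task, offsets):
    """Apply the offset selected by the known task label."""
    return [zi + oi for zi, oi in zip(z, offsets[task])]

def dist(a, b):
    """Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

# Synthetic 2-D latents: each task has a centroid, and the target
# session adds a task-dependent drift (all values are made up).
rng = random.Random(0)
centroids = {"reach left": [-1.0, 0.0], "reach right": [1.0, 0.0]}
drift = {"reach left": [0.8, 0.5], "reach right": [0.6, -0.7]}

def sample(task, shift, n):
    c = centroids[task]
    return [[c[i] + shift[i] + rng.gauss(0.0, 0.1) for i in range(2)]
            for _ in range(n)]

source = {t: sample(t, [0.0, 0.0], 200) for t in centroids}   # early session
target_few = {t: sample(t, drift[t], 5) for t in centroids}   # 5 labeled trials per task
offsets = fit_task_offsets(source, target_few)

# A new target-session trial, before and after alignment.
new_trial = sample("reach left", drift["reach left"], 1)[0]
source_centroid = mean(source["reach left"])
before = dist(new_trial, source_centroid)
after = dist(align(new_trial, "reach left", offsets), source_centroid)
print(f"distance to source centroid: before={before:.2f}, after={after:.2f}")
```

The point of the toy is only that a task label tells the alignment which correction to apply; TCLA's learned alignment module is described as playing that role with a dense task embedding rather than a lookup of offsets.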

Evaluation & Results

The authors validated TCLA on two benchmark datasets collected from macaque monkeys:

- Motor Cortex Dataset: Spike recordings during a center‑out reaching task across multiple days.

- Oculomotor Cortex Dataset: Spike recordings during saccadic eye movements with varying target locations.

For each dataset, they simulated a realistic cross‑session scenario by training a base decoder on an early session and then testing on later sessions with only 5–10 labeled trials available for fine‑tuning. The evaluation metrics included:

- Coefficient of determination (R²) for continuous kinematic variables.

- Mean angular error for directional decoding.

- Stability index measuring performance degradation over successive days.
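For reference, the first two metrics can be computed as follows. This is a generic sketch of the standard definitions, not code from the paper; the function names are illustrative. The stability index is not defined in this summary, so it is left out.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def mean_angular_error(true_deg, pred_deg):
    """Mean absolute angular difference in degrees, wrapped to [0, 180]."""
    errors = []
    for t, p in zip(true_deg, pred_deg):
        diff = abs(t - p) % 360.0
        errors.append(min(diff, 360.0 - diff))  # take the shorter way around the circle
    return sum(errors) / len(errors)

# A perfect prediction scores 1; always predicting the mean scores 0.
print(r_squared([1, 2, 3, 4], [1, 2, 3, 4]))           # 1.0
print(r_squared([1, 2, 3, 4], [2.5, 2.5, 2.5, 2.5]))   # 0.0
# 350° and 10° are 20° apart, not 340°.
print(mean_angular_error([350, 10], [10, 350]))        # 20.0
```

The wrap‑around step matters for directional decoding: without it, a prediction just across the 0°/360° boundary would be scored as a near‑maximal error.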

Key findings:

- Motor task: TCLA achieved an average R² improvement of 0.18 over standard fine‑tuned linear decoders, reaching >0.70 where baseline models fell below 0.55 after three days.

- Oculomotor task: Mean angular error dropped from 28° (baseline) to 12° with TCLA, a 57% reduction.

- The alignment module consistently reduced performance drift, maintaining >85% of the initial session’s accuracy after a week of recordings.
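As a quick check on the oculomotor figure, the relative reduction in mean angular error follows directly from the two reported values:

```latex
\[
\frac{28^\circ - 12^\circ}{28^\circ} = \frac{16}{28} \approx 0.571 \quad (\text{about } 57\%)
\]
```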

These results demonstrate that TCLA not only recovers lost performance with minimal new data but also stabilizes decoding across long‑term experiments, a critical requirement for any practical BCI system.

Why This Matters for AI Systems and Agents

From an engineering perspective, TCLA offers several practical advantages that directly impact the design and deployment of AI‑driven neurotechnology:

- Data Efficiency: By leveraging task semantics, developers can dramatically cut the amount of labeled data needed for each new session, reducing experimental overhead and accelerating iterative design cycles.

- Modular Integration: The three‑module architecture fits naturally into existing BCI pipelines. The session autoencoder can be swapped for any encoder that matches the hardware’s sampling rate, while the task encoder can be extended to richer contextual cues (e.g., intent signals from language models).

- Robustness to Non‑Stationarity: Aligning latent spaces rather than raw spikes mitigates the effects of electrode drift, a perennial challenge in chronic implants. If it holds up in chronic use, this robustness could translate to longer usable device lifetimes and fewer surgical revisions.

- Scalable to Multi‑Task Settings: Because the task embedding is explicit, the same alignment framework can support a repertoire of behaviors without retraining separate decoders for each, simplifying the software stack for multi‑modal agents.

For teams building autonomous agents that rely on neural feedback, such as closed‑loop prosthetic controllers or neuroadaptive AI assistants, TCLA provides a principled way to keep the neural interface stable while the agent’s higher‑level policies evolve.

What Comes Next

While TCLA marks a significant step forward, the authors acknowledge several limitations that open avenues for future research:

- Task Representation Granularity: Current experiments use discrete task labels. Extending the task encoder to handle continuous intent vectors or hierarchical task structures could broaden applicability to more complex behaviors.

- Real‑Time Constraints: The alignment process adds computational overhead. Optimizing the module for low‑latency inference on edge hardware is essential for closed‑loop applications.

- Generalization Beyond Motor Domains: Testing TCLA on sensory decoding (e.g., auditory or somatosensory cortex) and on human clinical datasets would show how well it generalizes beyond the motor and oculomotor recordings used here.

- Integration with Reinforcement Learning Agents: Embedding the aligned latent space as a state representation for RL agents could enable adaptive neuro‑feedback loops that co‑learn with the user.

Addressing these challenges will likely involve cross‑disciplinary collaborations between neuroscientists, machine‑learning researchers, and hardware engineers. The framework’s modular nature makes it a strong candidate for such joint efforts.

For a deeper dive into the methodology, full experimental details, and code repositories, consult the original preprint: Task‑Conditioned Latent Alignment for Cross‑Session Neural Decoding. Additional resources, including implementation guides and related blog posts, are available on ubos.tech.
