- Updated: January 24, 2026

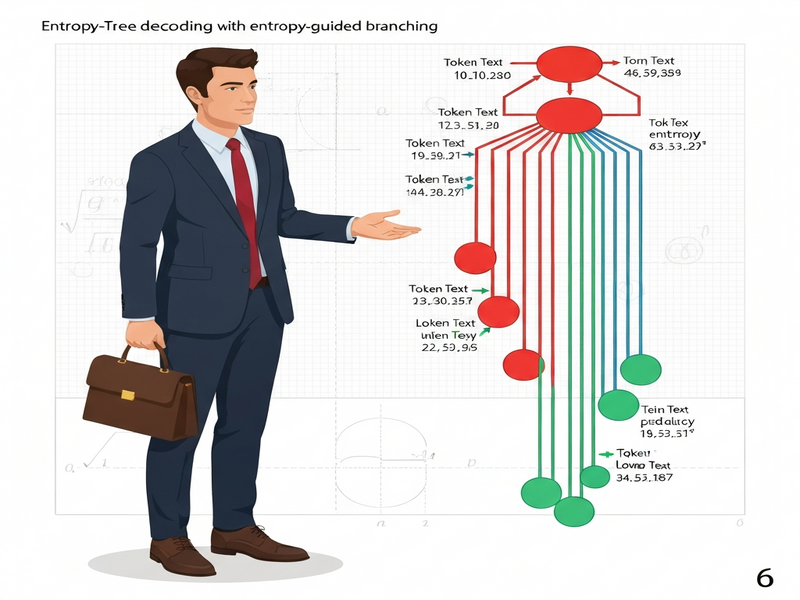

Entropy-Tree: Tree‑Based Decoding with Entropy‑Guided Exploration

Abstract: Large language models (LLMs) achieve impressive reasoning performance, yet conventional decoding strategies either explore blindly (random sampling) or redundantly (independent multi‑sampling). Entropy‑Tree is a tree‑based decoding method that uses the model's entropy as the signal for branching decisions, expanding the search tree only at positions where the model exhibits genuine uncertainty. On reasoning benchmarks, the approach is reported to be more accurate than the Multi‑chain baseline, and its predictive entropy attains a higher AUROC, indicating better‑calibrated uncertainty estimates.

Key Contributions

- Entropy‑guided branching that reduces unnecessary exploration.

- Unified framework for efficient structured search and reliable uncertainty estimation.

- State‑of‑the‑art performance on multiple reasoning datasets (higher pass@k scores).

Method Overview

The Entropy‑Tree algorithm constructs a decoding tree in which each node represents a partial token sequence. At each step, the model's predictive entropy (the entropy of its next‑token distribution) is computed. If the entropy exceeds a predefined threshold, the algorithm branches and generates multiple candidate continuations; otherwise, it proceeds deterministically along a single continuation. This selective expansion spends extra computation only where the model is uncertain, balancing exploration against computational cost.
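The branching rule described above can be sketched in a few lines. This is a minimal illustration rather than the authors' implementation: the function names (`entropy_tree_decode`, `toy_model`), the choice to branch over the top `branch_width` tokens, and the toy distributions are all assumptions made for the example.

```python
import math
from typing import Callable, Dict, List, Tuple

Distribution = Dict[str, float]


def entropy(dist: Distribution) -> float:
    """Shannon entropy (in nats) of a next-token distribution."""
    return -sum(p * math.log(p) for p in dist.values() if p > 0.0)


def entropy_tree_decode(
    next_dist: Callable[[Tuple[str, ...]], Distribution],
    threshold: float,
    branch_width: int = 2,   # assumed: branch over the top-k tokens
    max_len: int = 4,
    eos: str = "<eos>",
) -> List[Tuple[str, ...]]:
    """Return every root-to-leaf sequence of the decoding tree."""
    finished: List[Tuple[str, ...]] = []
    frontier: List[Tuple[str, ...]] = [()]  # start from the empty prefix
    while frontier:
        prefix = frontier.pop()
        if len(prefix) >= max_len or (prefix and prefix[-1] == eos):
            finished.append(prefix)
            continue
        dist = next_dist(prefix)
        ranked = sorted(dist, key=dist.get, reverse=True)
        # Branch only when the model is uncertain; otherwise go greedy.
        k = branch_width if entropy(dist) > threshold else 1
        for token in ranked[:k]:
            frontier.append(prefix + (token,))
    return finished


def toy_model(prefix: Tuple[str, ...]) -> Distribution:
    """Hypothetical stand-in for an LLM: uncertain first, confident after."""
    if not prefix:
        return {"2": 0.50, "3": 0.45, "4": 0.05}  # entropy is about 0.86 nats
    return {"<eos>": 0.97, "+": 0.03}             # entropy is about 0.13 nats


leaves = entropy_tree_decode(toy_model, threshold=0.5)
print(sorted(leaves))
```

With a threshold of 0.5 nats, the toy model branches once at the root and then continues greedily, so the tree has two leaves. Raising the threshold above every step's entropy reduces the procedure to plain greedy decoding.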

Experimental Results

Extensive experiments on benchmarks such as GSM‑8K, MathQA, and CommonsenseQA show that Entropy‑Tree consistently improves pass@k metrics across various model sizes (e.g., LLaMA‑13B, GPT‑4). Moreover, the entropy‑based confidence scores correlate strongly with correctness, offering better calibration than traditional metrics like log‑probability.
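For readers unfamiliar with the two metrics mentioned here, the sketch below shows one common way to compute them. It assumes the widely used combinatorial pass@k estimator and a pairwise AUROC with negative entropy as the confidence score; the post does not state which estimators the paper uses, and the sample numbers are made up for illustration.

```python
from math import comb
from typing import Sequence


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k samples, drawn without
    replacement from n generations of which c are correct, is correct."""
    if n - c < k:
        return 1.0  # every size-k subset must contain a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a correct answer (label 1) receives a higher
    confidence score than an incorrect one (label 0); ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one example of each class")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Illustrative values only: lower entropy should mean higher confidence,
# so the confidence score is the negated entropy.
entropies = [0.1, 0.2, 0.9, 0.8]
correct = [1, 1, 0, 0]
confidence = [-h for h in entropies]

print(pass_at_k(n=10, c=3, k=1))
print(auroc(confidence, correct))
```

An AUROC of 1.0 means every correct answer had lower entropy than every incorrect one, while 0.5 means entropy carries no information about correctness.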

Implications for AI Research

By integrating uncertainty quantification directly into the decoding process, Entropy‑Tree opens new avenues for trustworthy AI systems, especially in high‑stakes reasoning tasks where confidence estimation is crucial.

Further Reading

Read the full paper on arXiv and explore related resources on UBOS Research.

Categories: Research, AI, LLM Decoding