cognee - Memory for AI Agents in 5 lines of code

Demo · Learn more · Join Discord

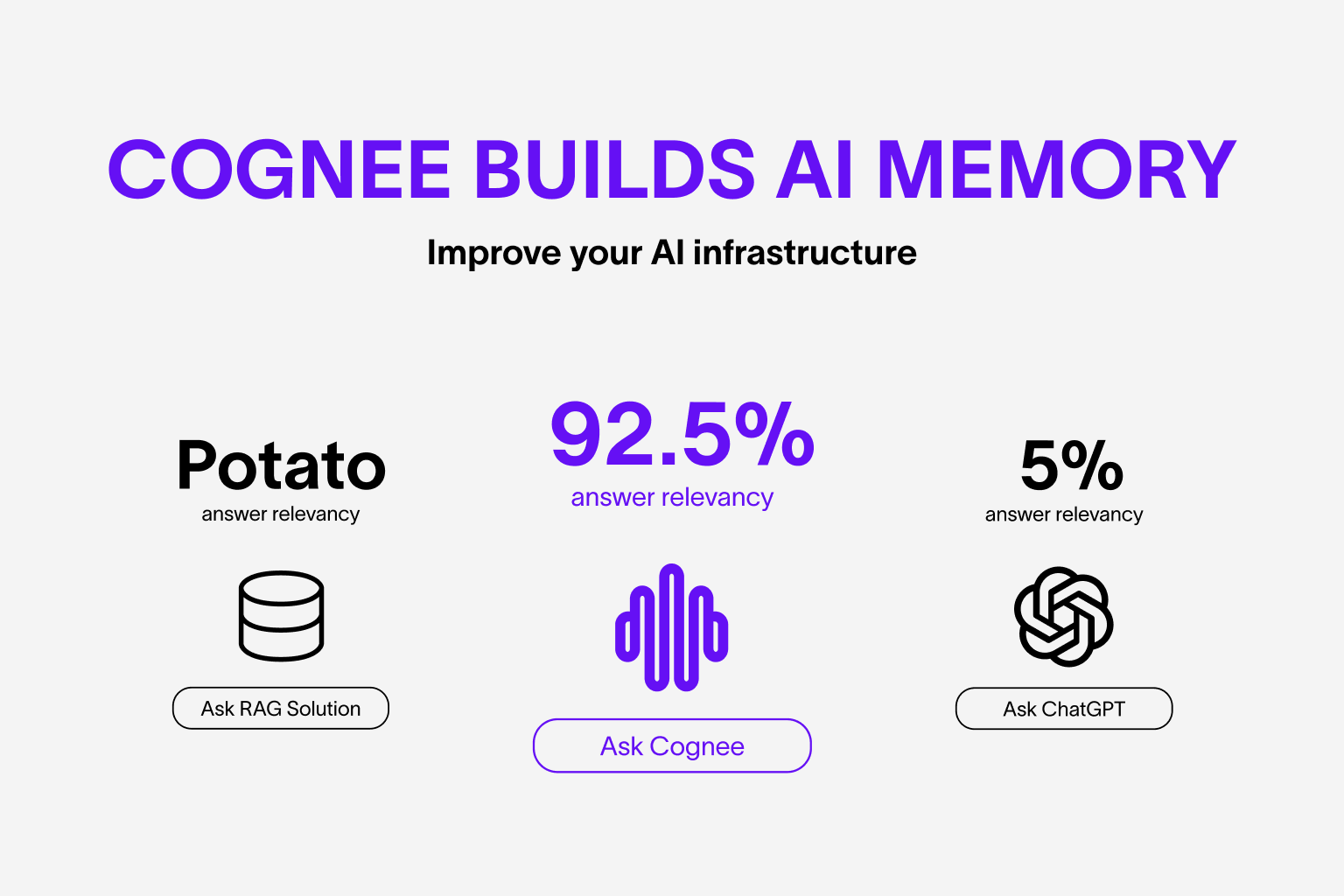

Build dynamic Agent memory using scalable, modular ECL (Extract, Cognify, Load) pipelines.

More on use-cases and evals

Features

- Interconnect and retrieve your past conversations, documents, images and audio transcriptions

- Reduce hallucinations, developer effort, and cost

- Load data to graph and vector databases using only Pydantic

- Manipulate your data while ingesting from 30+ data sources

Get Started

Get started quickly with a Google Colab notebook or starter repo

Contributing

Your contributions are at the core of making this a true open source project. Any contributions you make are greatly appreciated. See CONTRIBUTING.md for more information.

📦 Installation

You can install cognee with pip, poetry, uv, or any other Python package manager.

With pip

pip install cognee
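To confirm the install worked, a quick check like the one below can help. It is only a sketch using Python's standard `importlib.metadata`; the helper name `installed_version` is made up for this example and is not part of cognee.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("cognee")
    print(f"cognee {version}" if version else "cognee is not installed")
```

If this reports that cognee is not installed, check that you ran the script with the same Python environment you installed into.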

💻 Basic Usage

Setup

import os

os.environ["LLM_API_KEY"] = "YOUR OPENAI_API_KEY"

You can also set the variables by creating a .env file from our template. To use a different LLM provider, check out our documentation.
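If you want to read a .env file yourself in a script (for example, to check that `LLM_API_KEY` is present before running anything), the sketch below shows one way using only the standard library. The `load_env_file` helper is hypothetical and handles only plain `KEY=VALUE` lines; it is not how cognee reads the file, and it does not reproduce the contents of the template.

```python
import os
from pathlib import Path


def load_env_file(path: str = ".env") -> dict:
    """Parse simple KEY=VALUE lines and export them via os.environ.

    Blank lines, lines starting with '#', and lines without '=' are skipped.
    Variables already set in the environment are left untouched.
    Returns only the variables this call actually set.
    """
    loaded = {}
    env_path = Path(path)
    if not env_path.is_file():
        return loaded
    for raw_line in env_path.read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key = key.strip()
        value = value.strip().strip("'\"")  # drop surrounding quotes, if any
        if key and key not in os.environ:
            os.environ[key] = value
            loaded[key] = value
    return loaded


if __name__ == "__main__":
    load_env_file()
    if "LLM_API_KEY" not in os.environ:
        print("LLM_API_KEY is not set")
```

Values that are already exported in your shell take precedence here, so a key set on the command line is not overwritten by the file.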

Simple example

This script will run the default pipeline:

import cognee
import asyncio


async def main():
    # Add text to cognee
    await cognee.add("Natural language processing (NLP) is an interdisciplinary subfield of computer science and information retrieval.")

    # Generate the knowledge graph
    await cognee.cognify()

    # Query the knowledge graph
    results = await cognee.search("Tell me about NLP")

    # Display the results
    for result in results:
        print(result)


if __name__ == '__main__':
    asyncio.run(main())

Example output:

Natural Language Processing (NLP) is a cross-disciplinary and interdisciplinary field that involves computer science and information retrieval. It focuses on the interaction between computers and human language, enabling machines to understand and process natural language.
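The same add, cognify, search sequence can be wrapped in a small helper when you have more than one piece of text. This is a sketch, not part of cognee: `remember_and_ask` is a hypothetical name, and it assumes `cognee.add` can be awaited once per text before a single `cognee.cognify()` call. Only the three calls from the example above are used.

```python
async def remember_and_ask(memory, texts, query):
    """Add each text, build the knowledge graph once, then run one search.

    `memory` is any object exposing awaitable add(), cognify() and search(),
    such as the cognee module used in the example above (an assumption of
    this sketch, which passes the module in rather than importing it).
    """
    for text in texts:
        await memory.add(text)
    await memory.cognify()
    return await memory.search(query)


# Usage with cognee (needs `pip install cognee` and LLM_API_KEY to be set):
#
#   import asyncio
#   import cognee
#
#   results = asyncio.run(
#       remember_and_ask(cognee, ["first note", "second note"], "Tell me about NLP")
#   )
#   for result in results:
#       print(result)
```

Passing the module in as `memory` keeps the helper easy to try with a stand-in object, so you can exercise the call order without an API key.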

Graph visualization:

Open in browser.


For more advanced usage, have a look at our documentation.

Understand our architecture

Demos

- What is AI memory: Learn about cognee

- Simple GraphRAG demo

- cognee with Ollama: cognee with local models

Code of Conduct

We are committed to making open source an enjoyable and respectful experience for our community. See CODE_OF_CONDUCT for more information.

💫 Contributors

Star History

Cognee

Project Details

- topoteretes/cognee

- Apache License 2.0

- Last Updated: 4/22/2025
